Druid

This page covers how to migrate data from Druid to Rockset in a straightforward way. This includes:

- Copying Druid segments and metadata using the popular open source dump-segment tool

- Uploading Druid data to Amazon S3 using the AWS CLI

- Creating an S3 Integration to securely connect buckets in your AWS account with Rockset

Copy Druid Segments and Metadata

The dump-segment tool can be used to export Druid segments and metadata so they can be loaded into Rockset. Its output may not be an exact copy of your data: it can exclude some metadata or complex metric values.

To run the tool, point it at a segment directory and specify an output file:

java -classpath "/my/druid/lib/*" -Ddruid.extensions.loadList="[]" org.apache.druid.cli.Main \
  tools dump-segment \
  --directory /home/druid/path/to/segment/ \
  --out /home/druid/output.txt

By default, dump-segment writes the rows in each Druid segment as newline-separated JSON objects and includes the column data. You can provide command line arguments to extract specific data types, like metadata or bitmaps, or to format the exported data.

| Argument | Description | Required? |
|---|---|---|
| --directory file | Directory containing segment data. This could be generated by unzipping an "index.zip" from deep storage. | yes |
| --out file | File to write to, or omit to write to stdout. | no |
| --dump TYPE | Dump either 'rows' (default), 'metadata', or 'bitmaps' | no |
| --column columnName | Column to include. Specify multiple times for multiple columns, or omit to include all columns. | no |
| --filter json | JSON-encoded query filter. Omit to include all rows. Only used if dumping rows. | no |
| --time-iso8601 | Format __time column in ISO8601 format rather than long. Only used if dumping rows. | no |
| --decompress-bitmaps | Dump bitmaps as arrays rather than base64-encoded compressed bitmaps. Only used if dumping bitmaps. | no |
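Because the default row dump is newline-separated JSON, it can be worth sanity-checking the output file before uploading it. The following is a minimal sketch in Python, not part of Druid or Rockset: the function name `summarize_rows` and the sample rows are hypothetical, and it assumes every non-blank line holds one JSON object.

```python
import json
from collections import Counter
from typing import Iterable, Tuple


def summarize_rows(lines: Iterable[str]) -> Tuple[int, Counter]:
    """Count rows and how often each column appears in newline-separated JSON."""
    row_count = 0
    columns: Counter = Counter()
    for line_number, line in enumerate(lines, start=1):
        line = line.strip()
        if not line:
            continue  # tolerate blank lines between rows
        try:
            row = json.loads(line)
        except json.JSONDecodeError as exc:
            raise ValueError(f"line {line_number} is not valid JSON: {exc}") from exc
        if not isinstance(row, dict):
            raise ValueError(f"line {line_number} is not a JSON object")
        row_count += 1
        columns.update(row.keys())
    return row_count, columns


if __name__ == "__main__":
    # Hypothetical sample rows for illustration, not real dump-segment output.
    sample = [
        '{"__time": 1442018818771, "channel": "#en.wikipedia", "added": 36}',
        '{"__time": 1442018820496, "channel": "#ca.wikipedia", "added": 17}',
    ]
    count, cols = summarize_rows(sample)
    print(count, sorted(cols))
```

To check a real export, pass an open file handle (for example `open("/home/druid/output.txt")`) instead of the sample list. A column that appears in fewer rows than the total count may indicate values that were not exported.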

Uploading Druid Data to S3

For the following steps, you must have write permissions for the AWS S3 bucket.

If you do not have access, please invite your AWS administrator to Rockset.

The steps below show how to upload a Druid data file to S3 using the AWS Command Line Interface (CLI). With multipart upload, you can upload a single large file of up to 5 TB. If your Druid dataset is smaller and does not exceed 160 GB, you can upload it directly through the Amazon S3 console.

If you have larger scale data and decide to go the AWS CLI route, you will first need to install and configure the AWS CLI.

You can then initiate a multipart upload. Multipart upload is a three-step process: You initiate the upload, you upload the object parts, and after you have uploaded all the parts, you complete the multipart upload. Upon receiving the complete multipart upload request, Amazon S3 constructs the object from the uploaded parts, and you can then access the object just as you would any other object in your bucket.

Upload a Part

When you upload a part, you must specify a part number. You can choose any part number between 1 and 10,000. A part number uniquely identifies a part and its position in the object you are uploading. The part numbers you choose don't need to be consecutive (for example, they can be 1, 5, and 14). If you upload a new part using the same part number as a previously uploaded part, the previously uploaded part is overwritten.

The following command uploads the first part in a multipart upload initiated with the create-multipart-upload command:

aws s3api upload-part --bucket my-bucket --key 'multipart/01' --part-number 1 --body part01 --upload-id "dfRtDYU0WWCCcH43C3WFbkRONycyCpTJJvxu2i5GYkZljF.Yxwh6XG7WfS2vC4to6HiV6Yjlx.cph0gtNBtJ8P3URCSbB7rjxI5iEwVDmgaXZOGgkk5nVTW16HOQ5l0R"

The --body option takes the name or path of a local file to upload (do not use the file:// prefix). The minimum part size is 5 MB, except for the last part. The upload ID is returned by create-multipart-upload and can also be retrieved with list-multipart-uploads. The bucket and key are specified when you create the multipart upload.

The command returns output similar to the following:

{
  "ETag": "\"e868e0f4719e394144ef36531ee6824c\""
}

Save the ETag value of each part for later. These values are required to complete the multipart upload.
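The part-numbering and size rules above can be sketched as a small planning helper. This is an illustrative Python sketch, not an AWS tool: `plan_parts` and the 64 MiB default part size are hypothetical choices, and the 5 MB minimum is treated as 5 MiB.

```python
from typing import List, Tuple

MIN_PART_SIZE = 5 * 1024 * 1024  # minimum part size (5 MB in the text, taken as 5 MiB)
MAX_PARTS = 10_000               # part numbers range from 1 to 10,000


def plan_parts(total_size: int,
               part_size: int = 64 * 1024 * 1024) -> List[Tuple[int, int, int]]:
    """Return (part_number, offset, length) for each part of a file."""
    if total_size <= 0:
        raise ValueError("total_size must be positive")
    if part_size < MIN_PART_SIZE:
        raise ValueError("part_size is below the 5 MB minimum")
    # Grow the part size if the file would otherwise need more than 10,000 parts.
    part_size = max(part_size, (total_size + MAX_PARTS - 1) // MAX_PARTS)
    parts = []
    offset = 0
    part_number = 1
    while offset < total_size:
        # Only the last part may be shorter than part_size.
        length = min(part_size, total_size - offset)
        parts.append((part_number, offset, length))
        offset += length
        part_number += 1
    return parts


if __name__ == "__main__":
    for number, offset, length in plan_parts(150 * 1024 * 1024):
        print(number, offset, length)
```

Each tuple corresponds to one upload-part call: the part number goes to --part-number, and --body should point to a local file holding that byte range.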

Complete the Multipart Upload

When you complete a multipart upload, Amazon S3 creates an object by concatenating the parts in ascending order based on the part number. If any object metadata was provided in the initiate multipart upload request, Amazon S3 associates that metadata with the object. After a successful complete request, the parts no longer exist.

The following command completes a multipart upload for the key multipart/01 in the bucket my-bucket:

aws s3api complete-multipart-upload --multipart-upload file://mpustruct --bucket my-bucket --key 'multipart/01' --upload-id dfRtDYU0WWCCcH43C3WFbkRONycyCpTJJvxu2i5GYkZljF.Yxwh6XG7WfS2vC4to6HiV6Yjlx.cph0gtNBtJ8P3URCSbB7rjxI5iEwVDmgaXZOGgkk5nVTW16HOQ5l0R

The --multipart-upload option in the above command takes a JSON structure that describes the parts of the multipart upload that should be reassembled into the complete file. In this example, the file:// prefix is used to load the JSON structure from a file named mpustruct in the local folder.

mpustruct:

{
  "Parts": [
    {
      "ETag": "e868e0f4719e394144ef36531ee6824c",
      "PartNumber": 1
    },
    {
      "ETag": "6bb2b12753d66fe86da4998aa33fffb0",
      "PartNumber": 2
    },
    {
      "ETag": "d0a0112e841abec9c9ec83406f0159c8",
      "PartNumber": 3
    }
  ]
}

The ETag value for each part is provided as output each time you upload a part using the upload-part command and can also be retrieved by calling list-parts or calculated by taking the MD5 checksum of each part.

Output:

{
  "ETag": "\"3944a9f7a4faab7f78788ff6210f63f0-3\"",
  "Bucket": "my-bucket",
  "Location": "https://my-bucket.s3.amazonaws.com/multipart%2F01",
  "Key": "multipart/01"
}
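Writing the mpustruct file by hand becomes error-prone with many parts. The sketch below, in Python, shows one way it could be generated from the ETags you saved. The helper names `part_etag` and `build_mpustruct` are hypothetical. It assumes, as stated above, that a part's ETag equals the MD5 checksum of its bytes, which may not hold for every bucket configuration.

```python
import hashlib
import json
from typing import Dict


def part_etag(part_bytes: bytes) -> str:
    """MD5 hex digest of one part's bytes."""
    return hashlib.md5(part_bytes).hexdigest()


def build_mpustruct(etags_by_part: Dict[int, str]) -> str:
    """Build the JSON passed to --multipart-upload from {part_number: etag}."""
    parts = [
        # upload-part prints the ETag wrapped in quotes; strip them here.
        {"ETag": etags_by_part[number].strip('"'), "PartNumber": number}
        for number in sorted(etags_by_part)  # ascending part-number order
    ]
    return json.dumps({"Parts": parts}, indent=2)


if __name__ == "__main__":
    saved = {
        1: '"e868e0f4719e394144ef36531ee6824c"',
        2: '"6bb2b12753d66fe86da4998aa33fffb0"',
    }
    print(build_mpustruct(saved))
```

Saving the returned string to a file named mpustruct lets you pass it with the file:// prefix as in the command above.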

Create an S3 Integration

The steps below show how to set up an Amazon S3 integration using AWS Cross-Account IAM Roles or AWS Access Keys (deprecated). An integration can provide access to one or more S3 buckets within your AWS account. You can use an integration to create collections that sync data from your S3 buckets.

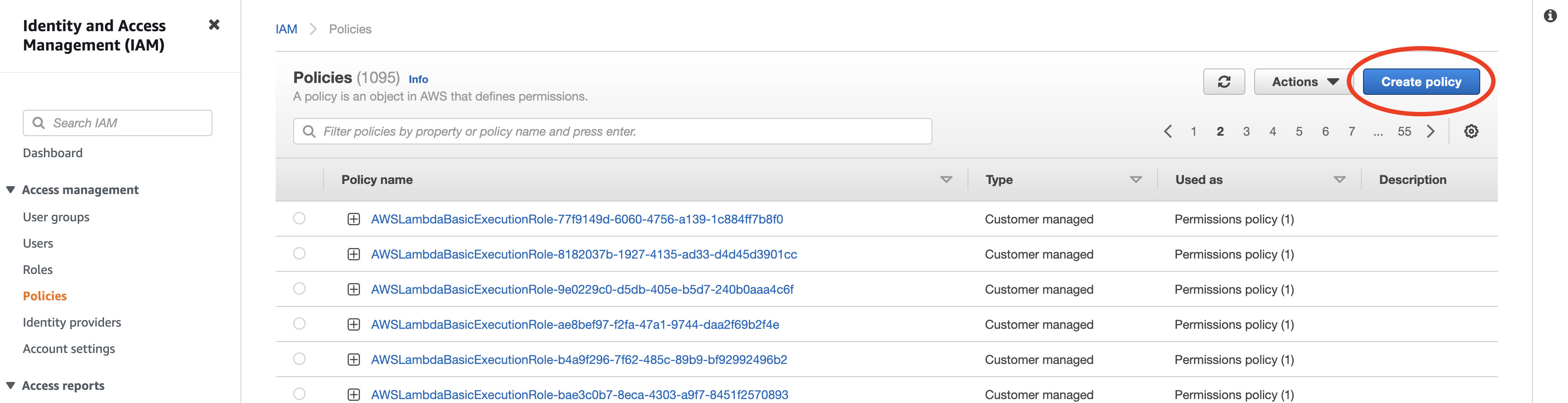

Step 1: Configure AWS IAM Policy

- Navigate to the IAM Service in the AWS Management Console.

- Set up a new policy by navigating to Policies and clicking Create policy.

  If you already have a policy set up for Rockset, you may update that existing policy.

  For more details, refer to AWS Documentation on IAM Policies.

- Set up read-only access to your S3 bucket. You can switch to the JSON tab and paste the policy shown below. You must replace <your-bucket> with the name of your S3 bucket. If you already have a Rockset policy set up, you can add the body of the Statement attribute to it.

{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Action": ["s3:List*", "s3:GetObject"],
      "Resource": ["arn:aws:s3:::<your-bucket>", "arn:aws:s3:::<your-bucket>/*"]
    }
  ]
}

Configuration Tip

If you are attempting to restrict the policy to subdirectory /a/b, update the Resource object as follows:

"Resource": ["arn:aws:s3:::<your-bucket>", "arn:aws:s3:::<your-bucket>/a/b/*"]

- Optionally, if you have an S3 bucket that is encrypted with a KMS key, append the following statement to the Statement attribute above.

{
  "Sid": "Decrypt",
  "Effect": "Allow",
  "Action": ["kms:Decrypt"],
  "Resource": ["arn:aws:kms:<your-region>:<your-account>:key/<your-key-id>"]
}

- Save the newly created or updated policy and give it a descriptive name. You will attach this policy to a user or role in the next step.

Why these Permissions?

- s3:List* - Required. Rockset uses the s3:ListBucket and s3:ListAllMyBuckets permissions to read bucket and object metadata.

- s3:GetObject - Required to retrieve objects from your Amazon S3 bucket.

Advanced Permissions

You can set up permissions for multiple buckets or for specific paths by modifying the Resource ARNs. The format of the ARN for S3 is as follows: arn:aws:s3:::bucket_name/key_name.

You can substitute the following resources in the policy above to grant access to multiple buckets or prefixes as shown below:

- All paths under mybucket/salesdata:
  - arn:aws:s3:::mybucket
  - arn:aws:s3:::mybucket/salesdata/*

- All buckets starting with sales:
  - arn:aws:s3:::sales*
  - arn:aws:s3:::sales*/*

- All buckets in your account:
  - arn:aws:s3:::*
  - arn:aws:s3:::*/*

For more details on how to specify a resource path, refer to AWS documentation on S3 ARNs.
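If you manage several buckets or prefixes, generating the policy document can help avoid copy-and-paste mistakes in the Resource ARNs. The following Python sketch builds the same policy shape shown in Step 1. The function `build_read_policy` and its parameters are hypothetical and are not part of any AWS or Rockset library.

```python
import json
from typing import Dict, List, Optional


def build_read_policy(bucket: str,
                      prefix: Optional[str] = None,
                      kms_key_arn: Optional[str] = None) -> Dict:
    """Build the read-only policy from Step 1 for a bucket name or bucket pattern."""
    # Restrict object access to a prefix such as "a/b" when one is given.
    object_path = f"{prefix.strip('/')}/*" if prefix else "*"
    statements: List[Dict] = [
        {
            "Effect": "Allow",
            "Action": ["s3:List*", "s3:GetObject"],
            "Resource": [
                f"arn:aws:s3:::{bucket}",
                f"arn:aws:s3:::{bucket}/{object_path}",
            ],
        }
    ]
    if kms_key_arn:
        # Optional statement for buckets encrypted with a KMS key.
        statements.append({
            "Sid": "Decrypt",
            "Effect": "Allow",
            "Action": ["kms:Decrypt"],
            "Resource": [kms_key_arn],
        })
    return {"Version": "2012-10-17", "Statement": statements}


if __name__ == "__main__":
    print(json.dumps(build_read_policy("mybucket", prefix="salesdata"), indent=2))
```

Passing a pattern such as `sales*` or `*` as the bucket argument yields the multi-bucket ARNs listed above. The printed JSON can be pasted into the JSON tab of the policy editor.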

Step 2: Configure Role / Access Key

There are two methods by which you can grant Rockset permission to access your AWS resources. Although Access Keys are supported, Cross-Account roles are strongly recommended as they are more secure and easier to manage.

AWS Cross-Account IAM Role

The most secure way to grant Rockset access to your AWS account is to give Rockset's account cross-account access. To do so, you need to create an IAM Role that Rockset can assume and attach your newly created policy to it.

You will need information from the Rockset Console to create and save this integration.

-

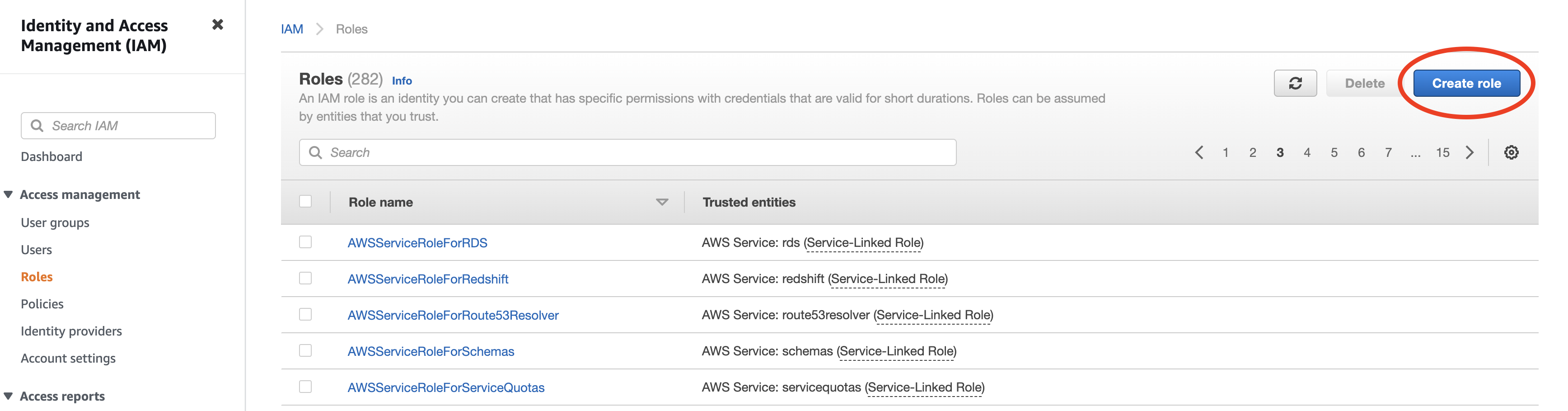

Navigate to the IAM service in the AWS Management Console.

-

Setup a new role by navigating to Roles and clicking "Create role".

If you already have a role for Rockset set up, you may re-use it and either add or update the above policy directly.

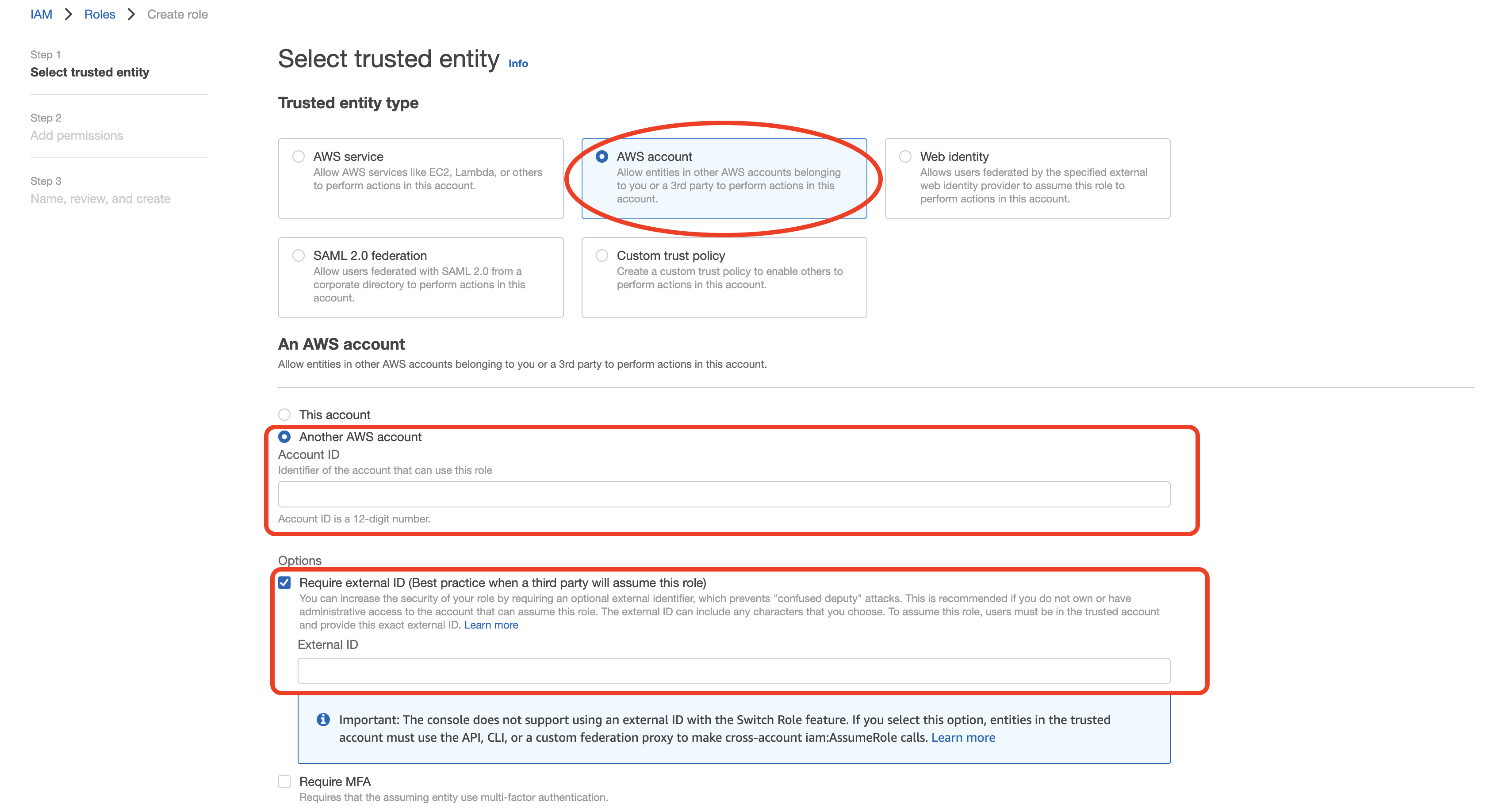

-

Select "Another AWS account" as type of trusted entity, and tick the box for "Require External ID". Fill in the Account ID and External ID fields with the values (Rockset Account ID and External ID respectively) found on the Integration page of the Rockset Console (under the Cross-Account Role Option). Click to continue.

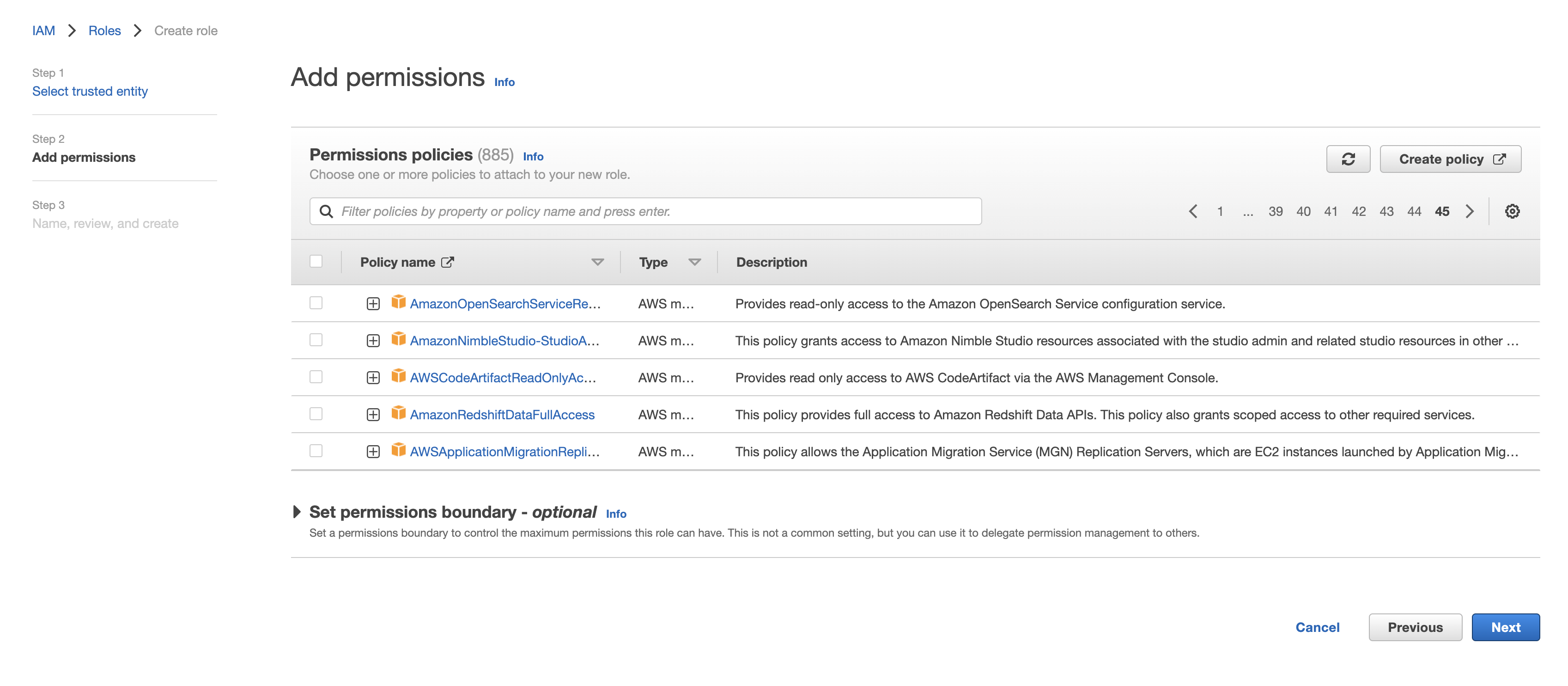

-

Choose the policy created for this role in Step 1 (or follow Step 1 now to create the policy if needed). Click to continue.

-

Optionally, add any tags and click "Next". Name the role descriptively (such as rockset-role), then record the Role ARN for the Rockset integration in the Rockset Console.

AWS Access Key (deprecated)

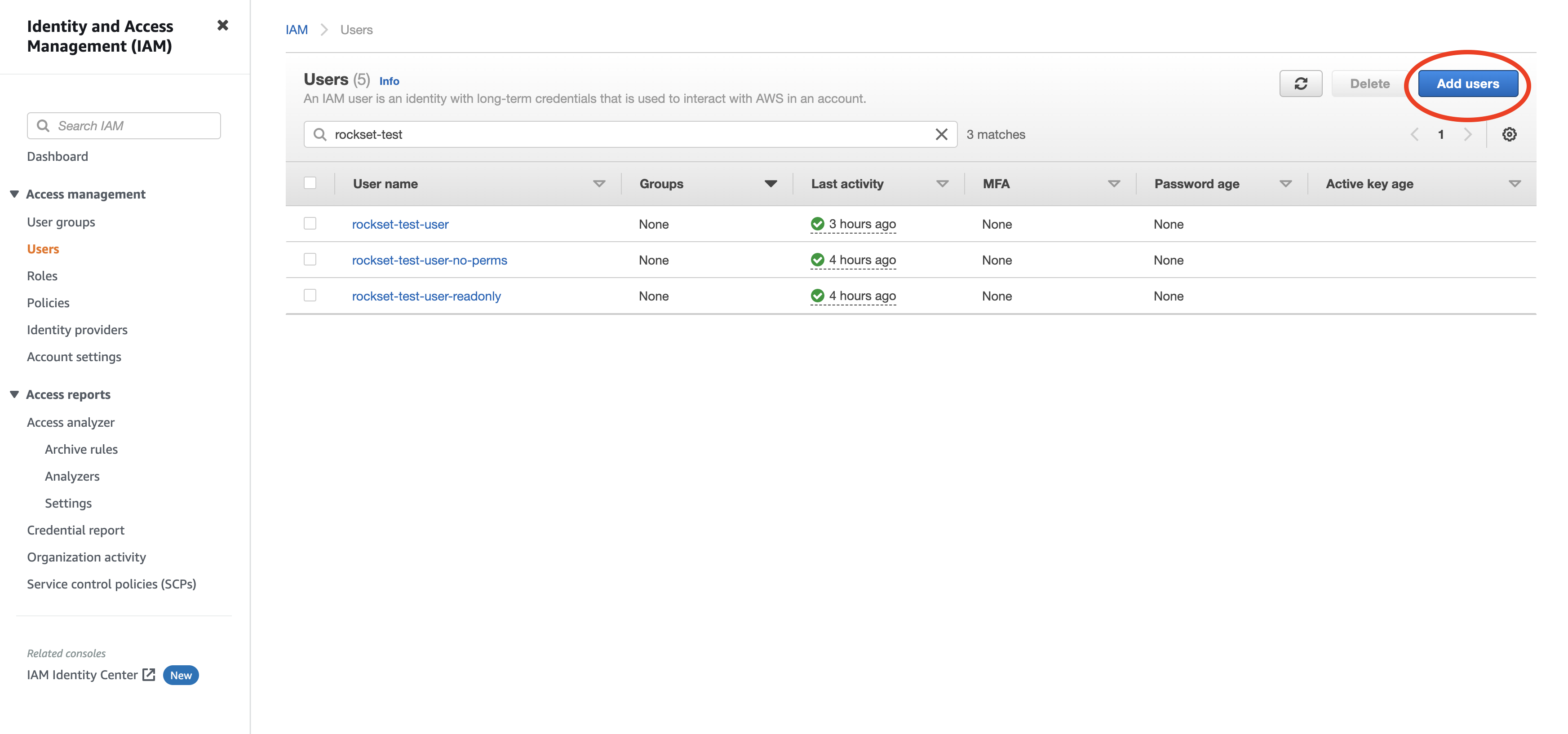

Navigate to the IAM service in the AWS Management Console.

-

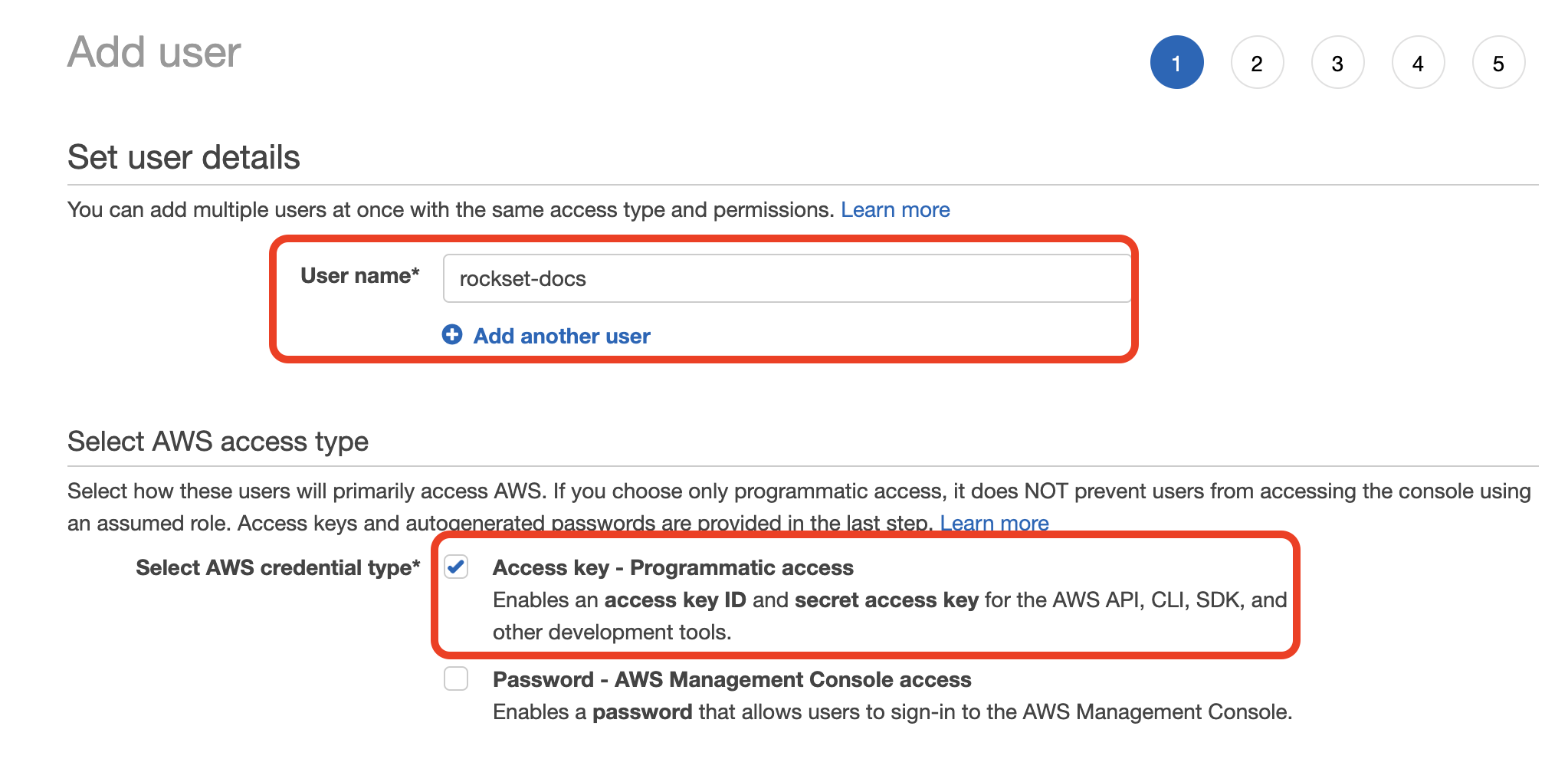

Create a new user by navigating to Users and clicking "Add User".

-

Enter a name for the user and check the "Programmatic access" option. Click to continue.

-

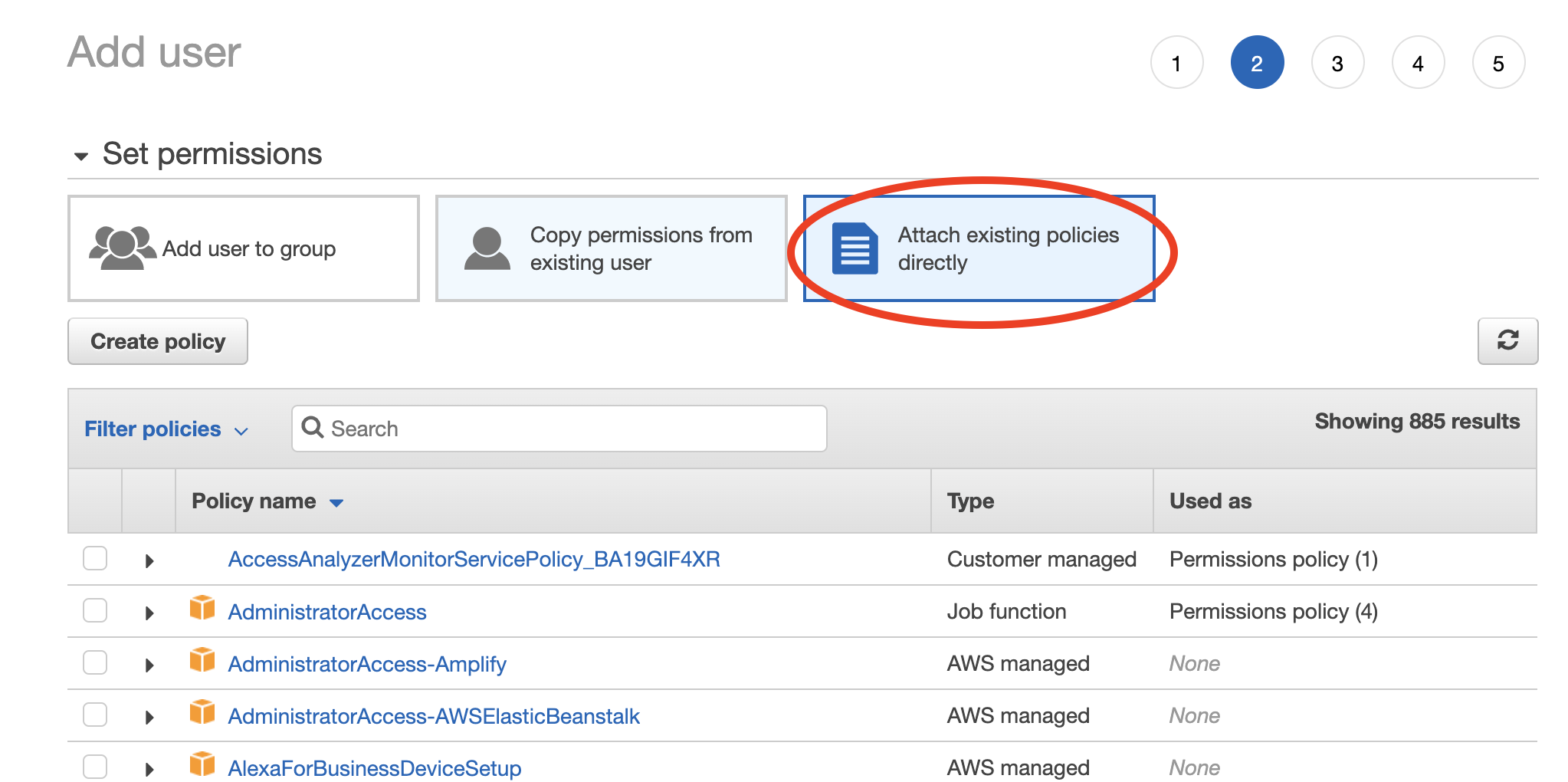

Choose "Attach existing policies directly" then select the policy you created in Step 1. Click through the remaining steps to finish creating the user.

-

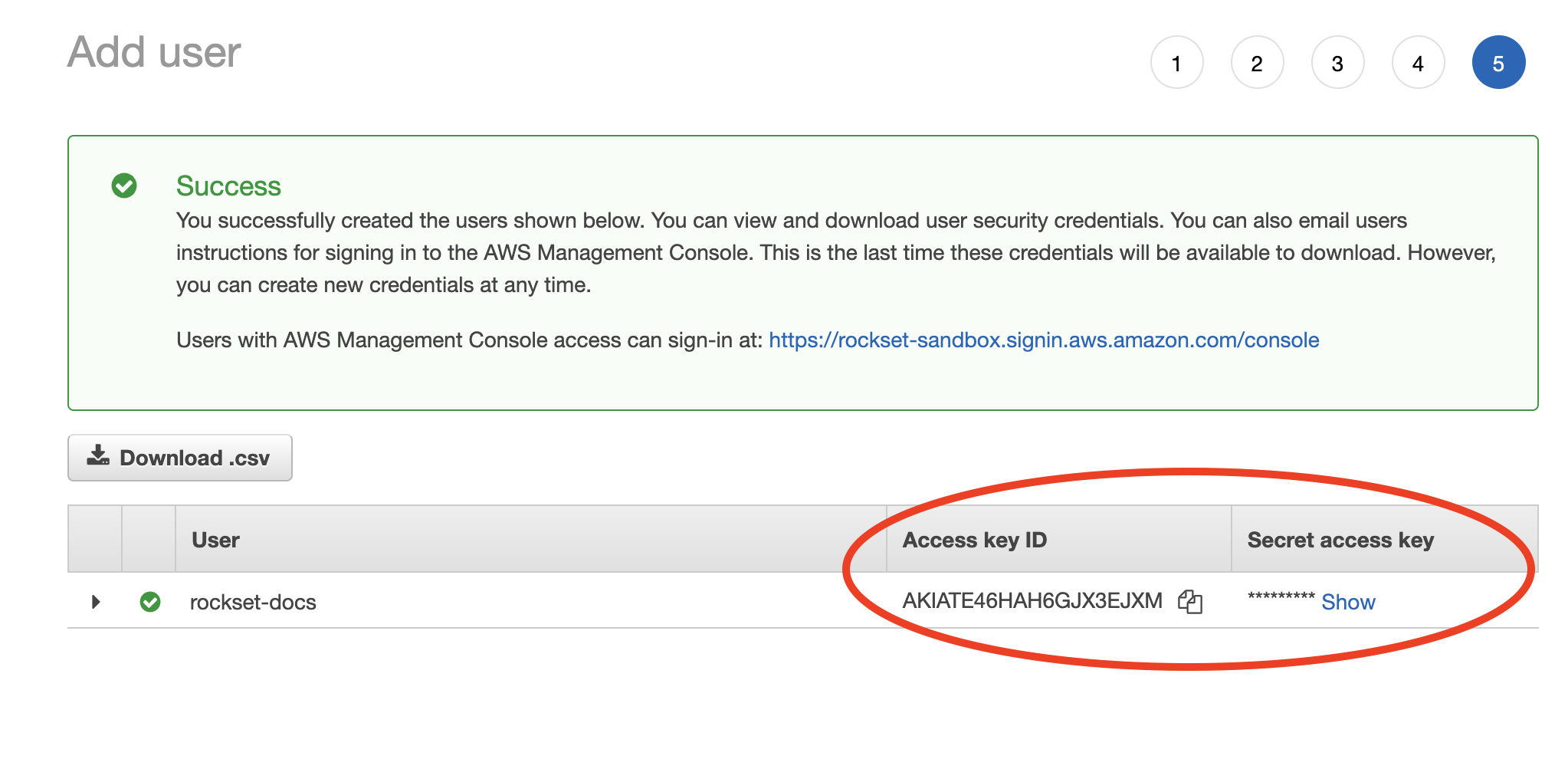

When the new user is successfully created you should see the Access key ID and Secret access key displayed on the screen.

-

Record both these values in the Rockset Console.

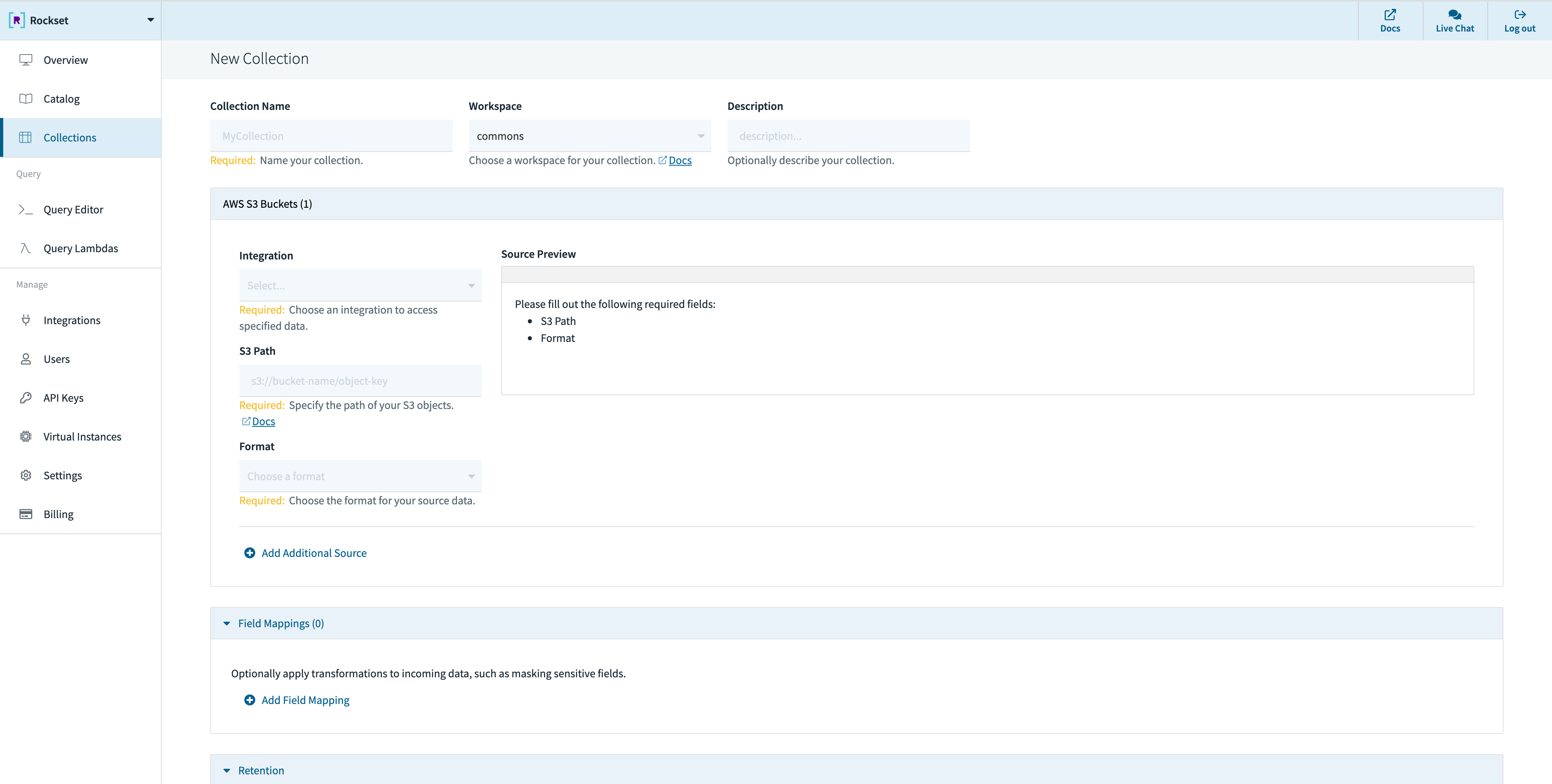

Create a Collection

When creating a collection in Rockset, you can specify an S3 path (see details below) from which Rockset will ingest data. Rockset will continuously monitor for updates and ingest any new objects. Deleting an object from the source bucket will not remove that data from Rockset.

You can create a collection from an S3 source in the Collections tab of the Rockset Console.

These operations can also be performed using any of the Rockset client libraries, the Rockset API, or the Rockset CLI.

Specifying S3 Path

You can ingest all data in a bucket by specifying just the bucket name or restrict to a subset of the objects in the bucket by specifying an additional prefix or pattern.

By default, if the S3 path has no special characters, a prefix match is performed. However, if any of the following special characters are used in the S3 path, it triggers pattern matching semantics.

- ? matches one character

- * matches zero or more characters

- ** matches zero or more directories in a path

- {myparam} matches a single path parameter and extracts its value into a field in _meta.s3 named myparam

- {myparam:<regex>} matches the specified regular expression and extracts its value into a field in _meta.s3 named myparam

The following examples explain exactly how the patterns can be used:

- s3://bucket/xyz - uses prefix match, matches all files that have a prefix of xyz.

- s3://bucket/xyz/t?st.csv - matches xyz/test.csv but also xyz/tast.csv or xyz/txst.csv in the bucket.

- s3://bucket/xyz/*.csv - matches all .csv files in the xyz directory in the bucket.

- s3://bucket/xyz/**/test.json - matches all test.json files in any subdirectory under the xyz path in the bucket.

- s3://bucket/05/2018/**/*.json - matches all .json files underneath any subdirectory under the /05/2018/ path in the bucket.

- s3://bucket/{month}/{year}/**/*.json - matches the pattern according to the above rules. In addition, it extracts the value of the matched path segments {month}, {year} as fields of the form _meta.s3.month and _meta.s3.year associated with each document.

- s3://bucket/{timestamp:\d+}/**/*.json - matches the pattern according to the above rules. In addition, it extracts the timestamp path segment value if it matches the regular expression \d+ and places the value extracted into _meta.s3.timestamp associated with each document.
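To preview which object keys a path pattern would select before creating a collection, the rules above can be approximated locally. The Python sketch below is an approximation of the documented semantics, not Rockset's implementation. It makes several assumptions: patterns are given without the s3://bucket/ prefix, ? and * do not match across a / separator, and the regex inside {name:regex} contains no braces. The names `pattern_to_regex` and `match_key` are hypothetical.

```python
import re
from typing import Dict, Optional

SPECIAL_CHARS = set("?*{")

_TOKEN = re.compile(
    r"\*\*/"                              # '**/': zero or more directories
    r"|\*\*"                              # bare '**': anything, including '/'
    r"|\*"                                # '*': zero or more characters
    r"|\?"                                # '?': exactly one character
    r"|\{([A-Za-z_]\w*)(?::([^{}]+))?\}"  # '{name}' or '{name:regex}'
)


def pattern_to_regex(pattern: str) -> "re.Pattern[str]":
    """Translate a key pattern into a compiled regular expression."""
    out = []
    pos = 0
    for m in _TOKEN.finditer(pattern):
        out.append(re.escape(pattern[pos:m.start()]))  # literal text between tokens
        token = m.group(0)
        if token == "**/":
            out.append(r"(?:[^/]+/)*")
        elif token == "**":
            out.append(r".*")
        elif token == "*":
            out.append(r"[^/]*")
        elif token == "?":
            out.append(r"[^/]")
        else:
            name, regex = m.group(1), m.group(2) or r"[^/]+"
            out.append(f"(?P<{name}>{regex})")
        pos = m.end()
    out.append(re.escape(pattern[pos:]))
    return re.compile("".join(out))


def match_key(pattern: str, key: str) -> Optional[Dict[str, str]]:
    """Return extracted path parameters if the key matches, else None."""
    if not SPECIAL_CHARS & set(pattern):
        # No special characters: plain prefix match.
        return {} if key.startswith(pattern) else None
    m = pattern_to_regex(pattern).fullmatch(key)
    return m.groupdict() if m else None


if __name__ == "__main__":
    print(match_key("{month}/{year}/**/*.json", "05/2018/logs/a.json"))
```

A match with no path parameters returns an empty dictionary, so compare the result against None rather than testing its truthiness.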

Best Practices

- While Rockset doesn't enforce an upper bound on object sizes, Rockset recommends that objects in your S3 bucket stay between 5MiB and 10GiB

- Large objects (>10GiB) can't take advantage of Rockset's parallel processing mechanism and will result in slow ingestion. Splitting large objects into a series of smaller ones will yield higher throughput and faster recovery from any intermittent issues

- As a rule of thumb, if you are going to split large objects, we recommend aiming for ~1GiB in size. A readily available tool for splitting line-oriented data formats (like JSON or CSV) on all Unix systems is split

- The same applies to grouping multiple large files into a single archive (like zip or tar). Rockset recommends uploading the large files to an S3 bucket without using any archiving tool so that you can take advantage of the higher throughput

- Too many small files (<5MiB) will result in a lot of GET & PUT operations in S3, increasing the cost charged by AWS for your S3 usage

- If you are using Parquet files, Rockset recommends they do not exceed 3 GiB, since larger files have been shown to cause issues that might result in a complete failure of the bulk ingest operation
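Splitting a large line-oriented export, such as the newline-separated JSON produced by dump-segment, can also be scripted. The Python sketch below is a simple alternative to the Unix split tool, under the assumption that each line is one complete record. The function `split_by_lines` and the `.partNNNN` naming scheme are hypothetical, and the default target of 1 GiB follows the rule of thumb above.

```python
import os
from typing import List


def split_by_lines(src_path: str, out_dir: str,
                   max_bytes: int = 1024 ** 3) -> List[str]:
    """Split a line-oriented file into chunks of about max_bytes without breaking lines."""
    os.makedirs(out_dir, exist_ok=True)
    base = os.path.basename(src_path)
    chunk_paths: List[str] = []
    out = None
    written = 0
    try:
        with open(src_path, "rb") as src:
            for line in src:
                # Start a new chunk when this line would push the current one past the limit.
                # A single line longer than max_bytes still gets a chunk of its own.
                if out is None or (written > 0 and written + len(line) > max_bytes):
                    if out is not None:
                        out.close()
                    path = os.path.join(out_dir, f"{base}.part{len(chunk_paths) + 1:04d}")
                    out = open(path, "wb")
                    chunk_paths.append(path)
                    written = 0
                out.write(line)
                written += len(line)
    finally:
        if out is not None:
            out.close()
    return chunk_paths


if __name__ == "__main__":
    import sys
    if len(sys.argv) == 3:
        for chunk in split_by_lines(sys.argv[1], sys.argv[2]):
            print(chunk)
```

Each resulting chunk is a valid line-oriented file on its own, so the chunks can be uploaded to the bucket as separate objects.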
