Can I Do SQL-Style Joins in Elasticsearch?

September 11, 2023

Elasticsearch is an open-source, distributed JSON-based search and analytics engine built using Apache Lucene with the purpose of providing fast real-time search functionality. It is a NoSQL data store that is document-oriented, scalable, and schemaless by default. Elasticsearch is designed to work at scale with large data sets. As a search engine, it provides fast indexing and search capabilities that can be horizontally scaled across multiple nodes.

Shameless plug: Rockset is a real-time indexing database in the cloud. It automatically builds indexes that are optimized not just for search but also aggregations and joins, making it fast and easy for your applications to query data, regardless of where it comes from and what format it is in. But this post is about highlighting some workarounds, in case you really want to do SQL-style joins in Elasticsearch.

Why Do Data Relationships Matter?

We live in a highly connected world where handling data relationships is important. Relational databases are good at handling relationships, but with constantly changing business requirements, the fixed schema of these databases results in scalability and performance issues. The use of NoSQL data stores is becoming increasingly popular due to their ability to tackle a number of challenges associated with the traditional data handling approaches.

Enterprises are continually dealing with complex data structures where aggregations, joins, and filtering capabilities are required to analyze the data. With the explosion of unstructured data, there are a growing number of use cases requiring the joining of data from different sources for data analytics purposes.

While joins are primarily a SQL concept, they are equally important in the NoSQL world as well. SQL-style joins are not supported in Elasticsearch as first-class citizens. This article will discuss how to define relationships in Elasticsearch using various techniques such as denormalizing, application-side joins, nested documents, and parent-child relationships. It will also explore the use cases and challenges associated with each approach.

How to Deal with Relationships in Elasticsearch

Because Elasticsearch is not a relational database, joins do not exist as a native functionality like in an SQL database. It focuses more on search efficiency as opposed to storage efficiency. The stored data is practically flattened out or denormalized to drive fast search use cases.

There are multiple ways to define relationships in Elasticsearch. Based on your use case, you can select one of the below techniques in Elasticsearch to model your data:

- One-to-one relationships: Object mapping

- One-to-many relationships: Nested documents and the parent-child model

- Many-to-many relationships: Denormalizing and application-side joins

One-to-one object mappings are simple and will not be discussed much here. The remainder of this blog will cover the other two scenarios in more detail.


Managing Your Data Model in Elasticsearch

There are four common approaches to managing data in Elasticsearch:

- Denormalization

- Application-side joins

- Nested objects

- Parent-child relationships

Denormalization

Denormalization provides the best query performance in Elasticsearch, since joining data sets at query time isn’t necessary. Each document is independent and contains all the required data, thus eliminating the need for expensive join operations.

With denormalization, the data is stored in a flattened structure at the time of indexing. This increases the document size and results in the storage of duplicate data in each document, but disk space is relatively inexpensive, so the extra storage is usually little cause for concern.
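As a rough illustration of flattening at index time, the sketch below copies customer fields into every order before the orders are indexed. The `customers` and `orders` data, the field names, and the `denormalize` helper are all hypothetical and exist only for this example.

```python
# Hypothetical relational rows; table and field names are illustrative only.
customers = {
    1: {"name": "Ada", "city": "London"},
    2: {"name": "Grace", "city": "New York"},
}
orders = [
    {"order_id": 100, "customer_id": 1, "total": 25.0},
    {"order_id": 101, "customer_id": 1, "total": 40.0},
    {"order_id": 102, "customer_id": 2, "total": 15.5},
]


def denormalize(orders, customers):
    """Copy each order's customer fields into the order so it stands alone."""
    docs = []
    for order in orders:
        customer = customers[order["customer_id"]]
        docs.append({
            **order,
            "customer_name": customer["name"],
            "customer_city": customer["city"],
        })
    return docs


# Each resulting document can be indexed and searched without any join.
flat_docs = denormalize(orders, customers)
```

Note that the customer named "Ada" now appears in two documents, which is the duplication the paragraph above describes.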

Use Cases for Denormalization

While working with distributed systems, having to join data sets across the network can introduce significant latencies. You can avoid these expensive join operations by denormalizing data. Many-to-many relationships can be handled by data flattening.

Challenges with Data Denormalization

- Duplication of data into flattened documents requires additional storage space.

- Managing data in a flattened structure incurs additional overhead for data sets that are relational in nature.

- From a programming perspective, denormalization requires additional engineering overhead. You will need to write additional code to flatten the data stored in multiple relational tables and map it to a single object in Elasticsearch.

- Denormalizing data is not a good idea if your data changes frequently. A change to any shared piece of data requires updating every document that contains a copy of it.

- The indexing operation takes longer with flattened data sets since more data is being indexed. If your data changes frequently, your indexing rate will also be higher, which can cause cluster performance issues.
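The update cost mentioned in the list above can be sketched as follows. The documents and the `customer_city` field are hypothetical; the request body follows the general shape accepted by the `_update_by_query` API (a query plus a Painless script), but treat it as an assumption to check against the documentation for your Elasticsearch version.

```python
def update_by_query_body(customer_id, new_city):
    """Build a body in the shape used by _update_by_query (query + script)."""
    return {
        "query": {"term": {"customer_id": customer_id}},
        "script": {
            "lang": "painless",
            "source": "ctx._source.customer_city = params.city",
            "params": {"city": new_city},
        },
    }


def count_affected(docs, customer_id):
    """Count how many denormalized documents carry a copy of this customer."""
    return sum(1 for doc in docs if doc["customer_id"] == customer_id)


# Hypothetical denormalized order documents.
flat_docs = [
    {"order_id": 100, "customer_id": 1, "customer_city": "London"},
    {"order_id": 101, "customer_id": 1, "customer_city": "London"},
    {"order_id": 102, "customer_id": 2, "customer_city": "New York"},
]

body = update_by_query_body(1, "Paris")
affected = count_affected(flat_docs, 1)  # one customer change touches 2 docs
```

A single change to one customer rewrites every order that embeds that customer, so the write amplification grows with the number of copies.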

Application-Side Joins

Application-side joins can be used when there is a need to maintain the relationship between documents. The data is stored in separate indices, and join operations can be performed from the application side during query time. This does, however, entail running additional queries at search time from your application to join documents.
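A minimal sketch of this two-step pattern is shown below. The `INDICES` dictionary and the `search` helper are in-memory stand-ins invented for this example; in a real application each call to `search` would be a separate request to Elasticsearch, with the second one typically expressed as a `terms` query on the collected IDs.

```python
# In-memory stand-ins for two separate indices (hypothetical data).
INDICES = {
    "customers": [
        {"id": 1, "name": "Ada", "city": "London"},
        {"id": 2, "name": "Grace", "city": "New York"},
    ],
    "orders": [
        {"order_id": 100, "customer_id": 1, "total": 25.0},
        {"order_id": 101, "customer_id": 2, "total": 15.5},
        {"order_id": 102, "customer_id": 1, "total": 40.0},
    ],
}


def search(index, field, values):
    """Hypothetical helper: return documents whose field is one of values."""
    wanted = set(values)
    return [doc for doc in INDICES[index] if doc[field] in wanted]


def orders_for_city(city):
    # Query 1: find the customers that match the filter.
    customers = search("customers", "city", [city])
    by_id = {customer["id"]: customer for customer in customers}
    # Query 2: fetch the orders that reference those customer IDs.
    orders = search("orders", "customer_id", list(by_id))
    # The join itself happens in application code.
    return [
        {**order, "customer_name": by_id[order["customer_id"]]["name"]}
        for order in orders
    ]


london_orders = orders_for_city("London")
```

The data stays normalized, at the cost of one extra round trip per joined index.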

Use Cases for Application-Side Joins

Application-side joins ensure that data remains normalized. Modifications are done in one place, and there is no need to constantly update your documents. Data redundancy is minimized with this approach. This method works well when there are fewer documents and data changes are less frequent.

Challenges with Application-Side Joins

- The application needs to execute multiple queries to join documents at search time. If the data set has many consumers, you will need to execute the same set of queries multiple times, which can lead to performance issues. This approach, therefore, does not leverage the real power of Elasticsearch.

- This approach results in complexity at the implementation level. It requires writing additional code at the application level to implement join operations to establish a relationship among documents.

Nested Objects

The nested approach can be used if you need to maintain the relationship of each object in the array. Nested documents are internally stored as separate Lucene documents, and the join between them is done at index time: the root document and its nested documents are stored together in a single Lucene block. From the application perspective, the block looks like a single Elasticsearch document. Querying is therefore relatively fast, since all the data resides in the same block. Nested documents deal with one-to-many relationships.
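For illustration, the sketch below builds a mapping that declares a `nested` field and a `nested` query against it, as plain Python dictionaries that could be serialized and sent as request bodies. The `user` field and its `first` and `last` subfields are hypothetical names chosen for the example.

```python
import json

# Mapping that marks "user" as a nested field (field names are hypothetical).
nested_mapping = {
    "mappings": {
        "properties": {
            "user": {
                "type": "nested",
                "properties": {
                    "first": {"type": "keyword"},
                    "last": {"type": "keyword"},
                },
            }
        }
    }
}

# Nested query: both conditions must hold within the same nested object.
nested_query = {
    "query": {
        "nested": {
            "path": "user",
            "query": {
                "bool": {
                    "must": [
                        {"term": {"user.first": "Alice"}},
                        {"term": {"user.last": "White"}},
                    ]
                }
            },
        }
    }
}

# Serialize to confirm both bodies are valid JSON.
mapping_json = json.dumps(nested_mapping)
query_json = json.dumps(nested_query)
```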

Use Cases for Nested Documents

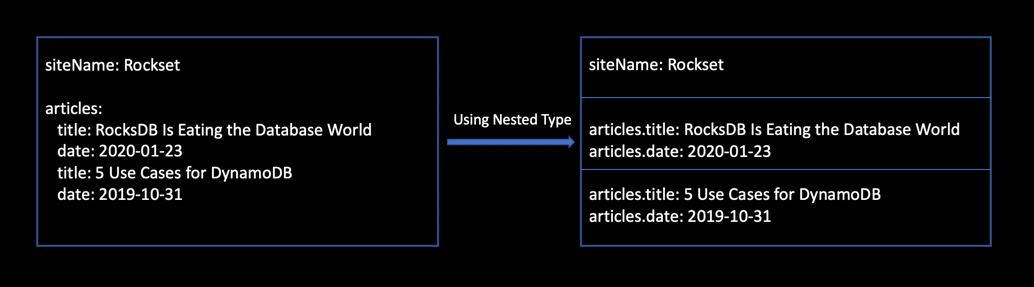

Creating nested documents is preferred when your documents contain arrays of objects. Figure 1 shows how the nested type in Elasticsearch allows arrays of objects to be internally indexed as separate Lucene documents. Lucene has no concept of inner objects, so without the nested type Elasticsearch flattens an array of objects into multi-valued fields, losing the association between values that belonged to the same object.

One advantage of nested queries is that they do not match across objects, so unexpected results are avoided. A nested query is aware of object boundaries, which makes searches more accurate.
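The difference between flattened and boundary-aware matching can be simulated in a few lines. This is a toy model of the behavior, not Elasticsearch code; the helper names and the sample document are made up for the example.

```python
def flatten_object_array(doc, field):
    """Mimic how an array of plain objects becomes multi-valued fields."""
    flat = {}
    for obj in doc[field]:
        for key, value in obj.items():
            flat.setdefault(f"{field}.{key}", []).append(value)
    return flat


def flat_match(flat, field, conditions):
    """Check each condition independently against the flattened values."""
    return all(
        value in flat.get(f"{field}.{key}", [])
        for key, value in conditions.items()
    )


def nested_match(doc, field, conditions):
    """Require all conditions to hold within one object of the array."""
    return any(
        all(obj.get(key) == value for key, value in conditions.items())
        for obj in doc[field]
    )


doc = {
    "user": [
        {"first": "John", "last": "Smith"},
        {"first": "Alice", "last": "White"},
    ]
}
flat = flatten_object_array(doc, "user")

# No single user is named "Alice Smith", yet the flattened form matches.
cross_object = {"first": "Alice", "last": "Smith"}
flat_result = flat_match(flat, "user", cross_object)
nested_result = nested_match(doc, "user", cross_object)
```

Here `flat_result` is `True` and `nested_result` is `False`, which is the cross-object match that nested queries avoid.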

Challenges with Nested Objects

- The root object and its nested objects must be completely reindexed in order to add/update/delete a nested object. In other words, a child record update will result in reindexing the entire document.

- Nested documents cannot be accessed directly. They can only be accessed through their related root document.

- Search requests return the entire document instead of returning only the nested documents that match the search query.

- If your data set changes frequently, using nested documents will result in a large number of updates.

Parent-Child Relationships

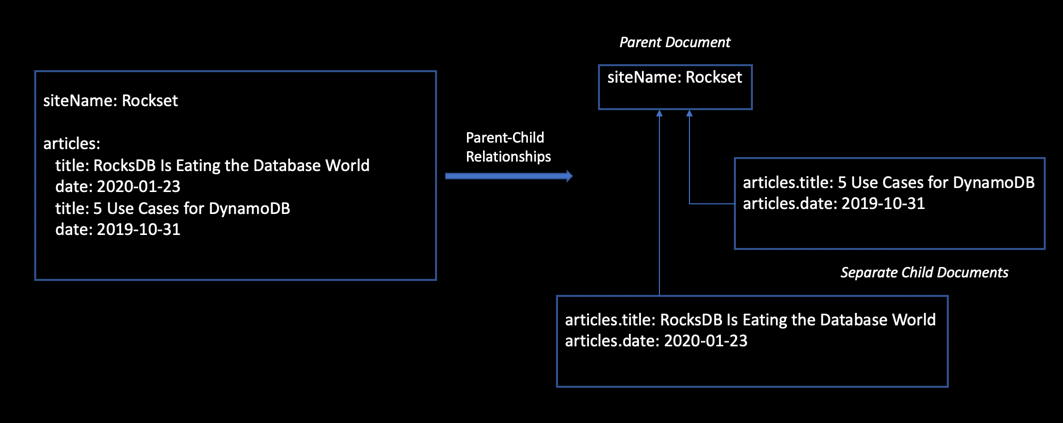

Parent-child relationships leverage the join datatype in order to completely separate objects with relationships into individual documents—parent and child. This enables you to store documents in a relational structure in separate Elasticsearch documents that can be updated separately.

Parent-child relationships are beneficial when the documents need to be updated often. This approach is therefore ideal for scenarios when the data changes frequently. Basically, you separate out the base document into multiple documents containing parent and child. This allows both the parent and child documents to be indexed/updated/deleted independently of one another.
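As a sketch of what this looks like with the join datatype, the dictionaries below describe a mapping with one parent-to-child relation, a parent document, and a child document. The index name `blog`, the field name `relation`, and the relation names `post` and `comment` are assumptions made for the example; `child_index_request` is a hypothetical helper that only describes a request and does not call any client library.

```python
import json

# Mapping with a join field: "post" is the parent, "comment" is the child.
join_mapping = {
    "mappings": {
        "properties": {
            "relation": {
                "type": "join",
                "relations": {"post": "comment"},
            }
        }
    }
}

# Parent document: the join field only names its side of the relation.
parent_doc = {"title": "Joins in Elasticsearch", "relation": {"name": "post"}}


def child_index_request(index, doc_id, parent_id, text):
    """Describe an index request for a child document (hypothetical helper)."""
    return {
        "method": "PUT",
        "path": f"/{index}/_doc/{doc_id}",
        # The parent ID is used as the routing value for the child.
        "params": {"routing": parent_id},
        "body": {
            "text": text,
            "relation": {"name": "comment", "parent": parent_id},
        },
    }


request = child_index_request("blog", "c1", "p1", "Nice post")
request_json = json.dumps(request)
```

Because the child is a separate document, it can be indexed, updated, or deleted without rewriting the parent.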

Searching in Parent and Child Documents

To optimize Elasticsearch performance during indexing and searching, the general recommendation is to ensure that the document size is not large. You can leverage the parent-child model to break down your document into separate documents.

However, there are some challenges with implementing this. Parent and child documents need to be routed to the same shard so that joining them during query time will be in-memory and efficient. The parent ID needs to be used as the routing value for the child document. In Elasticsearch versions before 6.0, the _parent field gave Elasticsearch the ID and type of the parent document, which let it route child documents to the same shard as the parent automatically. With the join datatype that replaced it, you supply the parent ID as the routing value yourself when indexing a child document.
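The effect of routing can be illustrated with a simplified model in which the shard is chosen by hashing the routing value and taking it modulo the number of primary shards. The hash below (`zlib.crc32`) is a stand-in chosen so the example is deterministic; it is not the hash function Elasticsearch uses, and the shard count and document IDs are hypothetical.

```python
import zlib

NUM_SHARDS = 5  # hypothetical number of primary shards


def shard_for(routing_value, num_shards=NUM_SHARDS):
    """Simplified shard selection: hash(routing value) mod shard count."""
    return zlib.crc32(routing_value.encode("utf-8")) % num_shards


parent_id = "post-1"
child_ids = ["comment-1", "comment-2", "comment-3"]

# By default a document is routed by its own ID.
parent_shard = shard_for(parent_id)

# Children routed by the parent ID always land on the parent's shard.
routed_shards = [shard_for(parent_id) for _ in child_ids]

# Children routed by their own IDs may land anywhere.
unrouted_shards = [shard_for(child_id) for child_id in child_ids]
```

Every entry in `routed_shards` equals `parent_shard`, while `unrouted_shards` carries no such guarantee.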

Elasticsearch allows you to search within complex JSON objects. This, however, requires a thorough understanding of the data structure to query it efficiently. The parent-child model provides dedicated queries and options to simplify searching:

has_child query

Returns parent documents that have child documents matching the query.

has_parent query

Returns child documents whose parent documents match the query.

inner_hits

An option on has_child and has_parent queries that returns the child (or parent) documents that caused each hit to match.
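The request bodies for these searches can be sketched as plain dictionaries. The relation names `post` and `comment` and the fields `text` and `title` are hypothetical; the two builder functions are helpers written for this example rather than part of any client library.

```python
import json


def has_child_query(child_type, child_query, with_inner_hits=False):
    """Build a has_child body: find parents whose children match."""
    clause = {"type": child_type, "query": child_query}
    if with_inner_hits:
        # An empty inner_hits object asks for the matching children too.
        clause["inner_hits"] = {}
    return {"query": {"has_child": clause}}


def has_parent_query(parent_type, parent_query):
    """Build a has_parent body: find children whose parent matches."""
    return {
        "query": {
            "has_parent": {"parent_type": parent_type, "query": parent_query}
        }
    }


# Posts that have at least one comment mentioning "joins", with those comments.
posts_with_matching_comments = has_child_query(
    "comment", {"match": {"text": "joins"}}, with_inner_hits=True
)

# Comments that belong to posts whose title mentions "elasticsearch".
comments_on_matching_posts = has_parent_query(
    "post", {"match": {"title": "elasticsearch"}}
)

bodies_json = json.dumps(
    [posts_with_matching_comments, comments_on_matching_posts]
)
```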

Figure 2 shows how you can use the parent-child model to represent one-to-many relationships. The child documents can be added, removed, or updated without impacting the parent. The same holds true for the parent document, which can be updated without reindexing the children.
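To make the contrast with nested objects concrete, the toy model below counts how many times each document is written under the two approaches. `TinyIndex` is an in-memory class invented for this example and only imitates the idea of a per-document version counter.

```python
class TinyIndex:
    """Toy in-memory index that counts writes per document ID."""

    def __init__(self):
        self.docs = {}
        self.versions = {}

    def index(self, doc_id, body):
        self.docs[doc_id] = body
        self.versions[doc_id] = self.versions.get(doc_id, 0) + 1


# Nested model: comments live inside the post, so adding one rewrites the post.
nested = TinyIndex()
nested.index("post-1", {"title": "Joins", "comments": [{"text": "first"}]})
post = nested.docs["post-1"]
nested.index(
    "post-1",
    {**post, "comments": post["comments"] + [{"text": "second"}]},
)

# Parent-child model: each comment is its own document pointing at the parent.
parent_child = TinyIndex()
parent_child.index("post-1", {"title": "Joins", "relation": {"name": "post"}})
parent_child.index(
    "comment-1",
    {"text": "first", "relation": {"name": "comment", "parent": "post-1"}},
)
parent_child.index(
    "comment-2",
    {"text": "second", "relation": {"name": "comment", "parent": "post-1"}},
)
```

After both comments are added, the nested post has been written twice, while the parent in the parent-child model has been written only once.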

Challenges with Parent-Child Relationships

- Queries are more expensive and memory-intensive because of the join operation.

- There is an overhead to parent-child constructs, since they are separate documents that must be joined at query time.

- You need to ensure that the parent and all its children exist on the same shard.

- Storing documents with parent-child relationships involves implementation complexity.

Conclusion

Choosing the right Elasticsearch data modeling design is critical for application performance and maintainability. When designing your data model in Elasticsearch, it is important to note the various pros and cons of each of the four modeling methods discussed herein.

In this article, we explored how nested objects and parent-child relationships enable SQL-like join operations in Elasticsearch. You can also implement custom logic in your application to handle relationships with application-side joins. For use cases in which you need to join multiple data sets in Elasticsearch, you can ingest both data sets into the same Elasticsearch index to enable performant querying.

Out of the box, Elasticsearch does not have joins as in an SQL database. While there are potential workarounds for establishing relationships in your documents, it is important to be aware of the challenges each of these approaches presents.

Using Native SQL Joins with Rockset

When there is a need to combine multiple data sets for real-time analytics, a database that provides native SQL joins can handle this use case better. Like Elasticsearch, Rockset is used as an indexing layer on data from databases, event streams, and data lakes, permitting schemaless ingest from these sources. Unlike Elasticsearch, Rockset provides the ability to query with full-featured SQL, including joins, giving you greater flexibility in how you can use your data.