Joining Data in DynamoDB and S3 for Live, Ad-Hoc Analysis

January 10, 2020

Performing ad-hoc analysis is a daily part of life for most data scientists and analysts on operations teams.

They are often held back by not having direct and immediate access to their data because the data might not be in a data warehouse or it might be stored across multiple systems in different formats.

This typically means that a data engineer will need to help develop pipelines and tables that can be accessed in order for the analysts to do their work.

However, even here there is still a problem.

Data engineers are usually backed-up with the amount of work they need to do and often data for ad-hoc analysis might not be a priority. This leads to analysts and data scientists either doing nothing or finagling their own data pipeline. This takes their time away from what they should be focused on.

Even if data engineers could help develop pipelines, the time required for new data to get through the pipeline could prevent operations analysts from analyzing data as it happens.

This was, and honestly is still a major problem in large companies.

Getting access to data.

Luckily there are lots of great tools today to fix this! To demonstrate we will be using a free online data set that comes from Citi Bike in New York City, as well as S3, DynamoDB and Rockset, a real-time cloud data store.

Citi Bike Data, S3 and DynamoDB

To set up this data we will be using the CSV data from Citi Bike ride data as well as the station data that is here.

We will be loading these data sets into two different AWS services. Specifically we will be using DynamoDB and S3.

This will allow us to demonstrate the fact that sometimes it can be difficult to analyze data from both of these systems in the same query engine. In addition, the station data for DynamoDB is stored in JSON format which works well with DynamoDB. This is also because the station data is closer to live and seems to update every 30 seconds to 1 minute, whereas the CSV data for the actual bike rides is updated once a month. We will see how we can bring this near-real-time station data into our analysis without building out complicated data infrastructure.

Having these data sets in two different systems will also demonstrate where tools can come in handy. Rockset, for example, has the ability to easily join across different data sources such as DynamoDB and S3.

As a data scientist or analysts, this can make it easier to perform ad-hoc analysis without needing to have the data transformed and pulled into a data warehouse first.

That being said, let’s start looking into this Citi Bike data.

Loading Data Without a Data Pipeline

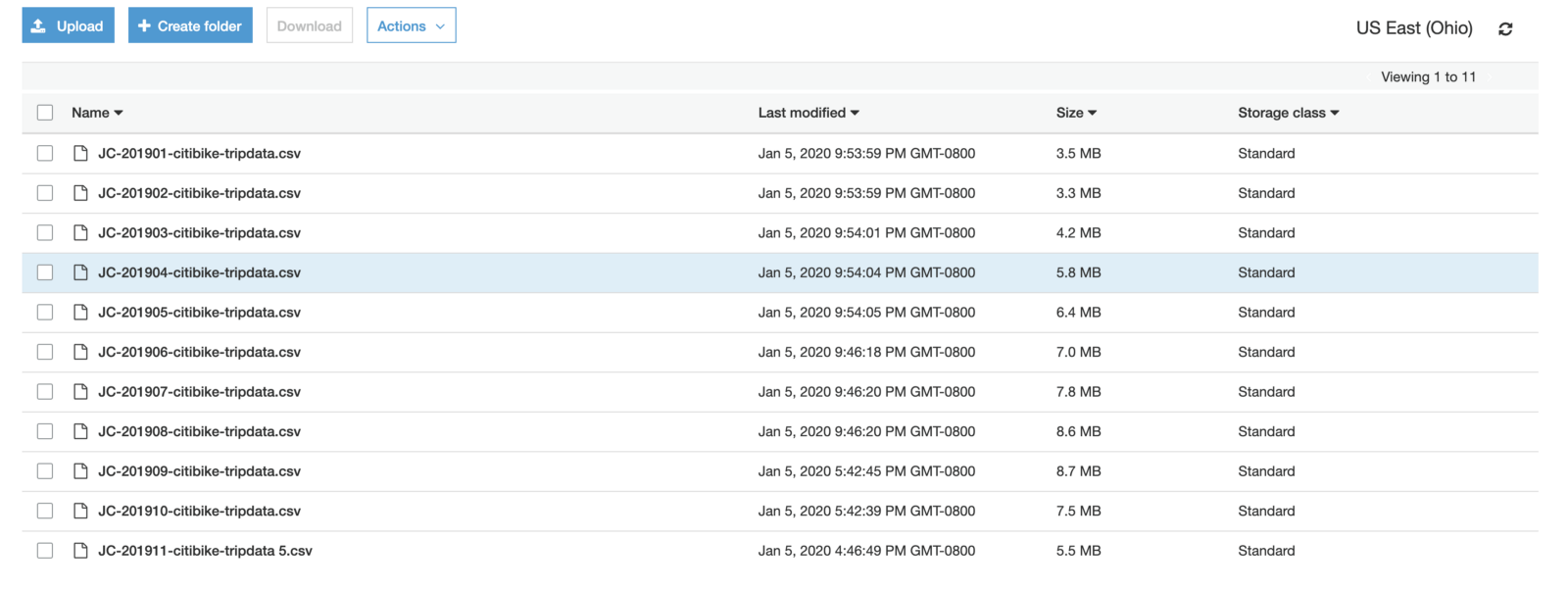

The ride data is stored in a monthly file as a CSV, which means we need to pull in each file in order to get the whole year.

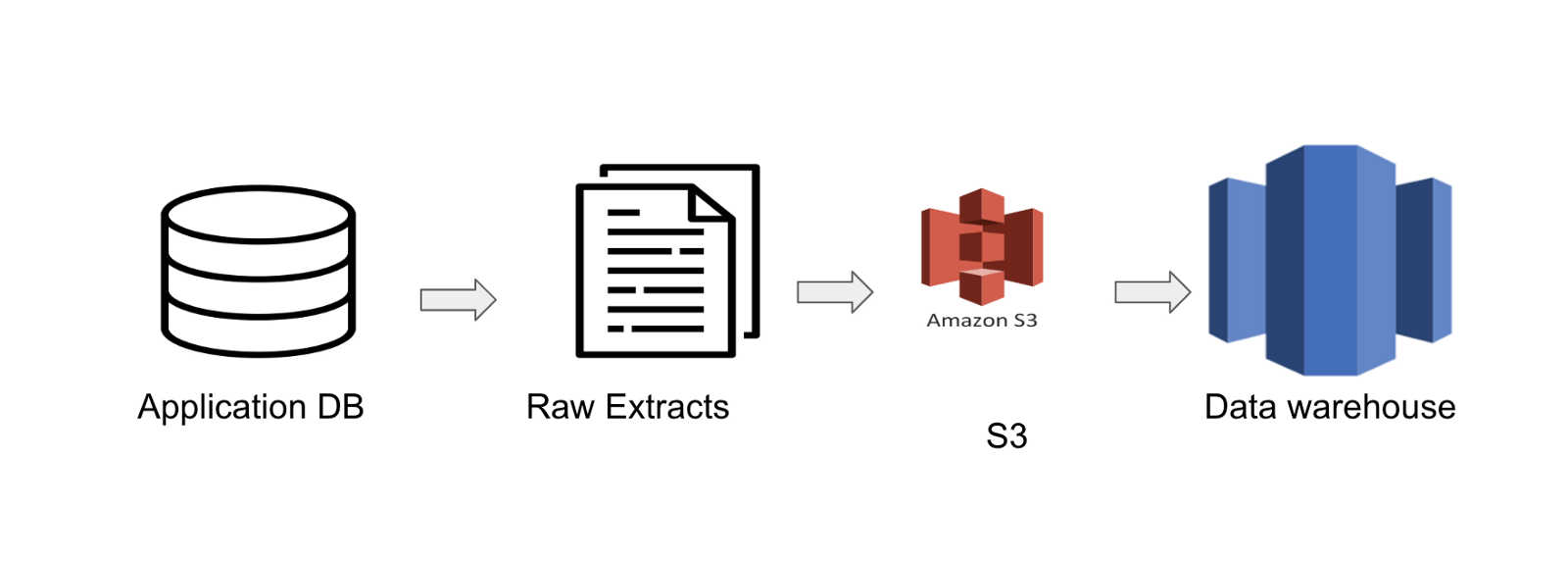

For those who are used to the typical data engineering process, you would need to set up a pipeline that automatically checks the S3 bucket for new data and then loads it into a data warehouse like Redshift.

The data would follow a similar path to the one laid out below.

This means you need a data engineer to set up a pipeline.

However, in this case I didn’t need to set up any sort of data warehouse. Instead, I just loaded the files into S3 and then Rockset treated it all as one table.

Even though there are 3 different files, Rockset treats each folder as its own table. Kind of similar to some other data storage systems that store their data in “partitions” that are just essentially folders.

Not only that, it didn’t freak out when you added a new column to the end. Instead, it just nulled out the rows that didn’t have said column. This is great because it allows for new columns to be added without a data engineer needing to update a pipeline.

Analyzing Citi Bike Data

Generally, a good way to start is just to simply plot data out to make sure it somewhat makes sense (just in case you have bad data).

We will start with the CSVs stored in S3, and we will graph out usage of the bikes month over month.

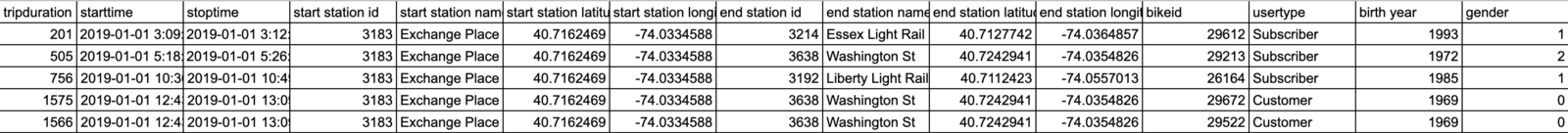

Ride Data Example:

To start off, we will just graph the ride data from September 2019 to November 2019. Below is all you will need for this query.

Embedded content: https://gist.github.com/bAcheron/2a8613be13653d25126d69e512552716

One thing you will notice is that I case the datetime back to a string. This is because Rockset stores datetime date more like an object.

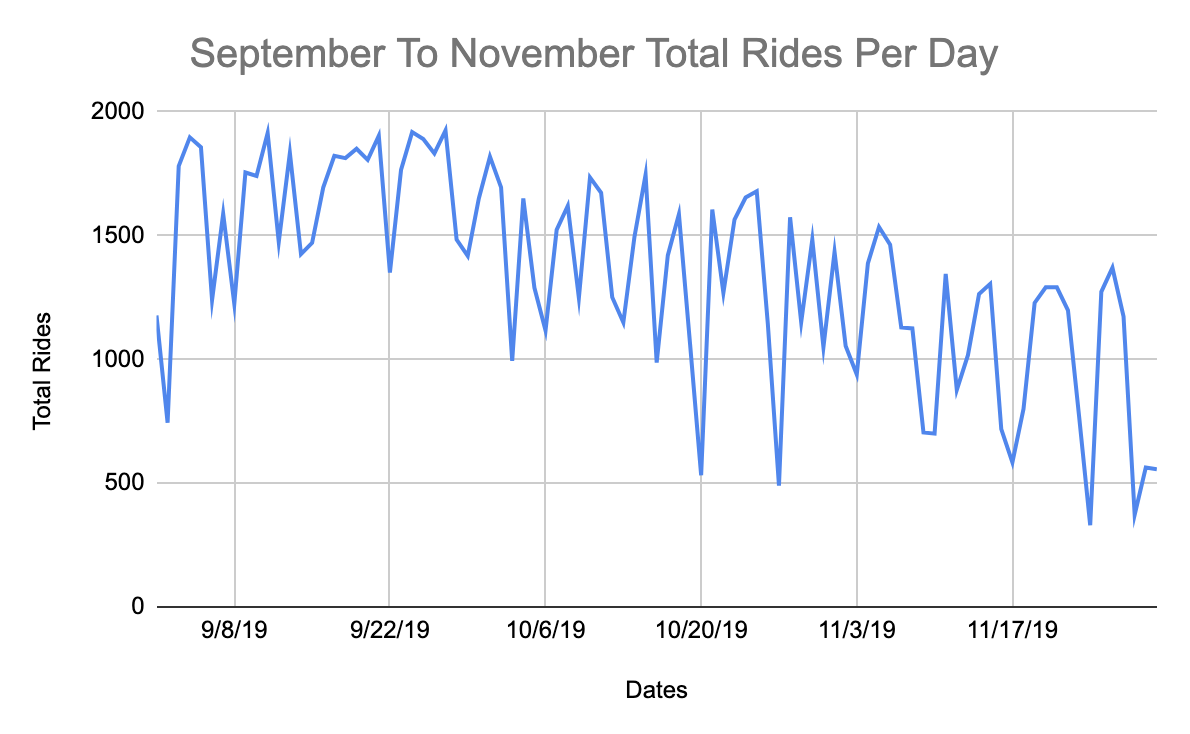

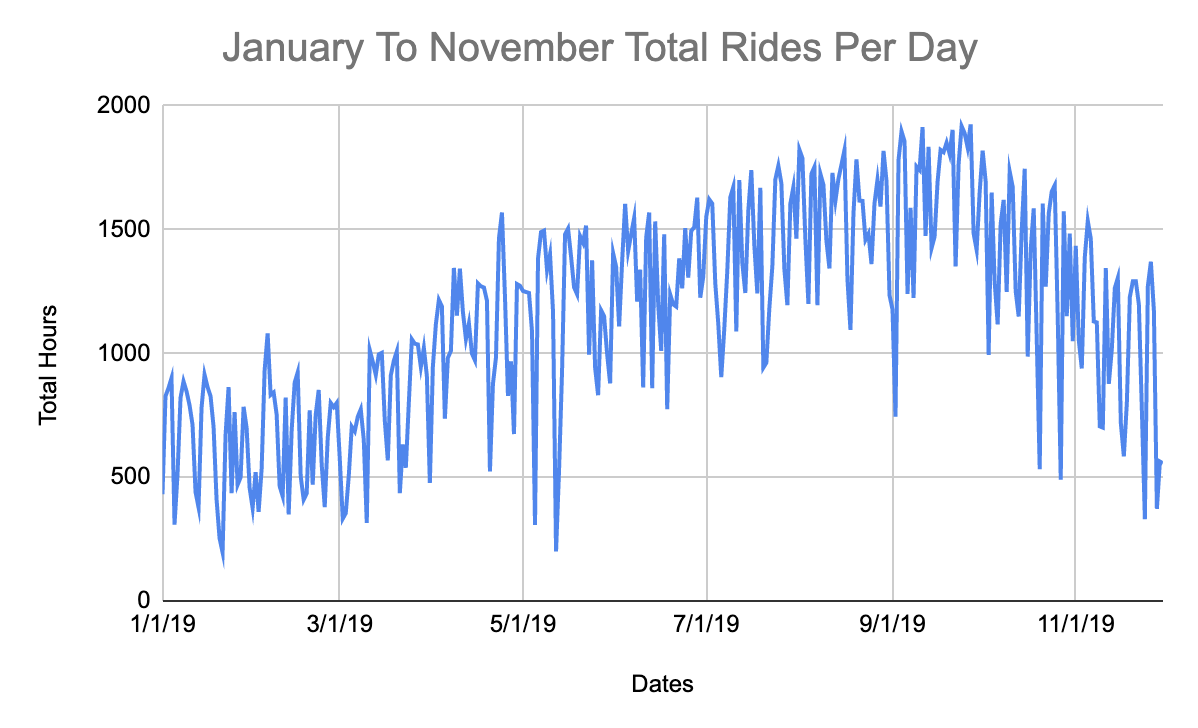

Taking that data I plotted it and you can see reasonable usage patterns. If we really wanted to dig into this we would probably look into what was driving the dips to see if there was some sort of pattern but for now we are just trying to see the general trend.

Let’s say you want to load more historical data because this data seems pretty consistent.

Again, no need to load more data into a data warehouse. You can just upload the data into S3 and it will automatically be picked up.

You can look at the graphs below, you will see the history looking further back.

From the perspective of an analyst or data scientist, this is great because I didn’t need a data engineer to create a pipeline to answer my question about the data trend.

Looking at the chart above, we can see a trend where fewer people seem to ride bikes in winter, spring and fall but it picks up for summer. This makes sense because I don’t foresee many people wanting to go out when it is raining in NYC.

All in all, this data passes the gut check and so we will look at it from a few more perspectives before joining the data.

What is the distribution of rides on an hourly basis?

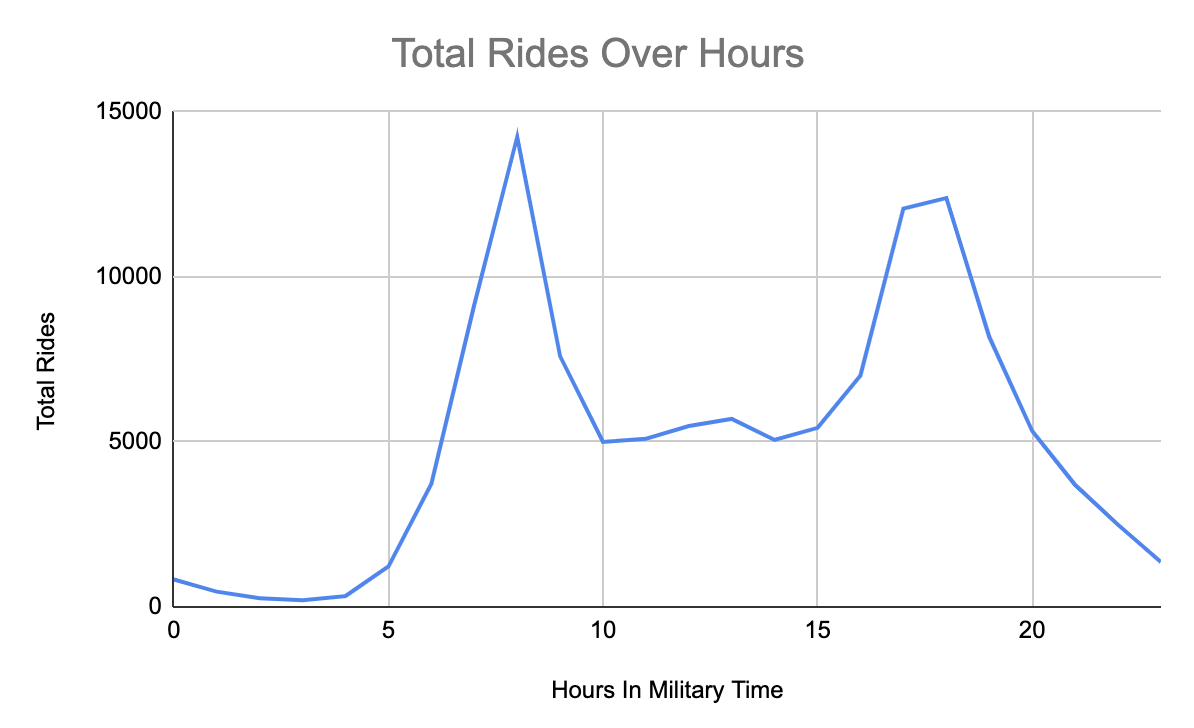

Our next question is asking what is the distribution of rides on an hourly basis.

To answer this question, we need to extract the hour from the start time. This requires the EXTRACT function in SQL. Using that hour you can then average it regardless of the specific date. Our goal is to see the distribution of bike rides.

We aren’t going to go through every step we took from a query perspective but you can look at the query and the chart below.

Embedded content: https://gist.github.com/bAcheron/d505989ce3e9bc756fcf58f8e760117b

As you can see there is clearly a trend of when people will ride bikes. Specifically there are surges in the morning and then again at night. This can be useful when it comes to knowing when it might be a good time to do maintenance or when bike racks are likely to run out.

But perhaps there are other patterns underlying this specific distribution.

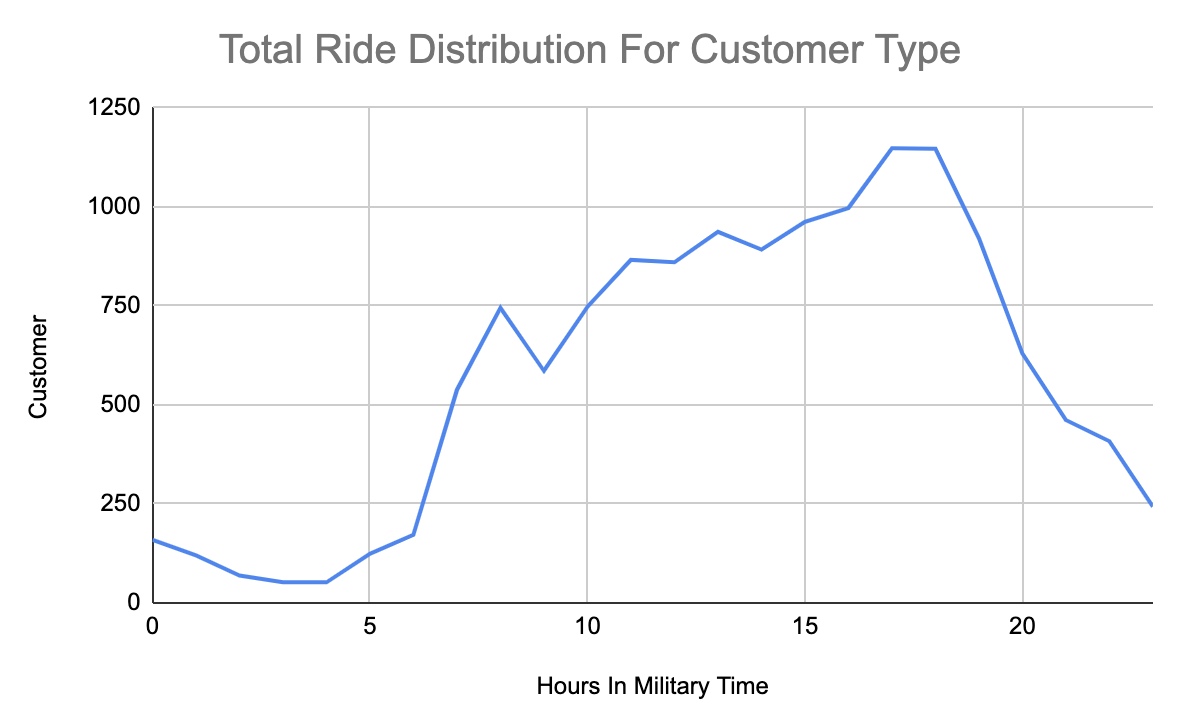

What time do different riders use bikes?

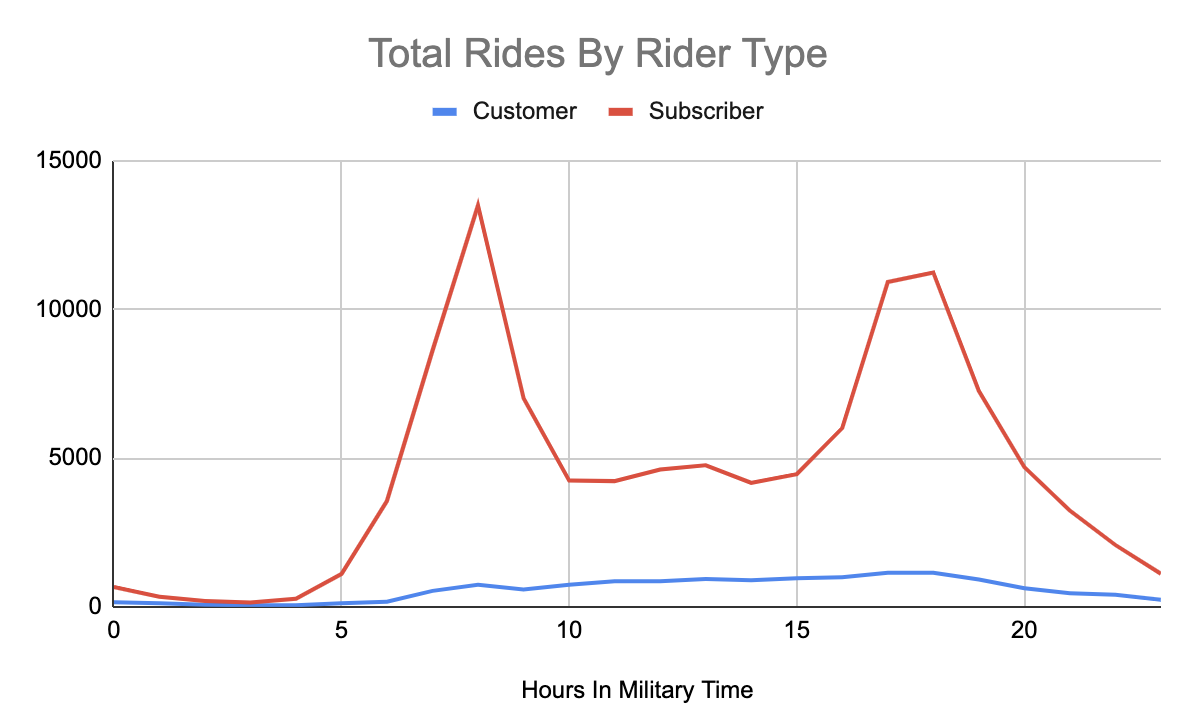

Continuing on this thought, we also wanted to see if there were specific trends per rider types. This data set has 2 rider types: 3-day customer passes and annual subscriptions.

So we kept the hour extract and added in the ride type field.

Looking below at the chart we can see that the trend for hours seems to be driven by the subscriber customer type.

However, if we examine the customer rider type we actually have a very different rider type. Instead of having two main peaks there is a slow rising peak throughout the day that peaks around 17:00 to 18:00 (5–6 PM).

It would be interesting to dig into the why here. Is it because people who purchase a 3-day pass are using it last minute, or perhaps they are using it from a specific area. Does this trend look constant day over day?

Joining Data Sets Across S3 and DynamoDB

Finally, let’s join in data from DynamoDB to get updates about the bike stations.

One reason we might want to do this is to figure out which stations have 0 bikes left frequently and also have a high amount of traffic. This could be limiting riders from being able to get a bike because when they go for a bike it is not there. This would negatively impact subscribers who might expect a bike to exist.

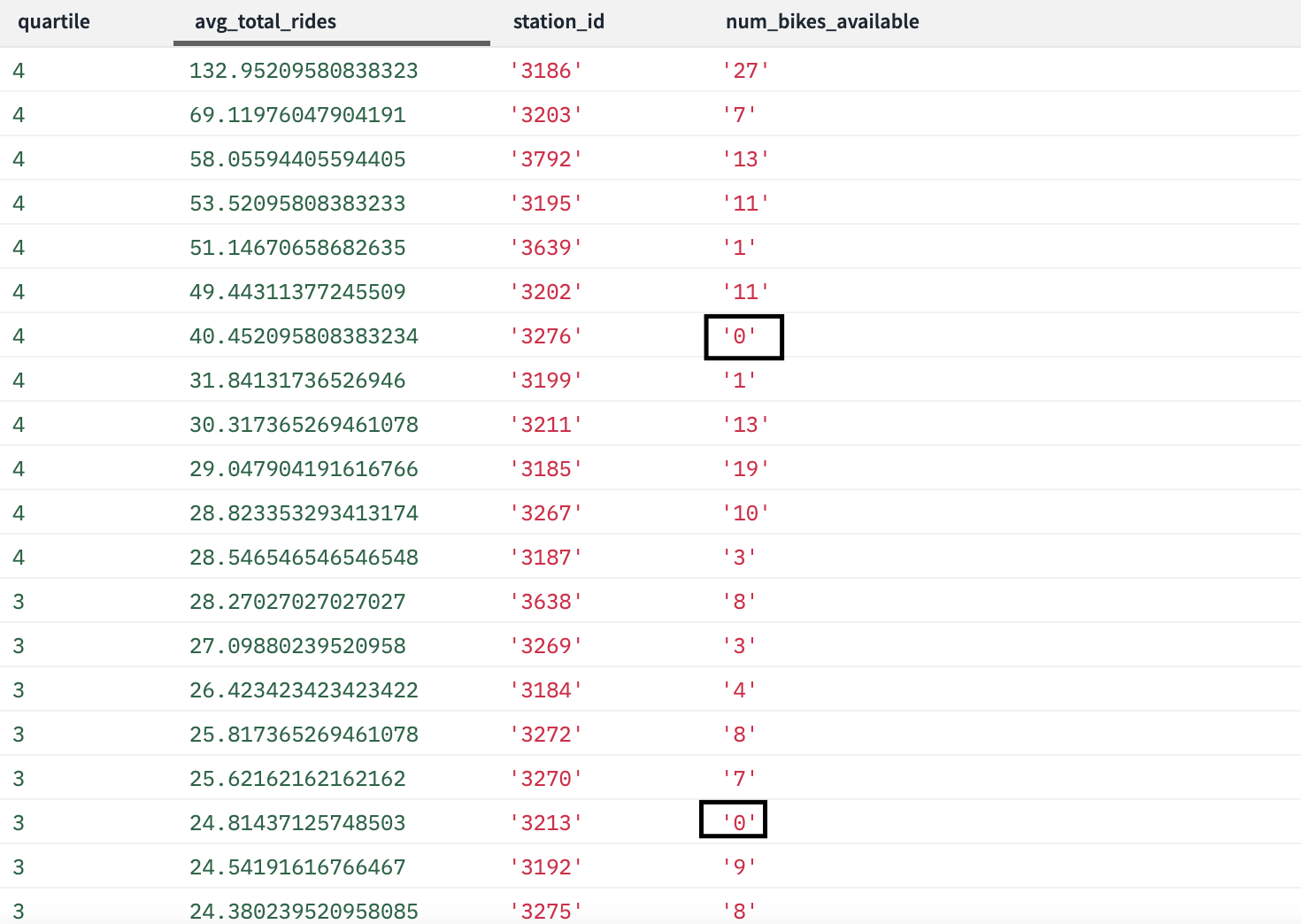

Below is a query that looks at the average rides per day per start station. We also added in a quartile just so we can look into the upper quartiles for average rides to see if there are any empty stations.

Embedded content: https://gist.github.com/bAcheron/28b1c572aaa2da31e43044a743e7b1f3

We listed out the output below and as you can see there are 2 stations currently empty that have high bike usage in comparison to the other stations. We would recommend tracking this over the course of a few weeks to see if this is a common occurrence. If it was, then Citi Bike might want to consider adding more stations or figuring out a way to reposition bikes to ensure customers always have rides.

As operations analysts, being able to track which high usage stations are low on bikes live can provide the ability to better coordinate teams that might be helping to redistribute bikes around town.

Rockset’s ability to read data from an application database such as DynamoDB live can provide direct access to the data without any form of data warehouse. This avoids waiting for a daily pipeline to populate data. Instead, you can just read this data live.

Live, Ad-Hoc Analysis for Better Operations

Whether you are a data scientist or data analyst, the need to wait on data engineers and software developers to create data pipelines can slow down ad-hoc analysis. Especially as more and more data storage systems are created it just further complicates the work of everyone who manages data.

Thus, being able to easily access, join and analyze data that isn’t in a traditional data warehouse can prove to be very helpful and they can lead quick insights like the one about empty bike stations.

Ben has spent his career focused on all forms of data. He has focused on developing algorithms to detect fraud, reduce patient readmission and redesign insurance provider policy to help reduce the overall cost of healthcare. He has also helped develop analytics for marketing and IT operations in order to optimize limited resources such as employees and budget. Ben privately consults on data science and engineering problems. He has experience both working hands-on with technical problems as well as helping leadership teams develop strategies to maximize their data.

Photo by ZACHARY STAINES on Unsplash