Case Study: StoryFire - Scaling a Social Video Platform on MongoDB and Rockset

July 14, 2020

StoryFire is a social platform for content creators to share and monetize their stories and videos. Using Rockset to index data from their transactional MongoDB system, StoryFire powers complex aggregation and join queries for their social and leaderboard features.

By moving read-intensive services off MongoDB to Rockset, StoryFire is able to solve two hard challenges: performance and scale. The performance requirement is to serve low-latency queries so that front-end applications feel snappy and responsive. The scaling challenge introduces requirements for high concurrency, where serving increased Queries Per Second (QPS) is critical.

In this case study, we explore how StoryFire has simplified and scaled their real-time application architecture to future-proof it for huge growth in user activity. We examine one particular query “hot spot” and show how Rockset can be used to offload computationally expensive queries for unpredictable workloads.

User Growth Brings Performance Challenges

By offering greater support for content creators and more opportunities for monetization, StoryFire is enjoying significant growth in user activity as users migrate from other platforms to grow their following. These influencer migrations cause significant spikes in site activity, during which the application must handle high concurrency while remaining responsive.

The StoryFire experience is implicitly real time and data driven: users expect to-the-second accuracy across all devices. One key feature lets a user see how many of their Stories have been viewed over the last 90 days, a common metric for any analytics dashboard. The query itself is relatively simple (a SQL JOIN with an aggregation); the challenge is serving it at high concurrency with low latency.

This query was identified as a potential hot spot for performance degradation as platform usage increases, because its execution time varies with the activity of the user. That makes it an ideal candidate to offload from MongoDB, the primary transactional database, to Rockset, where it can be scaled independently without starving other critical processes of resources.

Rockset as a Speed Layer for MongoDB

Rockset can be thought of as a fully managed, click-and-connect “speed layer” for serving and scaling any data set. Commonly, introducing Rockset simplifies many aspects of the overall architecture: ETL pipelines for transformations and denormalization can be reduced or eliminated, and overall complexity drops because Rockset requires no setup, administration or performance tuning.

MongoDB for Transactions

StoryFire selected MongoDB hosted on the MongoDB Atlas cloud as their primary transactional database, enjoying the benefits of both a scalable NoSQL document store along with the consistency required for their transactional needs. Using MongoDB Atlas allows StoryFire to use MongoDB as a cloud service, without the need to build and self-manage their own cluster.

Rockset Integration

As noted, Rockset connects to other data sources and automatically keeps the data synchronized in real time. In the case of MongoDB, Rockset connects to the Change Data Capture (CDC) stream from MongoDB Atlas. This is a zero-code integration and can be completed in a few minutes.

Once the initial connection has been made, Rockset examines the data sizes within MongoDB and automatically ramps up ingest resources for the initial “bulk load.” Once that load is complete, Rockset scales the ingest resources back down and continues consuming any ongoing changes. One of the key architectural benefits here is that Rockset collections can be synchronized with MongoDB collections individually, so only the data needed for the use case has to be synchronized. This aligns well with a microservices architecture.

Application Integration

Rockset allows users to save, version and publish SQL queries over HTTP so that they can be rapidly integrated into front-end applications and called from any programming language that supports HTTP. These RESTful resources are called Query Lambdas. Query Lambdas also accept parameters at request time. In this example, the StoryFire user interface lets users look back over 30, 60 and 90 days, and the query must be specific to an individual hostID. Both values are ideal candidates for parameters. Rockset’s documentation covers Query Lambdas in more detail.
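As a rough illustration of how a front-end service might call such a Query Lambda, the sketch below builds (but does not send) a parameterized HTTP request using only the Python standard library. The host name, workspace, lambda name, tag, URL layout and request body shape are all assumptions made for illustration; they are not taken from StoryFire's deployment and should be checked against the Rockset API reference before use.

```python
import json
import urllib.request

# All of the values below are hypothetical placeholders, not real endpoints.
API_HOST = "https://rockset-api.example.com"
WORKSPACE = "storyfire"          # assumed workspace name
LAMBDA_NAME = "views_by_day"     # assumed Query Lambda name
TAG = "latest"                   # assumed version tag

ALLOWED_WINDOWS = (30, 60, 90)   # look-back windows offered by the UI


def build_lambda_request(host_id, days, api_key):
    """Build a POST request that passes hostID and days as parameters."""
    if days not in ALLOWED_WINDOWS:
        raise ValueError(f"days must be one of {ALLOWED_WINDOWS}")
    # Assumed URL layout for executing a tagged Query Lambda.
    url = f"{API_HOST}/v1/orgs/self/ws/{WORKSPACE}/lambdas/{LAMBDA_NAME}/tags/{TAG}"
    # Assumed body shape: a list of named, typed parameters.
    body = {
        "parameters": [
            {"name": "hostID", "type": "string", "value": host_id},
            {"name": "days", "type": "int", "value": str(days)},
        ]
    }
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"ApiKey {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

Passing the returned object to `urllib.request.urlopen` would send the request. Validating `days` against the three windows the UI offers keeps arbitrary ranges from reaching the query.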

Virtual Instances

The final feature of note is the ability to scale Rockset’s compute resources without downtime, within a minute or two. The compute resources allocated to an account are called virtual instances, each consisting of a set number of vCPUs and associated memory. Because changing instance types is a zero-downtime operation, it’s easy for customers like StoryFire to set a cost/performance ratio they are happy with and to adjust it as their needs change.

Constructing Queries on User Activity

StoryFire data is organized into several collections. The User collection defines all the users and their IDs. The Events collection captures every new story published, and the EventViews collection records a new entry every time a user views a story.

The query in question involves a JOIN between two collections: Events and EventViews where an Event can have many EventViews. As with many other analytical workloads, the goal here is to aggregate some metric across a particular subset of records and view the trend over time.

    SELECT
        SUM(v."count"),
        DATE(v.timestamp) AS day
    FROM
        EventViews v
        INNER JOIN Events s ON v.fbId = s.fbId
    WHERE
        s.hostID = '[user specific id]'
        AND s.hasVideo = true
        AND v.timestamp > CURRENT_TIMESTAMP() - DAYS(90)
    GROUP BY
        day
    ORDER BY
        day DESC;

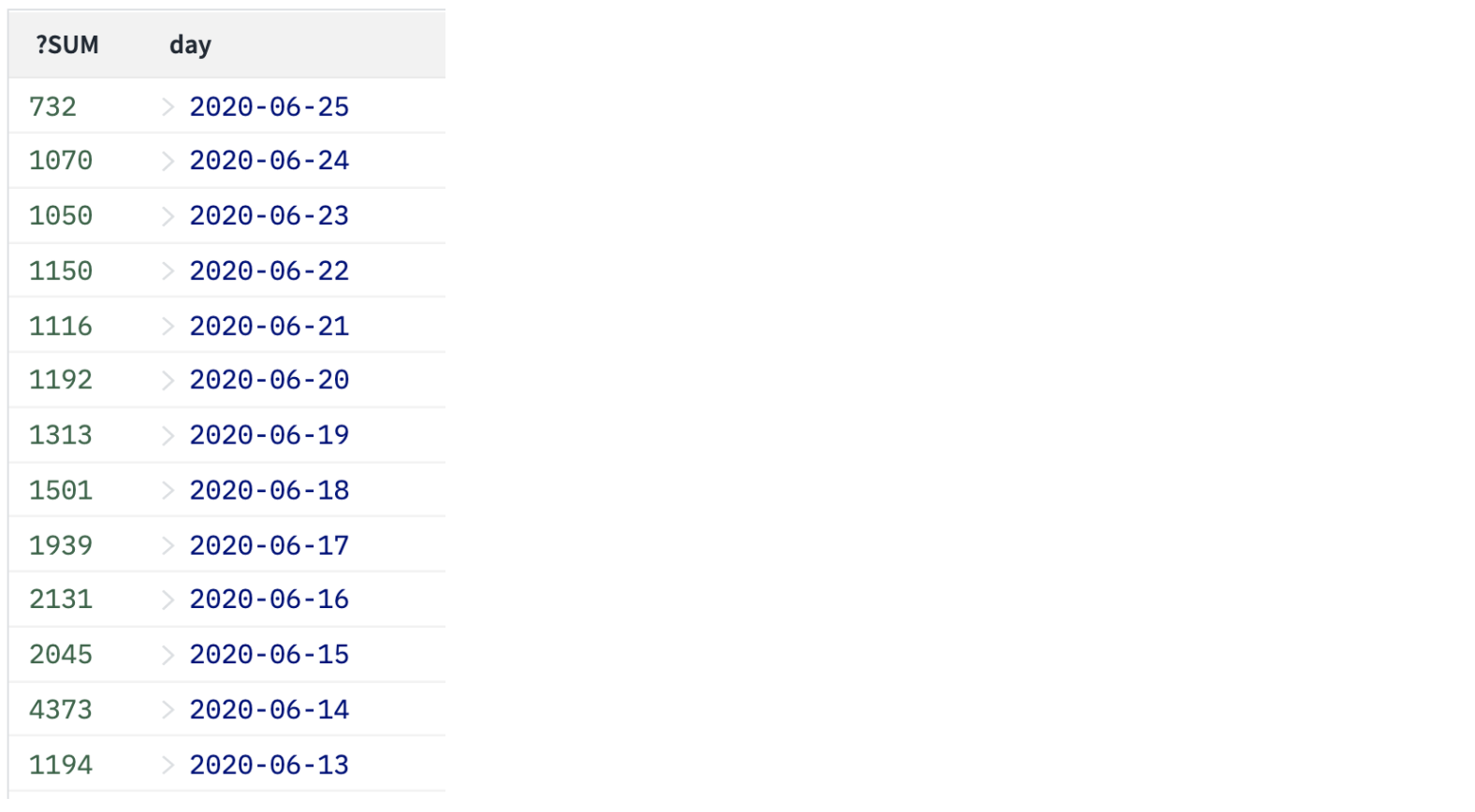

This yields a result set like the following:
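To make the semantics of the join and aggregation concrete, here is a minimal sketch that runs an equivalent query against an in-memory SQLite database with a handful of made-up rows. SQLite's dialect differs from Rockset SQL (for example, `DATETIME(:now, '-90 days')` stands in for `CURRENT_TIMESTAMP() - DAYS(90)`, and `hasVideo` is stored as 0/1), and the sample data, the helper names and the explicit `now` argument are assumptions introduced only for this illustration.

```python
import sqlite3

# SQLite rendering of the query above; host id, look-back window and
# reference time are bound as named parameters.
QUERY = """
    SELECT SUM(v."count") AS views, DATE(v.timestamp) AS day
    FROM EventViews v
    INNER JOIN Events s ON v.fbId = s.fbId
    WHERE s.hostID = :host_id
      AND s.hasVideo = 1
      AND v.timestamp > DATETIME(:now, :offset)
    GROUP BY day
    ORDER BY day DESC
"""


def daily_views(conn, host_id, days, now):
    """Return (views, day) rows for one host over the last `days` days."""
    params = {"host_id": host_id, "now": now, "offset": f"-{int(days)} days"}
    return conn.execute(QUERY, params).fetchall()


def make_sample_db():
    """Create a tiny, made-up data set shaped like the two collections."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE Events (fbId TEXT, hostID TEXT, hasVideo INTEGER)")
    conn.execute('CREATE TABLE EventViews (fbId TEXT, timestamp TEXT, "count" INTEGER)')
    conn.executemany("INSERT INTO Events VALUES (?, ?, ?)", [
        ("e1", "host-1", 1),   # video story by host-1
        ("e2", "host-1", 0),   # non-video story, filtered out
        ("e3", "host-2", 1),   # another host, filtered out
    ])
    conn.executemany("INSERT INTO EventViews VALUES (?, ?, ?)", [
        ("e1", "2020-07-13 10:00:00", 3),
        ("e1", "2020-07-13 18:00:00", 2),
        ("e1", "2020-06-01 09:00:00", 4),
        ("e1", "2020-01-01 00:00:00", 7),  # older than 90 days
        ("e2", "2020-07-13 11:00:00", 5),
        ("e3", "2020-07-13 12:00:00", 9),
    ])
    return conn
```

With the reference time fixed at `2020-07-14 00:00:00`, calling `daily_views(make_sample_db(), "host-1", 90, "2020-07-14 00:00:00")` returns one row per day on which host-1's video stories were viewed, newest day first.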

Rockset automatically generates Row, Column, and Inverted indexes, and the optimizer chooses the most efficient execution path based on the predicates in the query. For example, if the hostID predicate matched many millions of rows, the column index would be selected because it is highly optimized for large range scans. However, if only a small fraction of the rows matched the predicate, the inverted index could be used to identify those rows in a matter of milliseconds. This automated indexing reduces the operational burden that DBAs typically shoulder in maintaining indexes, and it allows developers and analysts to write SQL without worrying about slow, unindexed queries wasting their time or stalling their applications.
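The trade-off described here can be pictured with a toy model. The sketch below is not Rockset's optimizer or index format; it is a deliberately simplified, hypothetical illustration in which a predicate that matches a large share of rows is answered by scanning the column, while a selective predicate is answered from an inverted index. The function names and the `scan_threshold` cut-off are invented for the example.

```python
from collections import defaultdict


def build_inverted_index(rows, field):
    """Map each distinct value of `field` to the ids of the rows containing it."""
    index = defaultdict(list)
    for row_id, row in enumerate(rows):
        index[row[field]].append(row_id)
    return index


def choose_access_path(rows, index, field, value, scan_threshold=0.5):
    """Pick an access path from the predicate's selectivity (toy heuristic).

    Returns the chosen path name and the ids of the matching rows.
    """
    candidates = index.get(value, [])
    if len(candidates) > scan_threshold * len(rows):
        # Most rows match: reading the whole column sequentially is cheaper.
        matching = [i for i, row in enumerate(rows) if row[field] == value]
        return "column_scan", matching
    # Few rows match: jump straight to them through the inverted index.
    return "inverted_index", list(candidates)
```

Both paths return the same row ids; only the way they are located differs, which is why the choice can be left to an optimizer rather than to the person writing the SQL.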

Solving for Performance and Scale

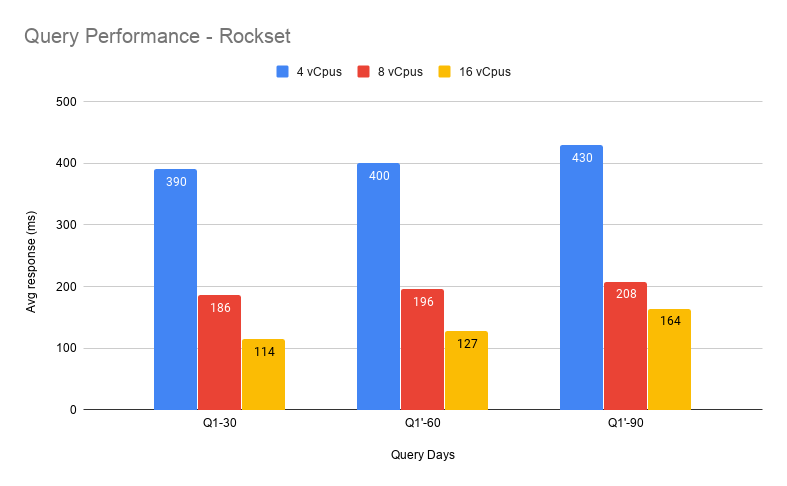

The SQL query was tested on Rockset with the look-back window set to 30, 60 and 90 days.

We can see here that as the range of data to be queried (the number of days) increases, Rockset’s performance stays roughly the same. While the response time for this query goes up in proportion to data size when querying MongoDB directly, Rockset’s query response time does not increase materially even when we go from 30 to 90 days of data. This demonstrates the power and efficiency of Converged Indexes along with the query optimizer. It is worth noting that the test query used a user ID that had several hundred join IDs and hence was relatively expensive to run. The same query for users with lower data volumes will execute in the double-digit millisecond range.

Overall, the results demonstrate the scaling capability of Rockset. As the compute is increased, the performance increases proportionally. Given this is a zero downtime and fast operation, it is easy to scale up and down as needed.

From an architectural perspective, an expensive query was moved onto Rockset, where it can take advantage of massively parallel execution and where compute resources can be scaled up and down as needed. Removing the complex read burden from a transactional system like MongoDB allows performance to remain consistent for the core transactional workloads.

We are excited to partner with StoryFire on their scaling journey.
