JOINs and Aggregations Using Real-Time Indexing on MongoDB Atlas

June 16, 2020

MongoDB.live took place last week, and Rockset had the opportunity to participate alongside members of the MongoDB community and share our work on making MongoDB data accessible through real-time external indexing. In our session, we discussed why modern data-driven applications need real-time aggregations and joins, and how Rockset uses MongoDB change streams and Converged Indexing to deliver fast queries on data from MongoDB.

Data-Driven Applications Need Real-Time Aggregations and Joins

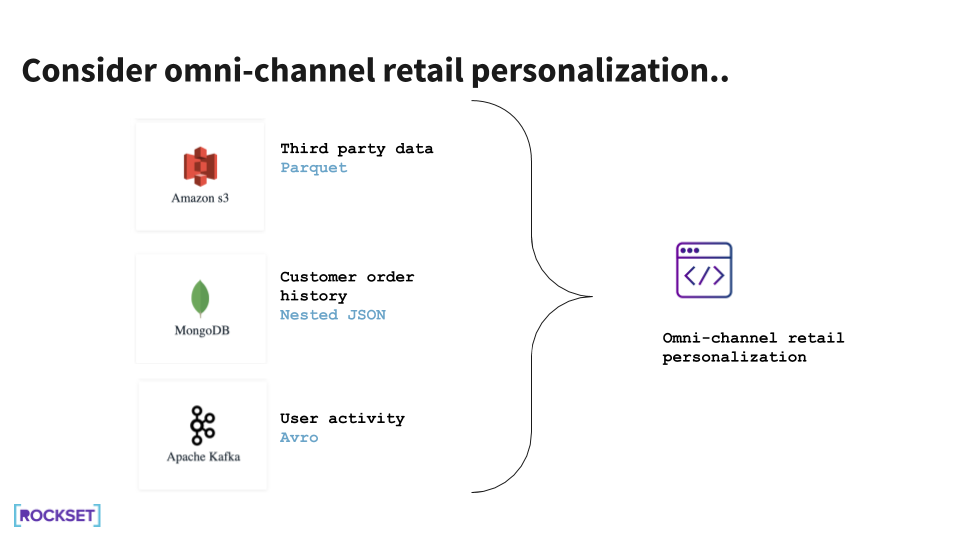

Developers of data-driven applications face many challenges. Today's applications often operate on data from multiple sources: databases like MongoDB, streaming platforms, and data lakes. The data volumes these applications need to analyze typically scale into multiple terabytes. Above all, applications need fast queries on live data to personalize user experiences, provide real-time customer 360s, or detect anomalous situations.

An omni-channel retail personalization application, as an example, may require order data from MongoDB, user activity streams from Kafka, and third-party data from a data lake. The application will have to determine what product recommendation or offer to deliver to customers in real time, while they are on the website.

Real-Time Architecture Today

One of two options is typically used to support these real-time data-driven applications today.

- We can continuously ETL all new data from multiple data sources, such as MongoDB, Kafka, and Amazon S3, into another system, like PostgreSQL, that can support aggregations and joins. However, it takes time and effort to build and maintain the ETL pipelines. Not only would we have to update our pipelines regularly to handle new data sets or changed schemas, but the pipelines would also add latency, so the data would already be stale by the time it could be queried in the second system.

- We can load new data from other data sources (Kafka and Amazon S3) into our production MongoDB instance and run our queries there. We would be responsible for building and maintaining pipelines from these sources to MongoDB. This solution works well at smaller scale, but scaling data, queries, and performance can prove difficult: we would have to manage multiple indexes in MongoDB and write application-side logic to support complex queries like joins.

A Real-Time External Indexing Approach

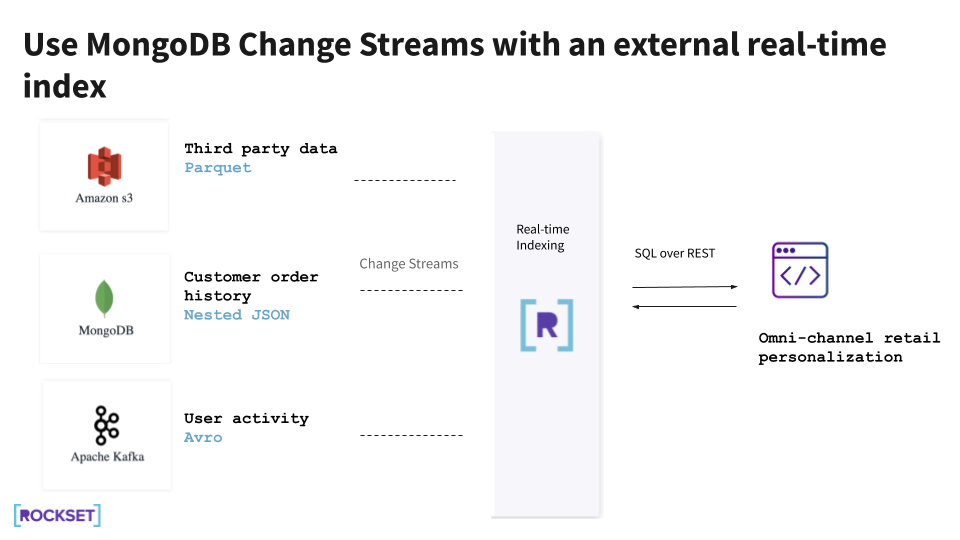

We can take a different approach to meeting the requirements of data-driven applications.

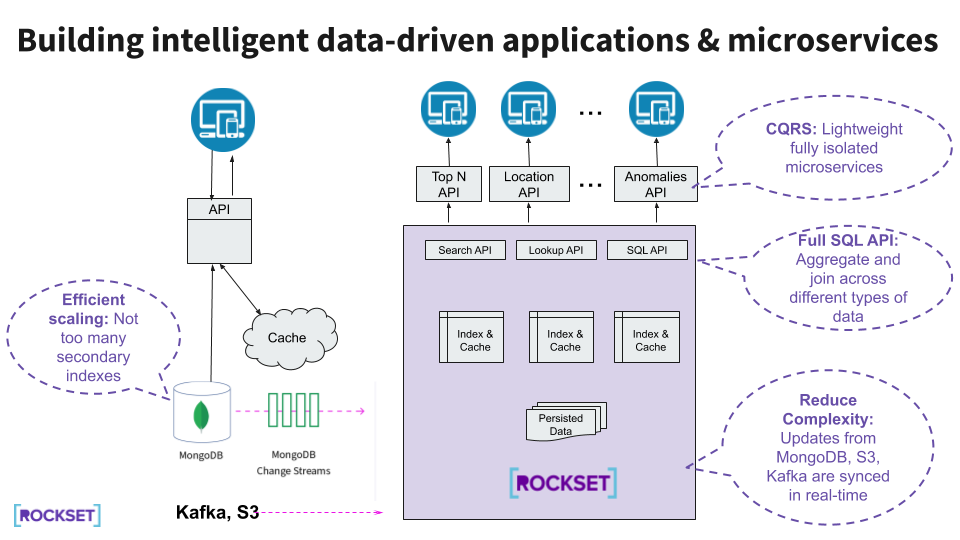

Using Rockset for real-time indexing allows us to create APIs simply using SQL for search, aggregations, and joins. This means no extra application-side logic is required to support complex queries. Instead of creating and managing our own indexes, Rockset automatically builds indexes on ingested data. And Rockset ingests data without requiring a pre-defined schema, so we can skip ETL pipelines and query the latest data.
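To make the "no application-side logic" point concrete, here is a minimal sketch of a join plus aggregation expressed in a single SQL statement. It uses Python's built-in SQLite module purely as a stand-in so the example is runnable; it is not Rockset's client or SQL dialect, and the table and column names (`orders`, `page_views`, `user_id`, and so on) are hypothetical.

```python
import sqlite3

# Stand-in data: "orders" mimics a collection synced from MongoDB and
# "page_views" mimics an activity stream. All names are hypothetical.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE orders (user_id TEXT, product TEXT, amount REAL);
    CREATE TABLE page_views (user_id TEXT, product TEXT);
    INSERT INTO orders VALUES
        ('u1', 'shoes', 80.0), ('u1', 'socks', 10.0), ('u2', 'hat', 25.0);
    INSERT INTO page_views VALUES
        ('u1', 'shoes'), ('u1', 'jacket'),
        ('u2', 'hat'), ('u2', 'scarf'), ('u2', 'scarf');
""")

# One statement does the aggregation (total spend per user) and the join
# (against that user's page views); no merging code in the application.
QUERY = """
    SELECT o.user_id,
           o.total_spent,
           COUNT(v.product) AS views
    FROM (SELECT user_id, SUM(amount) AS total_spent
          FROM orders
          GROUP BY user_id) AS o
    JOIN page_views AS v ON v.user_id = o.user_id
    GROUP BY o.user_id, o.total_spent
    ORDER BY o.user_id
"""

rows = conn.execute(QUERY).fetchall()
for user_id, total_spent, views in rows:
    print(user_id, total_spent, views)
```

The same query written against MongoDB alone would typically need either an aggregation pipeline per source or code in the application to stitch the two result sets together.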

Rockset provides built-in connectors to MongoDB and other common data sources, so we don’t have to build our own. For MongoDB Atlas, the Rockset connector uses MongoDB change streams to continuously sync from MongoDB without affecting production MongoDB.
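As an illustration of what syncing from a change stream involves, the sketch below applies a sequence of change events to an in-memory copy of a collection. It is not how the Rockset connector is implemented. The event fields (`operationType`, `documentKey`, `fullDocument`, `updateDescription`) follow MongoDB's change event format, but the events here are hand-written dictionaries; in a live setup they would come from a driver call such as PyMongo's `Collection.watch()`. The function name `apply_change_event` is hypothetical.

```python
from typing import Any, Dict


def apply_change_event(replica: Dict[Any, dict], event: dict) -> None:
    """Apply one change event to an in-memory copy keyed by _id.

    Simplification: only top-level fields are handled in updates; real
    events can carry dotted paths for nested fields.
    """
    op = event["operationType"]
    doc_id = event["documentKey"]["_id"]
    if op in ("insert", "replace"):
        replica[doc_id] = dict(event["fullDocument"])
    elif op == "update":
        doc = replica.setdefault(doc_id, {"_id": doc_id})
        desc = event["updateDescription"]
        doc.update(desc.get("updatedFields", {}))
        for field in desc.get("removedFields", []):
            doc.pop(field, None)
    elif op == "delete":
        replica.pop(doc_id, None)


# Hand-written sample events standing in for a live change stream.
events = [
    {"operationType": "insert", "documentKey": {"_id": 1},
     "fullDocument": {"_id": 1, "status": "new", "total": 40}},
    {"operationType": "update", "documentKey": {"_id": 1},
     "updateDescription": {"updatedFields": {"status": "shipped"},
                           "removedFields": ["total"]}},
    {"operationType": "insert", "documentKey": {"_id": 2},
     "fullDocument": {"_id": 2, "status": "new"}},
    {"operationType": "delete", "documentKey": {"_id": 2}},
]

replica: Dict[Any, dict] = {}
for event in events:
    apply_change_event(replica, event)

print(replica)
```

Because the consumer only reads the stream of changes, it does not issue queries against the collections that serve production traffic.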

In this architecture, there is no need to modify MongoDB to support data-driven applications, as all the heavy reads from the applications are offloaded to Rockset. Using full-featured SQL, we can build different types of microservices on top of Rockset, such that they are isolated from the production MongoDB workload.

How Rockset Does Real-Time Indexing

Rockset was designed to be a fast indexing layer, synced to a primary database. Several aspects of Rockset make it well-suited for this role.

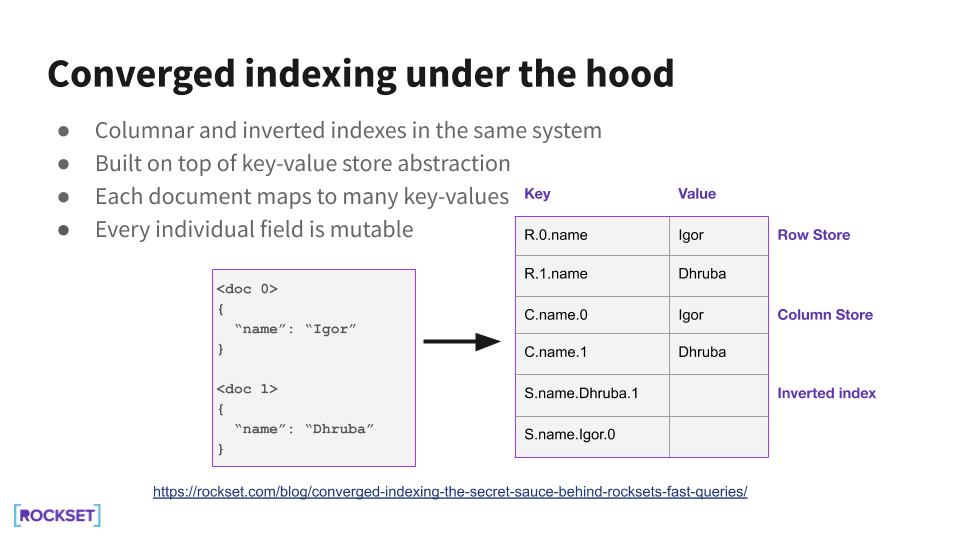

Converged Indexing

Rockset's Converged Index™ indexes every field automatically, including nested fields. There is no need to create and maintain indexes or worry about which fields to index. Because every field is indexed as data is ingested, new data becomes available to queries almost immediately, and queries can run fast without manual index tuning.

Rockset stores every field of every document in an inverted index (as Elasticsearch does), a column-based index (as many data warehouses do), and a row-based index (as MongoDB or PostgreSQL does). Each index is optimized for different types of queries.

Rockset is able to index everything efficiently by shredding documents into key-value pairs and storing them in RocksDB, a key-value store. Unlike in other indexing solutions, such as Elasticsearch, each field is mutable, meaning new fields can be added or individual fields updated without having to reindex the entire document.
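The following is a minimal sketch of the shredding idea, not Rockset's actual key encoding. Each field of a document becomes three key-value entries, one per index type, and a plain Python dictionary stands in for the key-value store. The key prefixes `R`, `C`, and `S` and the function names are assumptions made for illustration, and only scalar field values are handled.

```python
from typing import Any, Dict, Iterator, Tuple


def flatten(doc: dict, prefix: str = "") -> Iterator[Tuple[str, Any]]:
    """Yield (dotted_path, value) for every scalar field, including nested ones."""
    for name, value in doc.items():
        path = f"{prefix}.{name}" if prefix else name
        if isinstance(value, dict):
            yield from flatten(value, path)
        else:
            yield path, value


def shred(doc_id: str, doc: dict) -> Dict[tuple, Any]:
    """Turn one document into key-value entries for three index layouts."""
    entries: Dict[tuple, Any] = {}
    for path, value in flatten(doc):
        entries[("R", doc_id, path)] = value         # row: document, then field
        entries[("C", path, doc_id)] = value         # column: field, then document
        entries[("S", path, value, doc_id)] = None   # inverted: field, value, document
    return entries


def update_field(store: Dict[tuple, Any], doc_id: str, path: str, new_value: Any) -> None:
    """Change one field by touching only that field's entries."""
    old_value = store[("R", doc_id, path)]
    del store[("S", path, old_value, doc_id)]
    store[("R", doc_id, path)] = new_value
    store[("C", path, doc_id)] = new_value
    store[("S", path, new_value, doc_id)] = None


store = shred("d1", {"name": "Ada", "address": {"city": "Paris"}})
update_field(store, "d1", "address.city", "Lyon")
```

Updating `address.city` rewrites three entries and leaves the entries for `name` untouched, which is the sense in which individual fields are mutable without reindexing the whole document.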

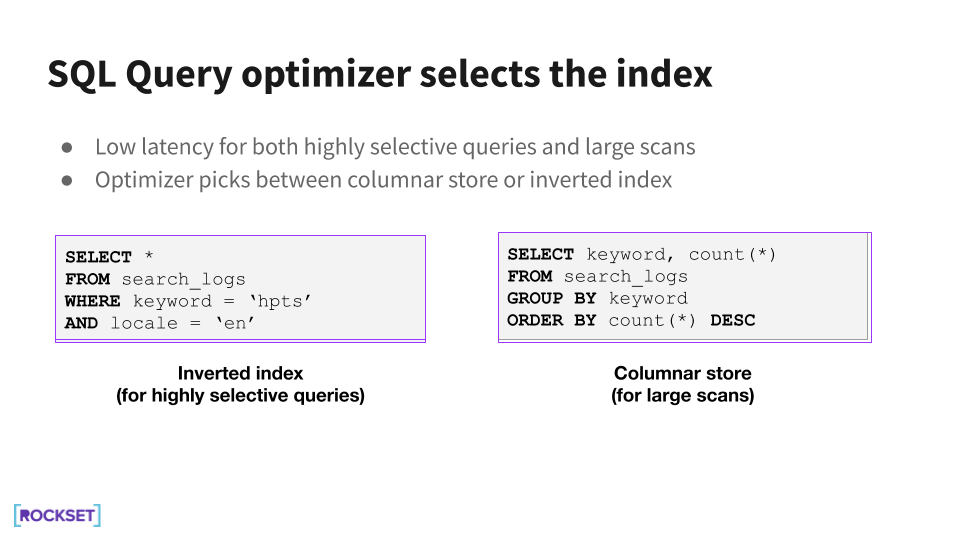

The inverted index helps with point lookups, while the column-based index makes it easy to scan through column values for aggregations. The query optimizer selects the most appropriate indexes to use when planning query execution.
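A small sketch can show why the two index shapes suit different queries. It uses in-memory dictionaries rather than Rockset's storage format, and the sample documents and function names are hypothetical. The inverted index maps a (field, value) pair straight to matching document IDs, while the column index keeps all values of one field together for scanning.

```python
from collections import defaultdict

docs = {
    "d1": {"city": "Paris", "amount": 30},
    "d2": {"city": "Lyon", "amount": 20},
    "d3": {"city": "Paris", "amount": 50},
}

inverted = defaultdict(set)   # (field, value) -> set of document ids
columns = defaultdict(dict)   # field -> {document id: value}
for doc_id, doc in docs.items():
    for field, value in doc.items():
        inverted[(field, value)].add(doc_id)
        columns[field][doc_id] = value


def point_lookup(field, value):
    """Find matching documents with one dictionary access, no scan."""
    return sorted(inverted.get((field, value), set()))


def column_sum(field):
    """Aggregate by scanning a single column, ignoring all other fields."""
    return sum(columns[field].values())


def filtered_sum(filter_field, filter_value, sum_field):
    """Combine both: the inverted index narrows the ids, the column supplies values."""
    ids = inverted.get((filter_field, filter_value), set())
    return sum(columns[sum_field][doc_id] for doc_id in ids)


print(point_lookup("city", "Paris"))            # ['d1', 'd3']
print(column_sum("amount"))                     # 100
print(filtered_sum("city", "Paris", "amount"))  # 80
```

A selective filter is cheaper through the inverted index, while an aggregation over most documents is cheaper as a column scan. Choosing between them per query is the kind of decision the optimizer makes.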

Schemaless Ingest

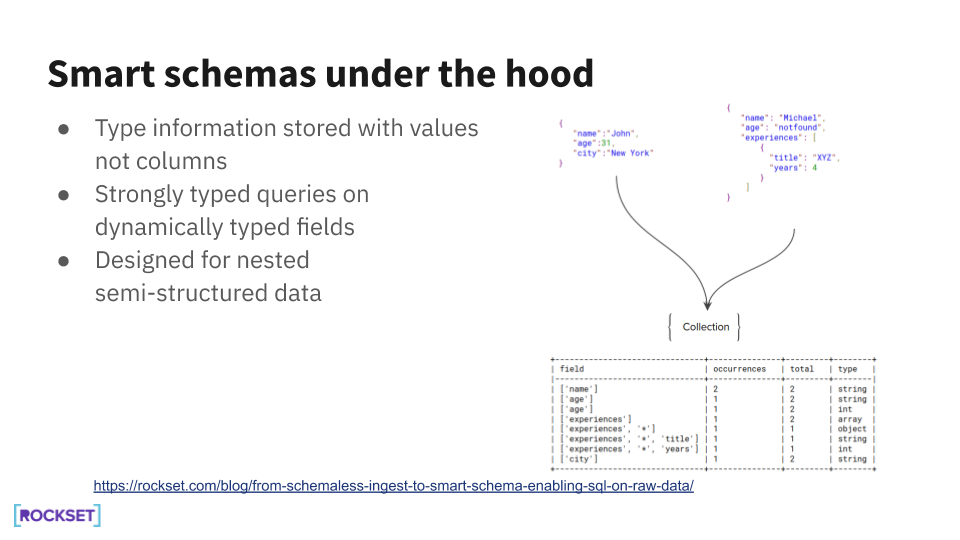

Another key requirement for real-time indexing is the ability to ingest data without a pre-defined schema. This makes it possible to avoid ETL processing steps when indexing data from MongoDB, which similarly has a flexible schema.

However, schemaless ingest alone is not particularly useful if we are not able to query the data being ingested. To solve this, Rockset automatically creates a schema on the ingested data so that it can be queried using SQL, a concept termed Smart Schema. In this manner, Rockset enables SQL queries to be run on NoSQL data, from MongoDB, data lakes, or data streams.
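The idea of deriving a schema from the data itself can be sketched as follows. This is an illustration of the concept only, not Rockset's Smart Schema implementation; the function name `infer_schema` and the sample documents are hypothetical. The sketch records every field path it sees together with the value types observed for that path.

```python
from collections import defaultdict
from typing import Dict, Iterable, List


def infer_schema(documents: Iterable[dict]) -> Dict[str, List[str]]:
    """Map each dotted field path to the sorted list of type names seen for it."""
    seen = defaultdict(set)

    def walk(doc: dict, prefix: str = "") -> None:
        for name, value in doc.items():
            path = f"{prefix}.{name}" if prefix else name
            if isinstance(value, dict):
                walk(value, path)          # descend into nested documents
            else:
                seen[path].add(type(value).__name__)

    for document in documents:
        walk(document)
    return {path: sorted(types) for path, types in seen.items()}


sample = [
    {"name": "Ada", "age": 36},
    {"name": "Bob", "age": "unknown", "address": {"zip": "75001"}},
]
schema = infer_schema(sample)
print(schema)
```

Note that `age` ends up with two types and `address.zip` appears in only one document. A derived schema has to tolerate mixed types and missing fields, since nothing was enforced at write time.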

Disaggregated Aggregator-Leaf-Tailer Architecture

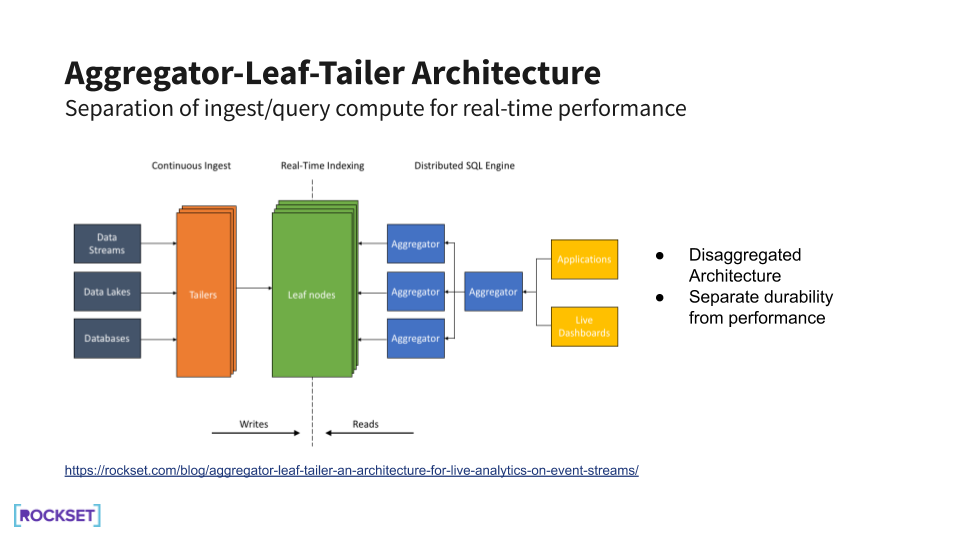

For real-time indexing, it is essential to deliver real-time performance for ingest and query. To do so, Rockset uses a disaggregated Aggregator-Leaf-Tailer architecture that takes advantage of cloud elasticity.

Tailers ingest data continuously, leaves index and store the indexed data, and aggregators serve queries on the data. Each component of this architecture is decoupled from the others. Practically, this means that compute and storage can be scaled independently, depending on whether the application workload is compute- or storage-biased.

Further, within the compute portion, ingest compute can be separately scaled from query compute. On a bulk load, we can spin up more tailers to minimize the time required to ingest. Similarly, during spikes in application activity, we can spin up more aggregators to handle a higher rate of queries. Rockset is then able to make full use of cloud efficiencies to minimize latencies in the system.

Using MongoDB and Rockset Together

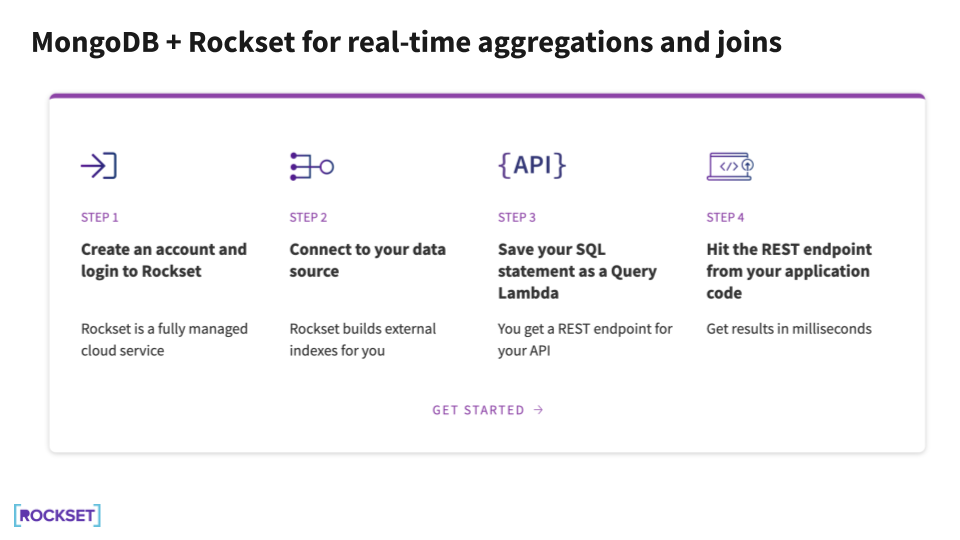

MongoDB and Rockset recently partnered to deliver a fully managed connector between MongoDB Atlas and Rockset. Using the two services together brings several benefits to users:

- Use any data in real time with schemaless ingest - Index continuously from MongoDB, other databases, data streams, and data lakes with built-in connectors.

- Create APIs in minutes using SQL - Create APIs using SQL for complex queries, like search, aggregations, and joins.

- Scale better by offloading heavy reads to a speed layer - Scale to millions of fast API calls without impacting production MongoDB performance.

Putting MongoDB and Rockset together takes a few simple steps. We recorded a step-by-step walkthrough here to show how it’s done. You can also check out our full MongoDB.live session here.

Ready to get started? Create your Rockset account now!
