Create APIs for Aggregations and Joins on MongoDB in Under 15 Minutes

May 19, 2020

Rockset has teamed up with MongoDB so you can build real-time apps with data across MongoDB and other sources. If you haven’t heard of Rockset or know what Rockset does, you will by the end of this guide! We’ll create an API to determine air quality using ClimaCell data on the weather and air pollutants.

Air quality has been documented to effect human health (resources at the bottom). In particular, levels of particulate matter (PM), sulfur dioxide (SO2), nitrogen dioxide (NO2), the ozone molecule (O3), and carbon monoxide (CO) are measured in order to suggest a person’s recommended activity level outdoors. What effects PM, SO2, NO2, O3, and CO levels are subject to extensive study: scientists examine temperature, humidity, traffic congestion, wind gust, and so on to see how the air quality index (AQI) changes with respect to these pollutants.

It’s important to note that this is a sample app to show how MongoDB can integrate with Rockset and demo Rockset’s super powers of building APIs. This is not a scientific project that is meant to be exhaustive in its conclusion. Much more data is needed, and many variables are not taken into consideration when doing the query. For more on air pollution, see below on resources. To leverage Rockset and MongoDB for your real-time applications (scientific or not), continue reading!

In subsequent tutorials, I can show how this dataset can potentially be used to understand how temperature and humidity affects AQI of PM and O3. You’re also welcome to do the challenges at the end of the tutorial to earn Rockset credit, swag, and more!

In this guide, we’re going to:

-

Integrate MongoDB Atlas and Rockset

- Build collections on MongoDB that will each map to Rockset’s collections

- Build a Python script that will continuously get the weather and air quality data from ClimaCell (Powered by ClimaCell) and put it into MongoDB

- Use Rockset’s Query Editor to write a query on real-time data coming in from MongoDB

- Create an API to the query we wrote

- Execute the API in our app and print out a statement about the air quality

Setup

- Make sure your OS is on the latest software

-

Have Python 3.7 or later

- I also use a virtualenv for my projects

- Install Rocket’s Python Client

- Install MongoDB’s Python client, pymongo

- Create (if you haven’t) a MongoDB, Rockset, and ClimaCell developer account

- Check out this video for a quick overview

Let’s get started on MongoDB

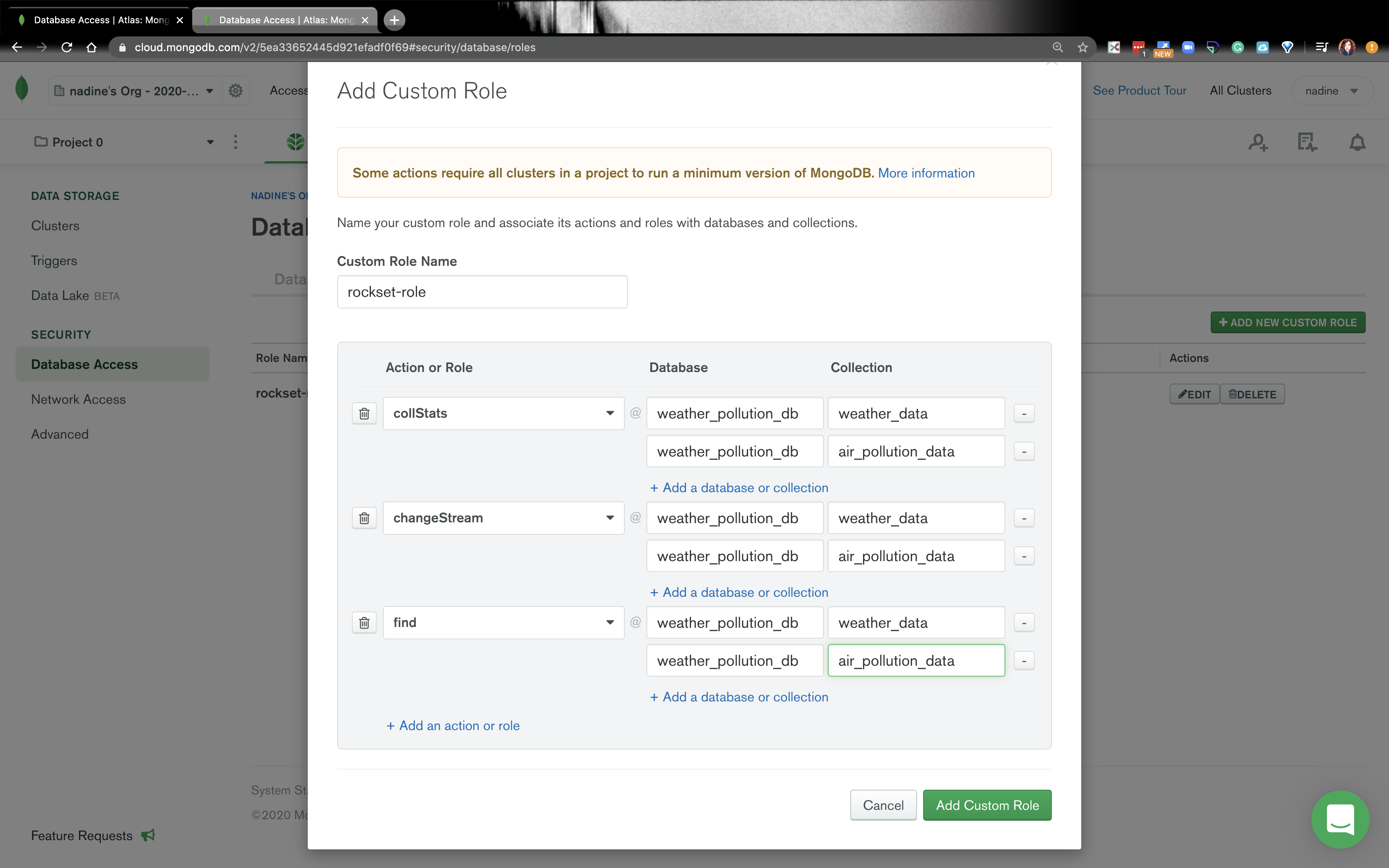

- After you’ve created an account on MongoDB Atlas, go ahead and navigate to Database Access → Custom Roles and click on Add New Custom Role. The image below is what actions and roles should be added for rockset-role. Rockset has secure read-only access to MongoDB Atlas.

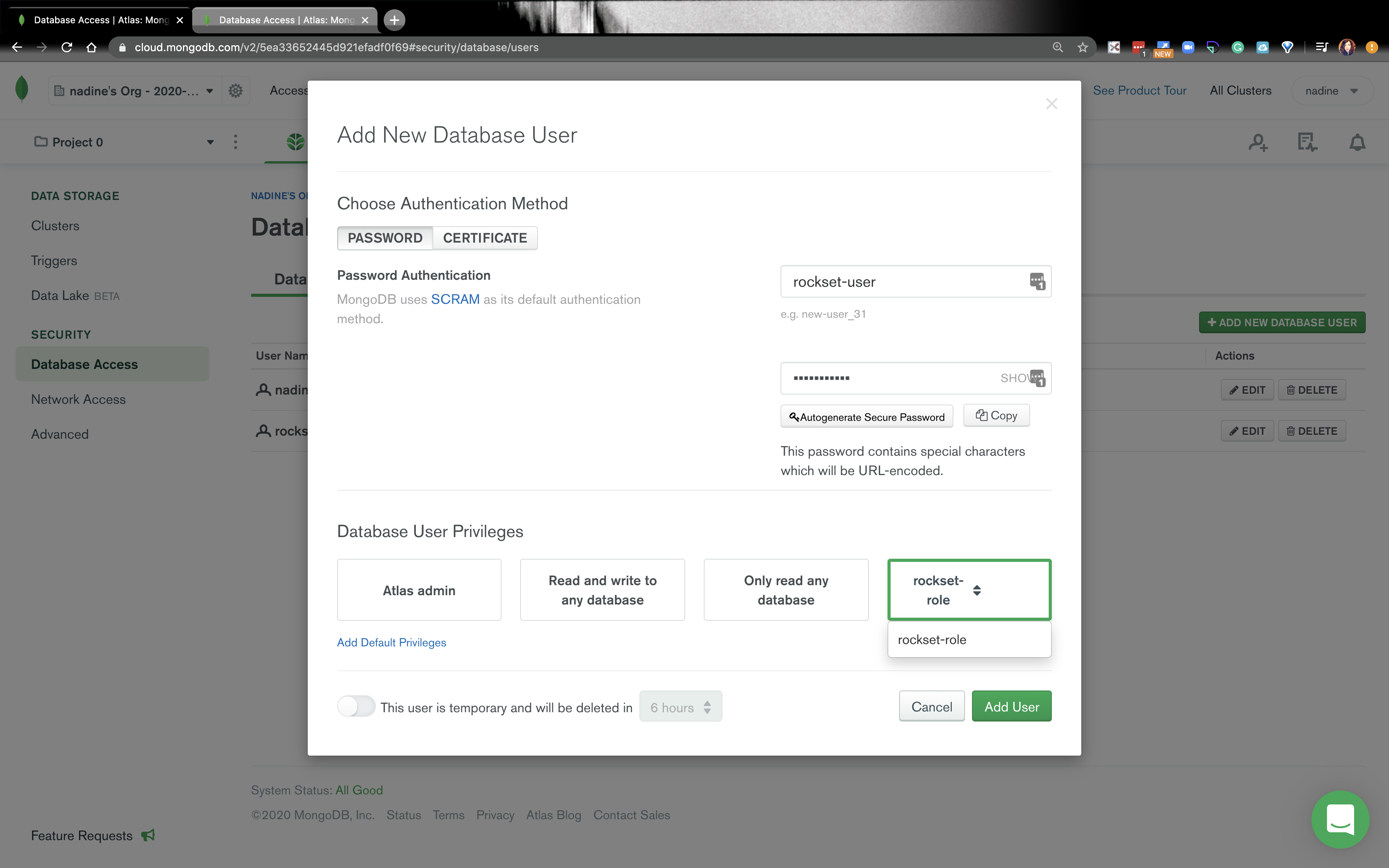

- Navigate to the Database Users tab and click on Add New Database User. Remember the password here, because we will need to use it again, when we create an integration on Rockset.

- Go ahead and create another database user that has Atlas admin privileges. We will be using this database user in our Python app. You can name this user yourName-admin. Make sure you remember the password here, because we will use this in our Python app.

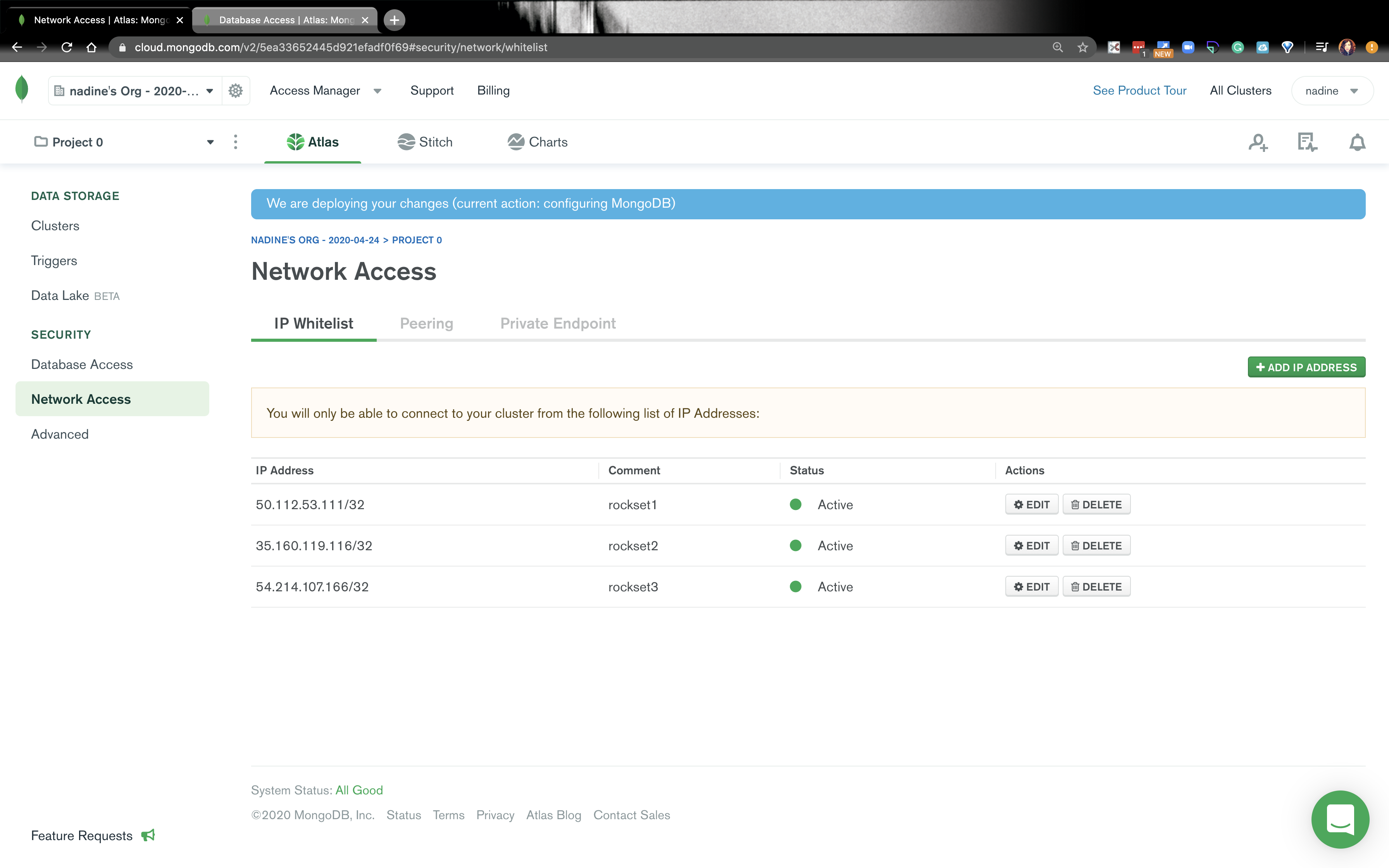

- Navigate to the Network Access click on Add IP Address and whitelist these IPs: Embedded content: https://gist.github.com/nfarah86/c6014ea1d60ec6113948d889afb16fdf

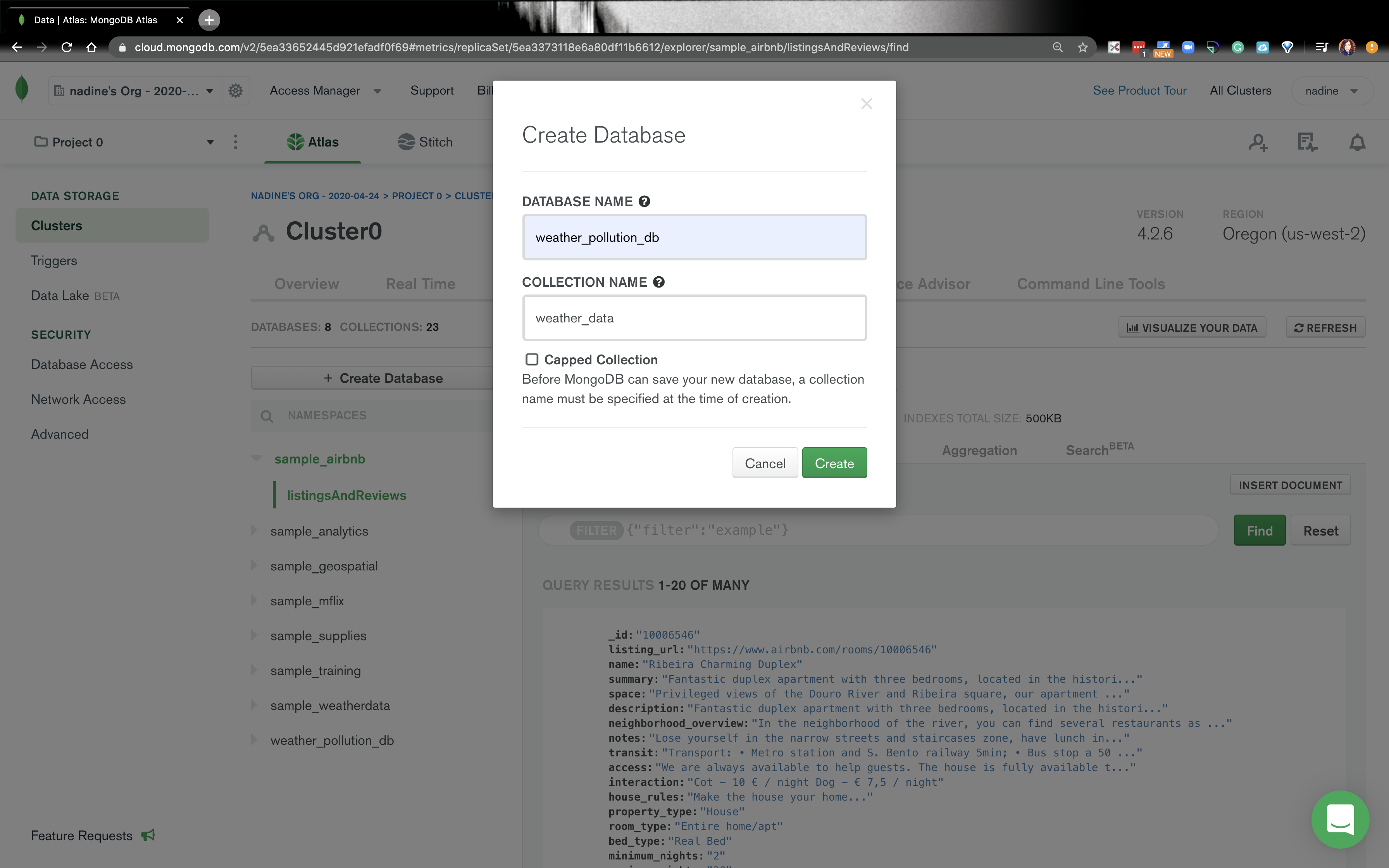

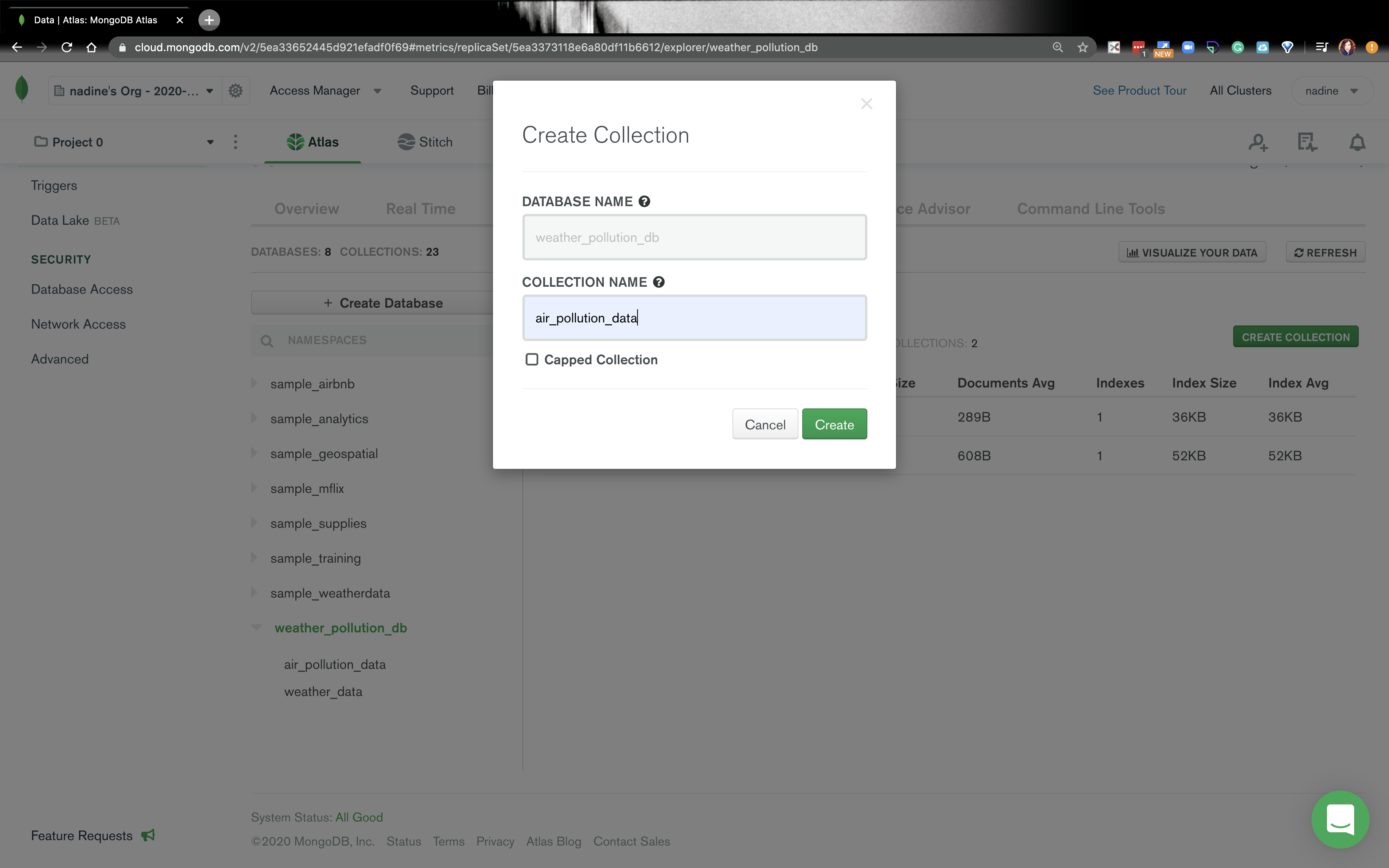

- Navigate to Clusters and click on Collections then click on Create database. We are going to create a

weather_pollution_dband it’s going to haveweather_datacollection.

- Under the

weather_pollution_db, there is going to be a plus sign. Click on the plus sign, and add another collection,air_pollution_data.

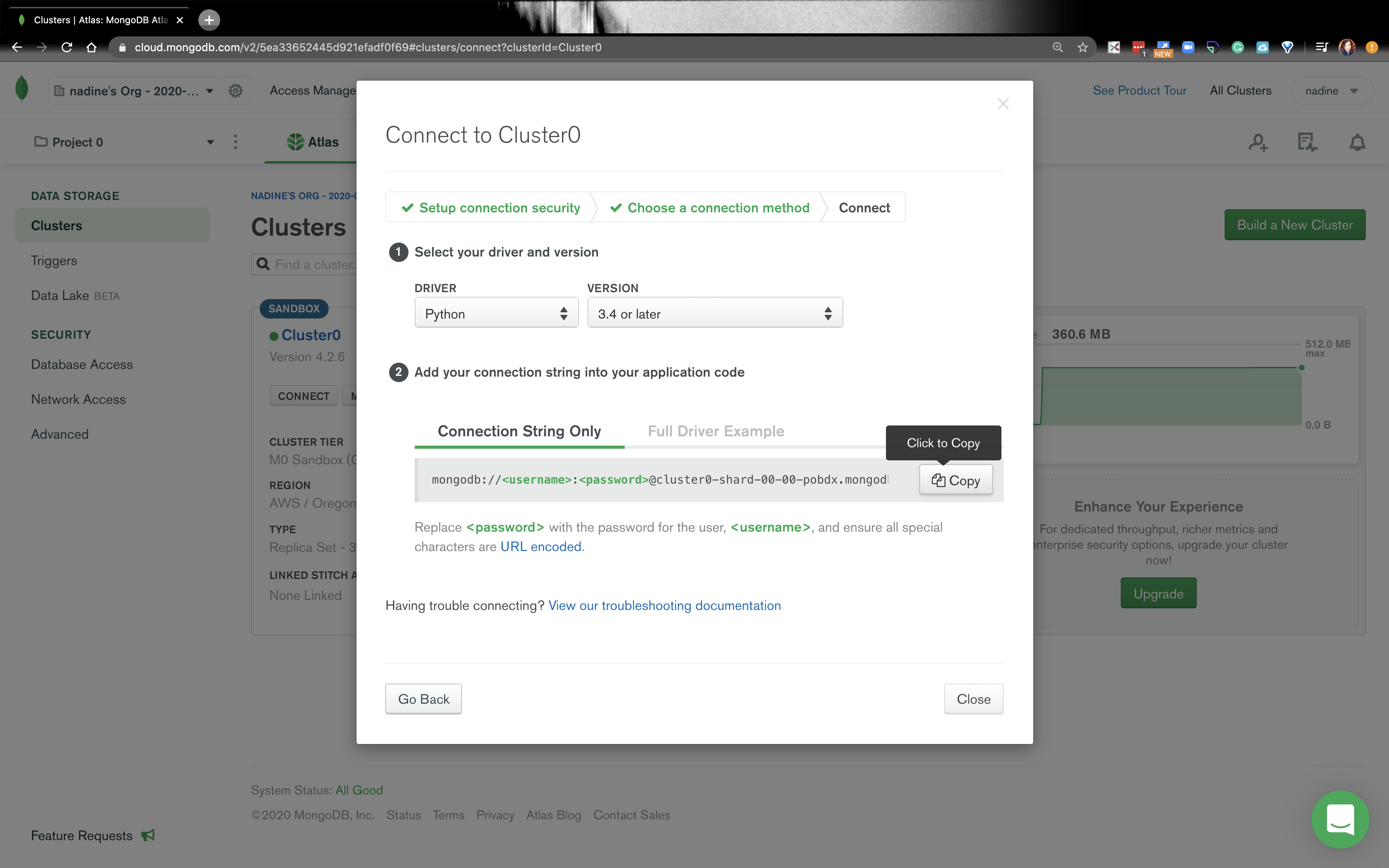

- Go back to Clusters and click on Connect and click on Connect your application. Copy the string, because we will use it in Rockset. When we are in our Rockset account, the username is

rockset-userand the password is the password you used when you createdrockset-user. In our Python app, the username will beyourName-adminand the password associated with that database user.

- That’s it for MongoDB! Let’s go ahead and write our Python app!

Let’s build our Python app

- Create a project folder, and in that project, create a file

.env. -

In the

.envfile add this:Mongo_URI=“<uri string>”- The

"<uri string>"is your connection string from MongoDB. Make sure you replace the username and password in the connection string withyourName-adminand the password you used when you created that database user. - It should look something like this:

mongodb://nadine-role-admin:password....

-

If you use a

virtualenvgo ahead activate a env for this project. Make sure you’re underPython 3.7or higher.- I personally use Pyenv, but feel free to use whatever you want!

-

Install

python-dotenv:$ pip install python-dotenv

-

Install [pymongo] and [dnspython]:

$ pip install pymongo$ pip install dnspython==1.16.0

- Inside our project folder, go ahead and create

settings.pyThis file should look like this: Embedded content: https://gist.github.com/nfarah86/f87a9d37f1f72bb2d4a73d9b73dc87b4. - Create another file in the project folder called

mongo_config.py. It should look like this: Embedded content: https://gist.github.com/nfarah86/1fc7bc9987d27edbec0fa9b32be95163 - In the project folder, go ahead and create file called

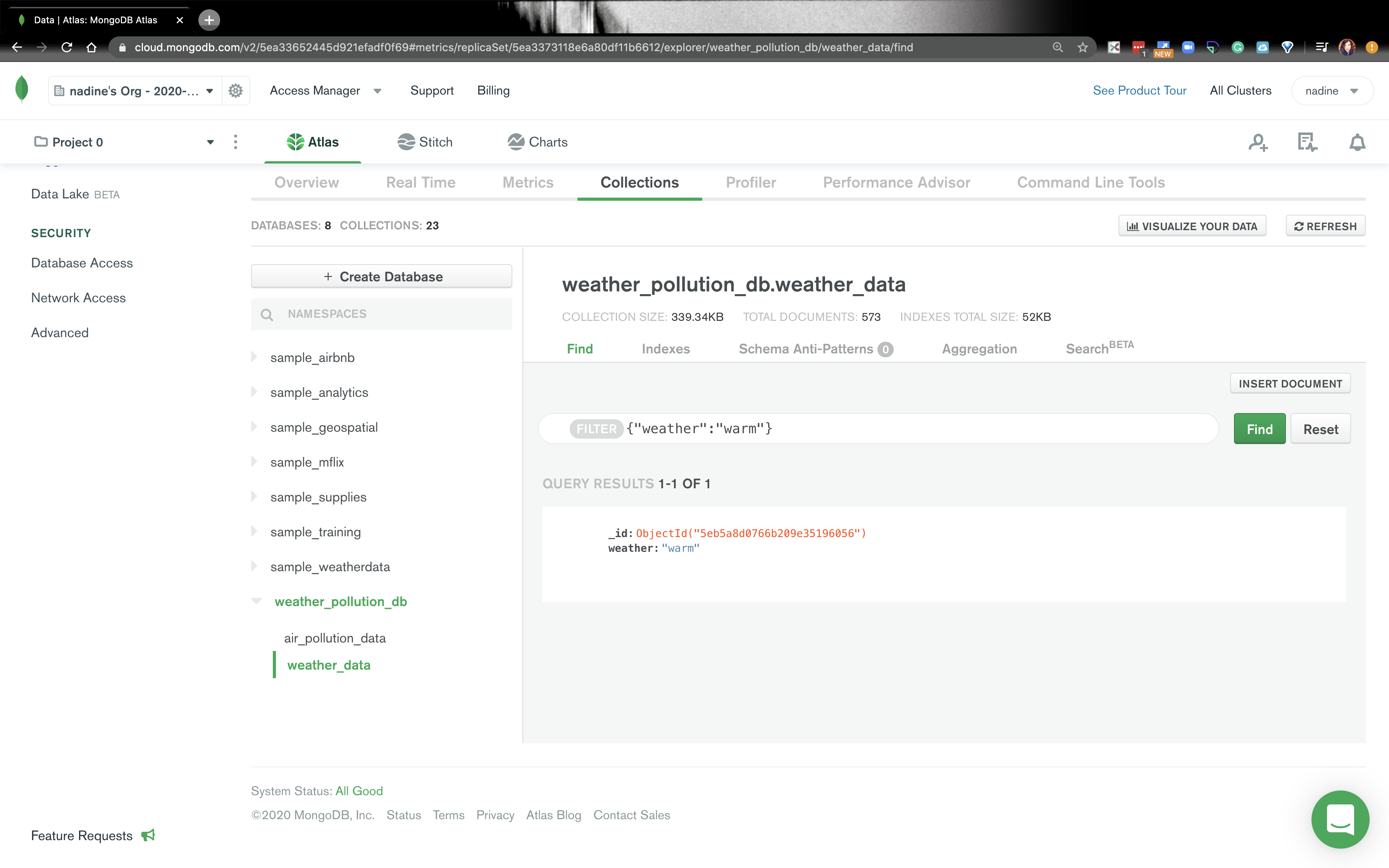

script.py. All we’re going to do is make sure our Python app is connecting to MongoDB: Embedded content: https://gist.github.com/nfarah86/4d8e87ff6e70e1da1c017e80b8daeef2 - Under Clusters, click on the collections button. Go to

weather_pollution_dband click onweather_data. You should see this:

-

Now that we know we can insert data into MongoDB, let’s go ahead and create a ClimaCell developer account and get an API KEY.

- Go ahead and put your API_KEY in the

.envfile: Embedded content: https://gist.github.com/nfarah86/118155308a4adae8a5ca037ffd99e7c4

- Go ahead and put your API_KEY in the

-

In

settings.pygo ahead and add this:CLIMACELL_API_KEY = os.environ.get('CLIMACELL_API_KEY')

- I chose ClimaCell because they offer realtime data for weather and air pollution. We’re going to work with this api. They have different parameters that can be added to the request. You can explore those here.

-

In our project folder go ahead and pip install a few libraries:

$ pip install requests$ pip install timeloop

-

In script.py go ahead modify the packages we’re going to use: Embedded content: https://gist.github.com/nfarah86/a49cbaa033239c636ef4f3bbe1dca2d0

- Timeloop a library that can run jobs at designated intervals.

-

Keep

insert_to_mongo()and add this function inscript.pyto get the weather data: Embedded content: https://gist.github.com/nfarah86/d2e3cc9236547e2fa630fd368dfee994- That

latandloncorrespond to Beijing.

- That

- Now, we’re going to add this function to get the air quality: Embedded content: https://gist.github.com/nfarah86/c598dbea0274d43215f15c9f01eca672

- We’ll modify

insert_to_mongo()to look like this: Embedded content: https://gist.github.com/nfarah86/e43f4ad2d8f7e3ca4b8d761408bc853c - To make sure we’re running continuously, write this: Embedded content: https://gist.github.com/nfarah86/959d875ad5ffcc08e16e3bf25358385a

- After, write

main()like this: Embedded content: https://gist.github.com/nfarah86/831e295b663aceb93603d9986c815b43 - Here's a gist of what your

script.pyshould look like: Embedded content: https://gist.github.com/nfarah86/85caee5b14639e238e34715094cc5436 -

Now, run:

$ python script.pyto populate MongoDB.

- While the script is running, let’s get started on Rockset.

Let’s get started on Rockset

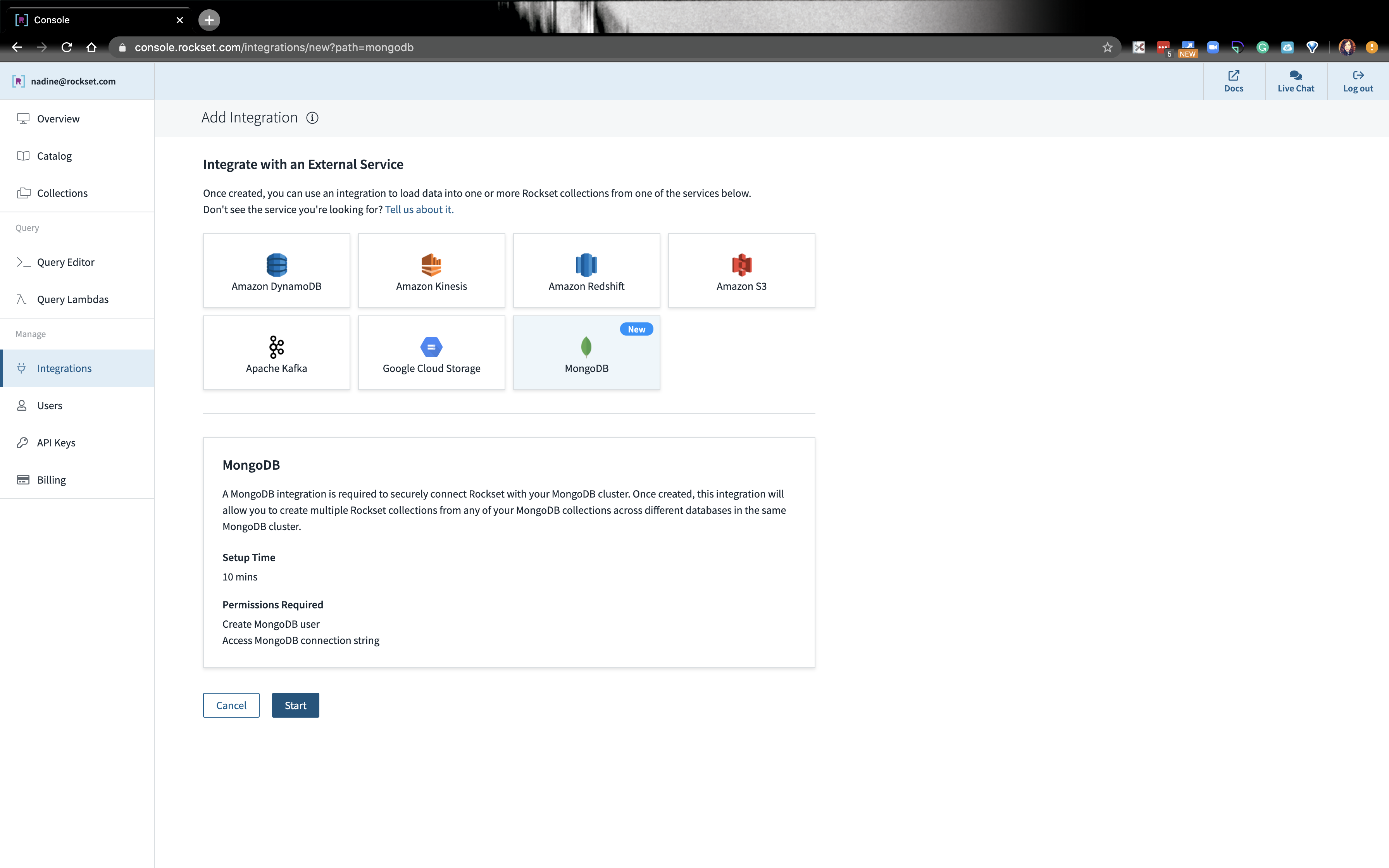

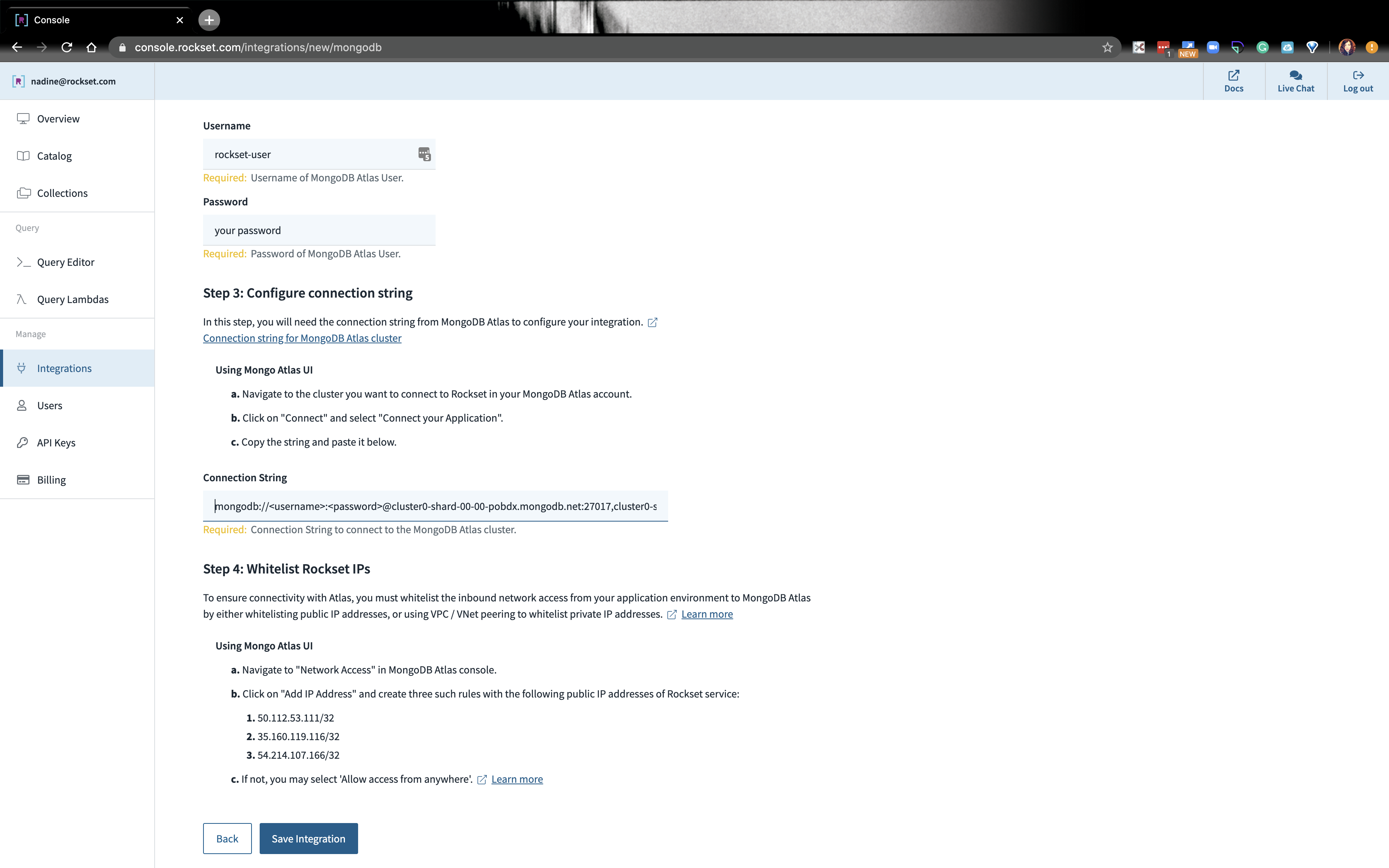

- Login to Rockset and navigate to the Integrations tab on the left. Click on Add Integration. Click on MongDB and click on start:

-

Check the first box MongoDB Atlas. We’re going to name this integration

Rockset-Mongo-Integration. For the username and password, go ahead and putrockset-userand the password you use when you created this database user. Paste the connection string in the next box and click on Save Integration.- Each integration can be used to access multiple databases and collections in the same MongoDB cluster

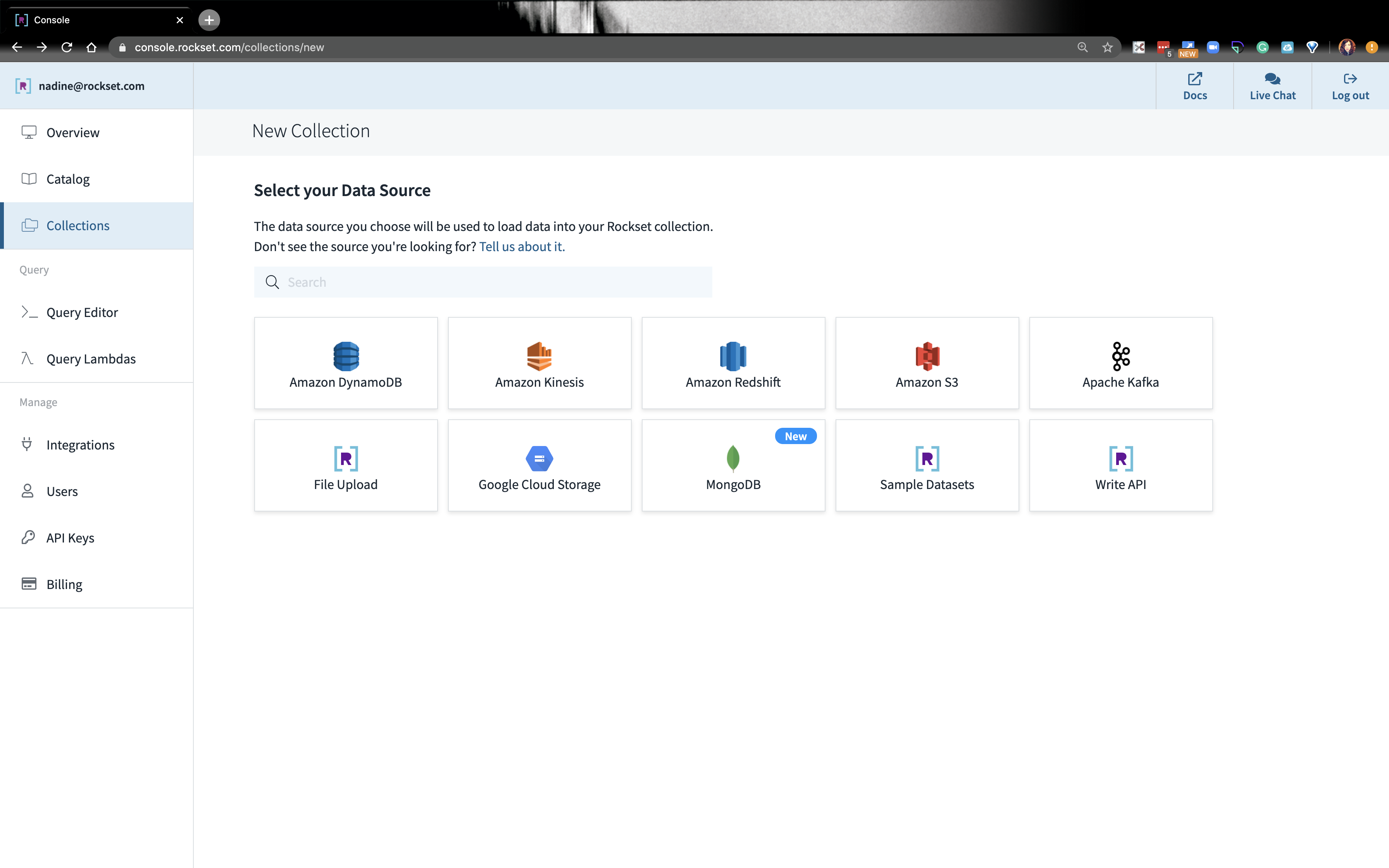

- Under Collections click on Create Collection. Select MongoDB as the source.

- Click on the

rockset-mongo-integration. -

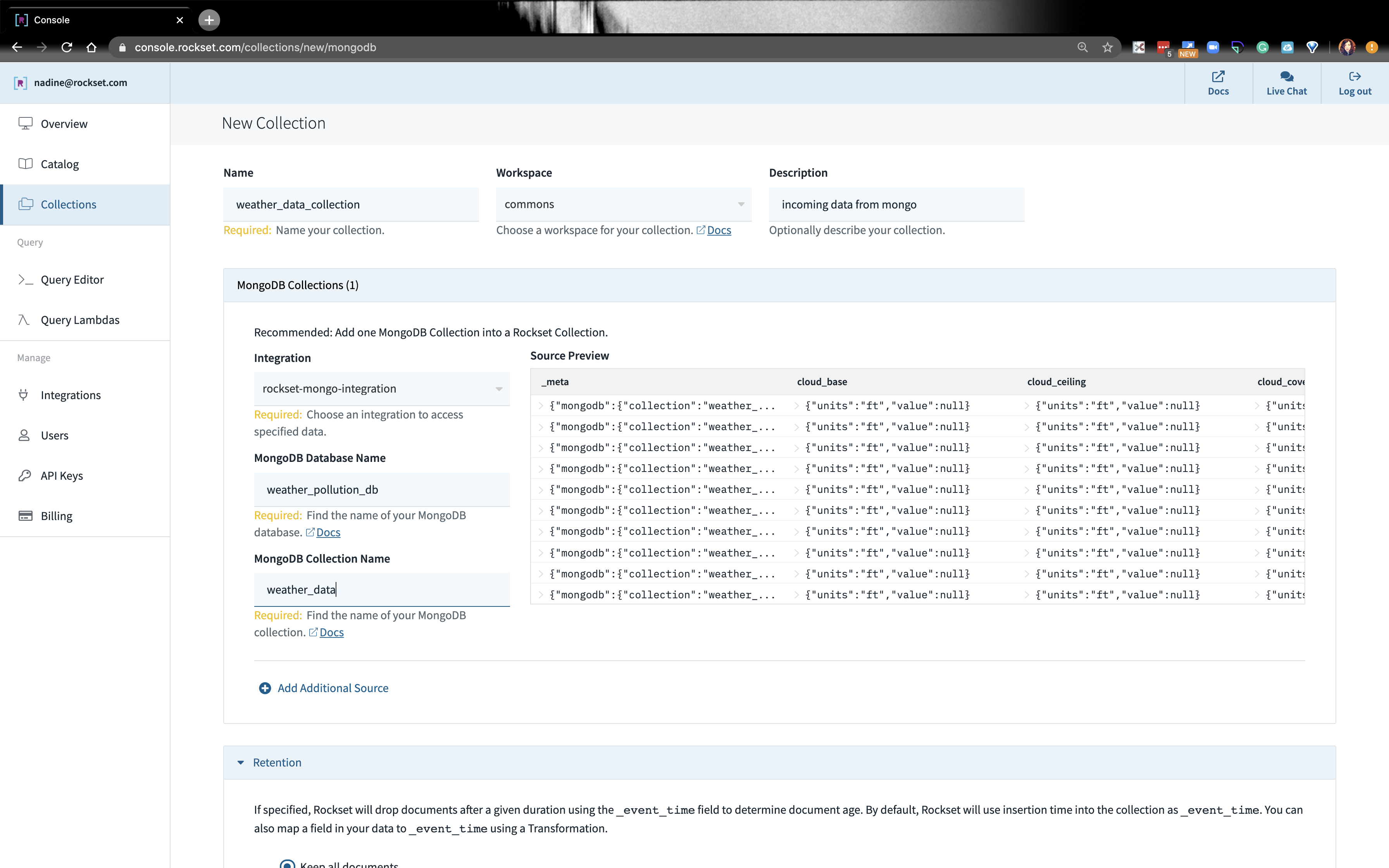

We’re going to name our new collection on Rockset

weather_data_collection. This is not tied to MongoDB. Go ahead and fill out the rest of the page with the database and collection we created on MongoDB. We’re going to add 2 collections, but let’s start with theweather_datafrom MongoDB.- You see, Rockset is able to connect to MongoDB. You can verify what data will be ingested into the Rockset collection on the right-hand side. When you’ve created a collection and running a data-driven app in real-time, Rockset will continuously sync with MongoDB so your data can have the latest information.

- Let’s click Create at the bottom.

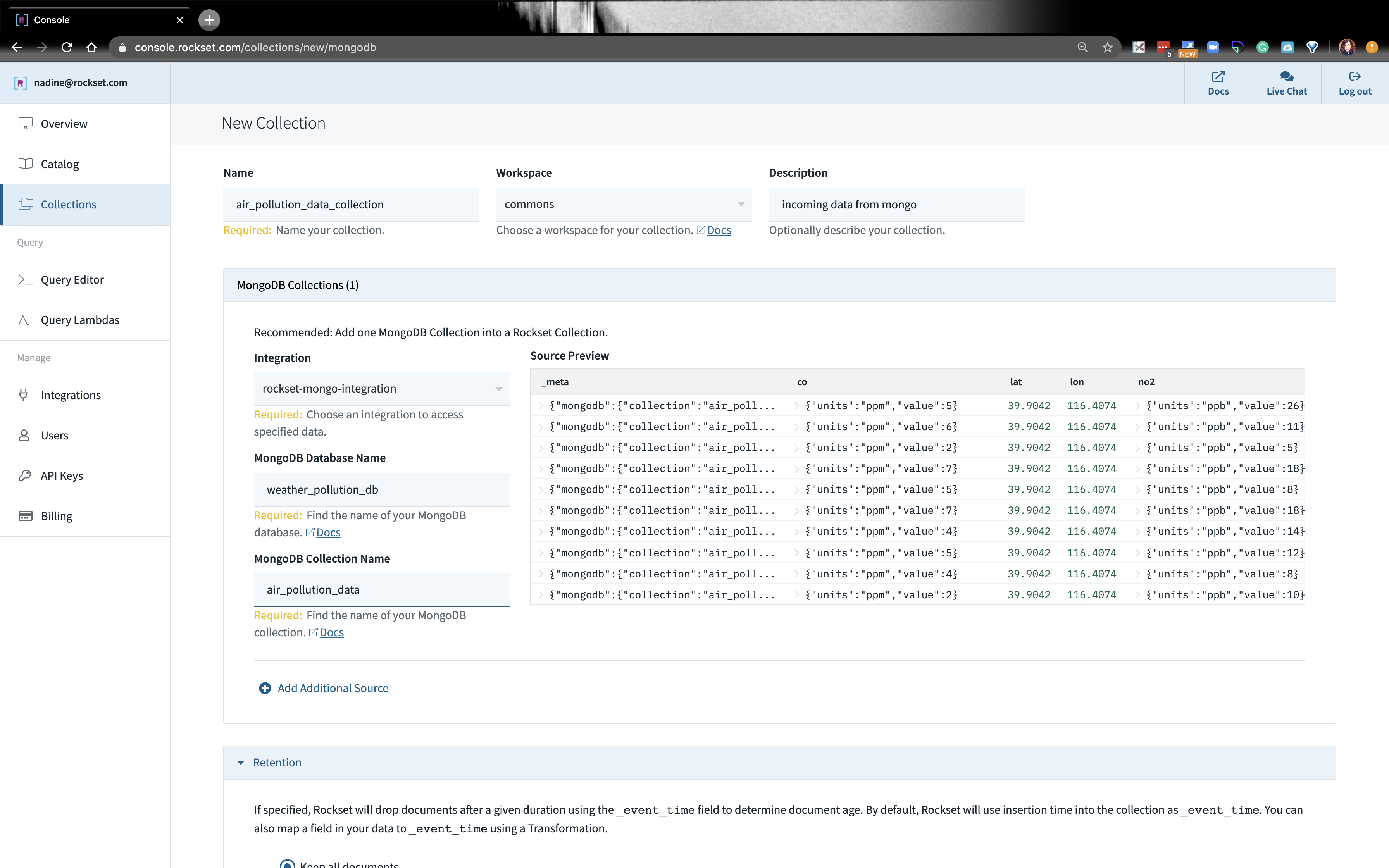

- Follow the same steps, step 3-5, to create the collection,

air_pollution_data_collection. At the end, it should look like this:

- Note permissions can be changed in the MongoDB UI at any time without the need to drop and recreate integration. Except when username and/or password or connection string changes - then the user will need to drop and recreate the Rockst integration

Construct a Query on Rockset

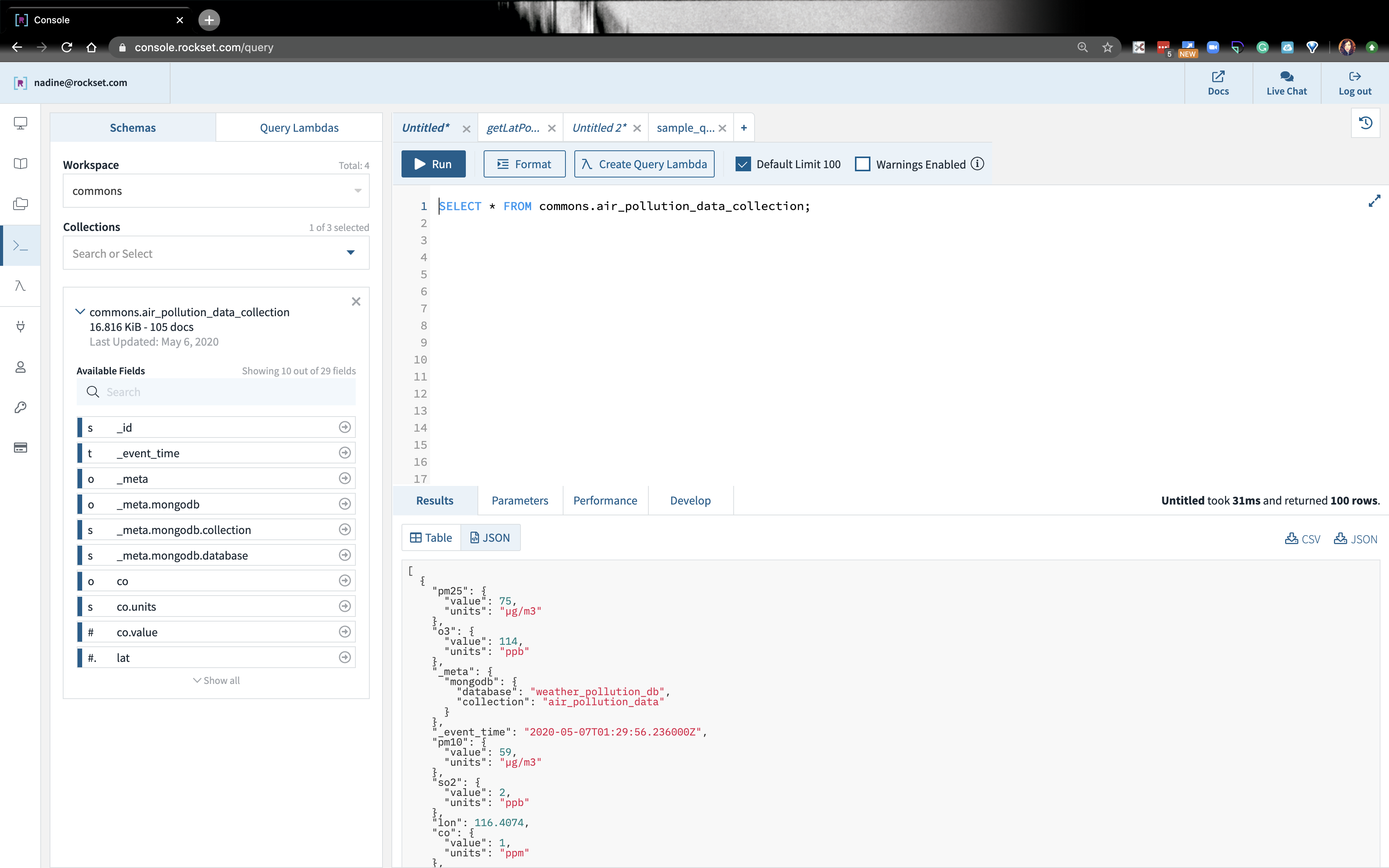

- On the left bar, let’s navigate to the Query Editor.

-

On the tab if we write:

Select * from commons.air_pollution_data_collection;we should see some output:

- Go ahead and do this for the `weather_data_collection`

-

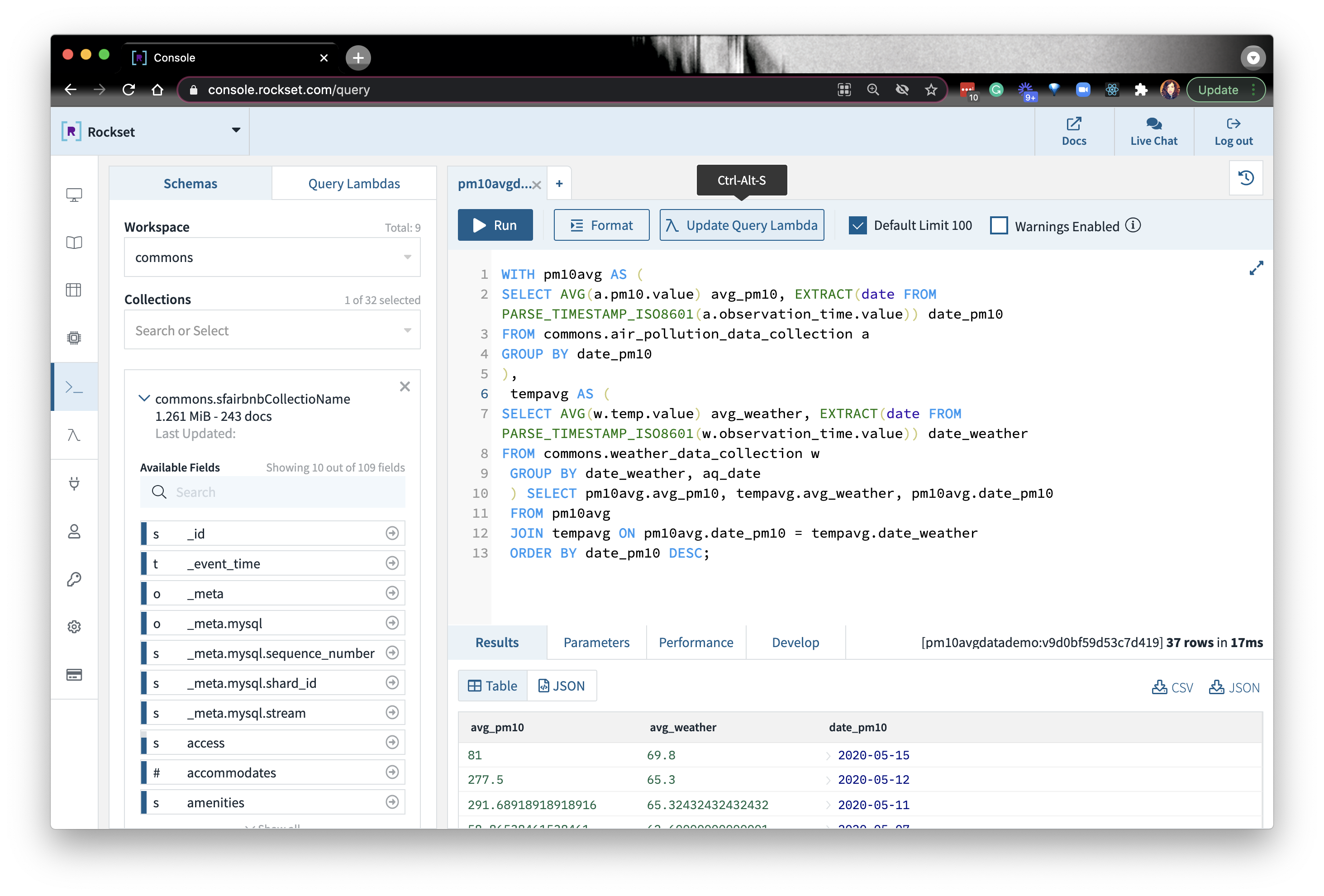

We’re going to write this sample query: Embedded content: https://gist.github.com/nfarah86/2d9c5bc316d55cfd0fcf17b4ded9141f

- We’re averaging the PM10 data and the weather temperature data. We’re going to join both of these collections based on the date. If you noticed the timestamp in the JSON, the date is in ISO 8601 format. In order to join on the days (and eliminates the minutes, hours, and seconds), we’re going to do an extraction.

- Run the query.

-

After we run this query, we want to embed it in our app, so we can notify our users when the levels fluctuate, and potentially predict, based on weather, what PM10 levels may look like the next day.

- We’re going to need a lot more data than what we have now to predict based on temperature, but this is a start!

Build an API on our query on Rockset

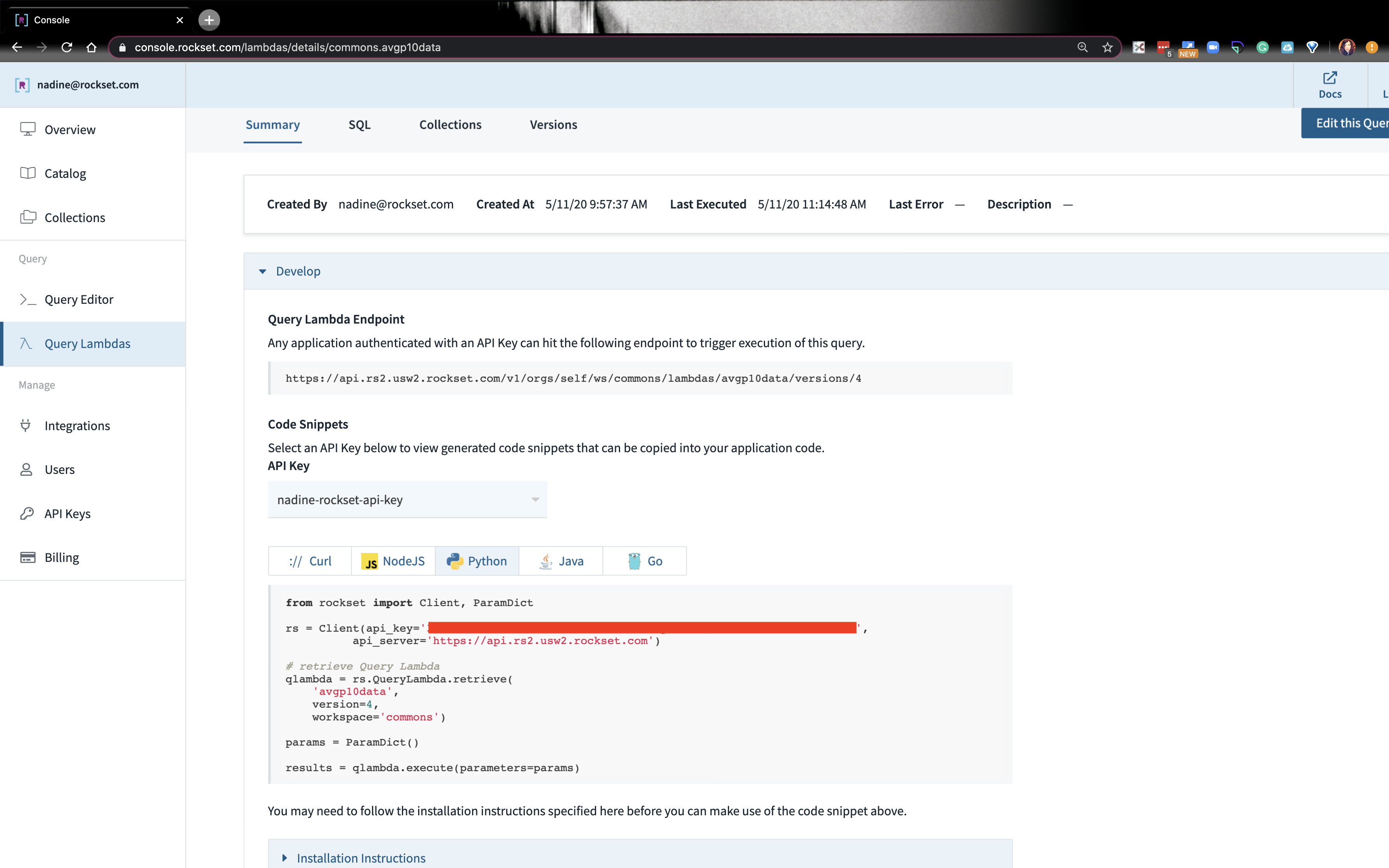

- On the top corner, click on Create Query Lambda. A Query Lambda is a way to make an API endpoint to the SQL query you write. In the Python app, we won’t have to write client-side SQL, preventing security vulnerabilities.

- Give your Query Lambda a name and description. Afterwards, you should see some code snippets on the next screen.

- Let’s navigate back on the Query Editor and write another query to get current weather in a new tab. Sometimes we may get a null field, so let’s go ahead and write this in the Query Editor: Embedded content: https://gist.github.com/nfarah86/4581c6bc09d30045ae75a5f330a8d72f

- Create another new Query Lambda.

- If we want to grab the code snippet or URL of the Query Lambdas we just created, navigate on the left side menu to Query Lambda and click on the lambda you created.

Execute APIs on our app

- Once you create a Query Lambda, you’ll see something like this:

-

There are two ways I’ll show how we can execute a lambda:

- Make an HTTP Request

- Rockset's Python client (bottom box where my API is boxed out)

-

Make an HTTP Request:

- Let’s go ahead and make an HTTP request to get the

current_weatherdata. Here are the steps to do this: - Go ahead and set your

ROCKSET_API_KEYin your.env. Import it insettings.pylike we did before. - On Rockset, navigate to the Query Lambda that has the

current_weatherquery. Copy the query lambda endpoint. - We’re going to write this function that will make an HTTP request to that endpoint:Embedded content: https://gist.github.com/nfarah86/3a0ef9b1524532247e3ea7c504489d23

- Let’s go ahead and make an HTTP request to get the

-

Use the Rockset Client to send a request:

$ pip install rockset- On Rockset, navigate to the Query Lambda that has the average PM10 query.

- Copy the Python code snippet at the bottom.

- In our app, go ahead and add this to the top of

script.pyand write this function: Embedded content: https://gist.github.com/nfarah86/cef26bc4d631e95ffbfe4ddbbfbc7668

- Then, we’re going to display the result:Embedded content: https://gist.github.com/nfarah86/a0d1e15319bc117ef55ce35187fb6480

- We’re going to change

sample_job_every_120s()to addmake_requestsso we can execute the Query Lambdas and display the data:Embedded content: https://gist.github.com/nfarah86/0a54e082c9026aa5c9940b24836d9c65 - Write make_requests() so it looks like this:Embedded content: https://gist.github.com/nfarah86/dea06329b25887bb58a0ef74c4a12fb0

- After you run the script, you should see this:Embedded content: https://gist.github.com/nfarah86/32b35bd3269fbd1701dc57252fa783e4

- That’s it! This wraps it up for the MongoDB-Rockset Python App!

Project Code

You can find the full project, including the SQL statements here. If you have questions about the project, Rockset, or MongoDB, you can reach out in our community.

Resources:

- https://www.airnow.gov/aqi/aqi-basics/

- https://www.airnow.gov/aqi/aqi-basics/using-air-quality-index/

- Find the project code

Other MongoDB resources: