Understanding DynamoDB Secondary Indexes

February 22, 2024

Introduction

Indexes are a crucial part of proper data modeling for all databases, and DynamoDB is no exception. DynamoDB's secondary indexes are a powerful tool for enabling new access patterns for your data.

In this post, we'll look at DynamoDB secondary indexes. First, we'll start with some conceptual points about how to think about DynamoDB and the problems that secondary indexes solve. Then, we'll look at some practical tips for using secondary indexes effectively. Finally, we'll close with some thoughts on when you should use secondary indexes and when you should look for other solutions.

Let's get started.

What is DynamoDB, and what are DynamoDB secondary indexes?

Before we get into use cases and best practices for secondary indexes, we should first understand what DynamoDB secondary indexes are. And to do that, we should understand a bit about how DynamoDB works.

This post assumes some basic familiarity with DynamoDB. We'll cover the points you need to understand secondary indexes, but if you're new to DynamoDB, you may want to start with a more basic introduction.

The bare minimum you need to know about DynamoDB

DynamoDB is a unique database. It's designed for OLTP workloads, meaning it's great for handling a high volume of small operations -- think of things like adding an item to a shopping cart, liking a video, or adding a comment on Reddit. In that way, it serves many of the same applications as other databases you might have used, like MySQL, PostgreSQL, MongoDB, or Cassandra.

DynamoDB's key promise is its guarantee of consistent performance at any scale. Whether your table has 1 megabyte of data or 1 petabyte of data, DynamoDB wants to have the same latency for your OLTP-like requests. This is a big deal -- many databases will see reduced performance as you increase the amount of data or the number of concurrent requests. However, providing these guarantees requires some tradeoffs, and DynamoDB has some unique characteristics that you need to understand to use it effectively.

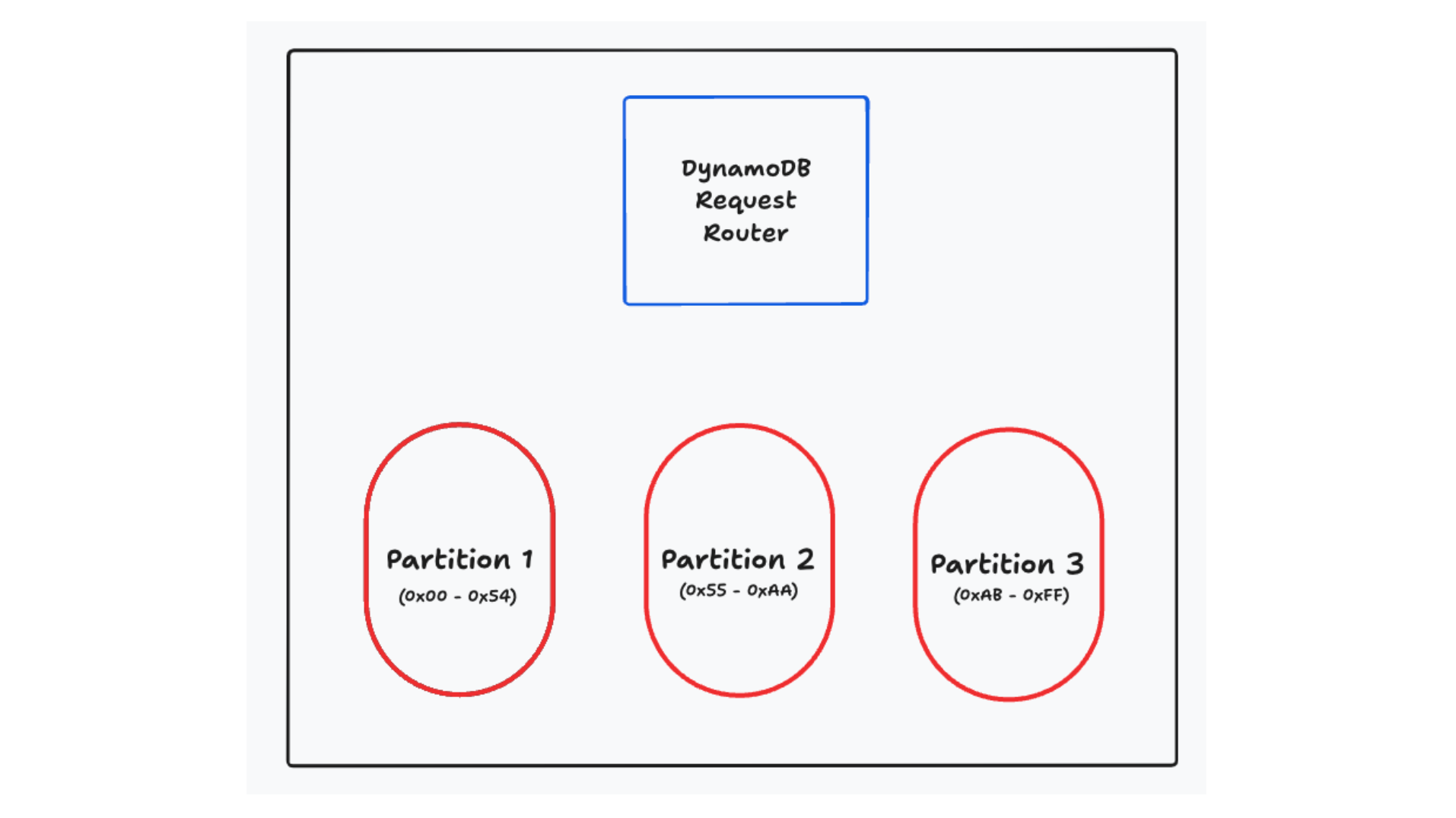

First, DynamoDB horizontally scales your database by spreading your data across multiple partitions under the hood. These partitions are not visible to you as a user, but they are at the core of how DynamoDB works. You will specify a primary key for your table (either a single element, called a 'partition key', or a combination of a partition key and a sort key), and DynamoDB will use that primary key to determine which partition your data lives on. Any request you make will go through a request router that will determine which partition should handle the request. These partitions are small -- generally 10GB or less -- so they can be moved, split, replicated, and otherwise managed independently.

Horizontal scalability via sharding is interesting but is by no means unique to DynamoDB. Many other databases -- both relational and non-relational -- use sharding to horizontally scale. What is unique to DynamoDB is how strictly it ties data access to your primary key. Rather than relying on a query planner to translate your request into a series of lookups, DynamoDB requires you to address your data by its primary key. You are essentially getting a directly addressable index for your data.

The API for DynamoDB reflects this. There is a set of operations on individual items (GetItem, PutItem, UpdateItem, DeleteItem) that let you read, write, update, and delete a single item identified by its full primary key. Additionally, there is a Query operation that allows you to retrieve multiple items with the same partition key. If you have a table with a composite primary key, items with the same partition key will be grouped together on the same partition. They will be ordered according to the sort key, allowing you to handle patterns like "Fetch the most recent Orders for a User" or "Fetch the last 10 Sensor Readings for an IoT Device".
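To make the Query side concrete, here is a minimal Python sketch of the request for "Fetch the last 10 Sensor Readings for an IoT Device". The table name `SensorReadings` and the `DeviceId` attribute are hypothetical, and the function only builds the parameter dict for boto3's low-level `query` call, so it runs without AWS access.

```python
def latest_readings_query(device_id: str, limit: int = 10) -> dict:
    """Build Query parameters for the newest `limit` readings of one device.

    Assumes a hypothetical SensorReadings table whose partition key is
    DeviceId and whose sort key is a sortable timestamp string.
    """
    return {
        "TableName": "SensorReadings",
        # Query requires an exact match on the partition key.
        "KeyConditionExpression": "#device = :device",
        "ExpressionAttributeNames": {"#device": "DeviceId"},
        "ExpressionAttributeValues": {":device": {"S": device_id}},
        # Walk the sort key in descending order, so the newest readings come first.
        "ScanIndexForward": False,
        # Stop after `limit` items.
        "Limit": limit,
    }


# Usage (requires AWS credentials and an existing table):
#   import boto3
#   response = boto3.client("dynamodb").query(**latest_readings_query("sensor-42"))
#   readings = response["Items"]
```

Because the items in the item collection are already stored in sort-key order, "most recent N" is just a matter of reading that collection backwards and stopping early.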

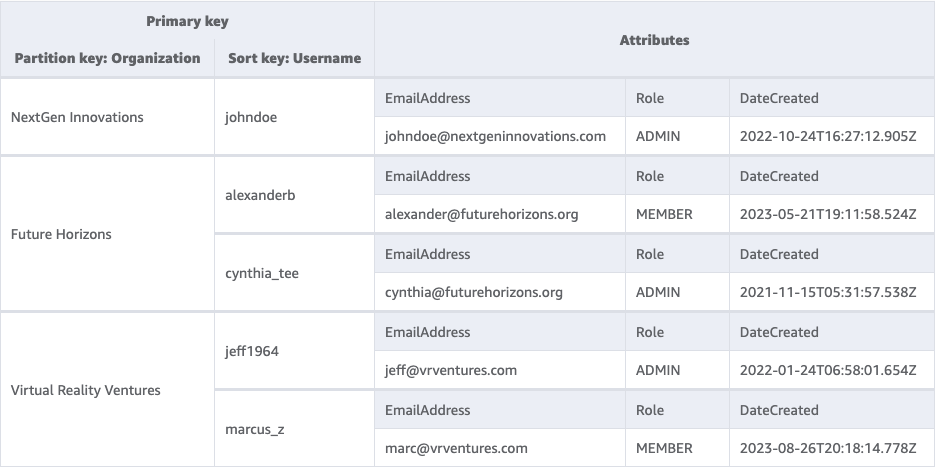

For example, let's imagine a SaaS application that has a table of Users. Each User belongs to a single Organization. We might have a table that looks as follows:

We're using a composite primary key with a partition key of 'Organization' and a sort key of 'Username'. This lets us fetch or update an individual User by providing their Organization and Username. We can also fetch all of the Users for a single Organization by providing just the Organization to a Query operation.
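Here is a minimal sketch of those two operations as boto3 request parameters, assuming the table is named `Users` (the table name and sample values are illustrative):

```python
TABLE = "Users"  # hypothetical table name


def get_user_request(org: str, username: str) -> dict:
    """GetItem parameters: the full primary key (partition + sort key) is required."""
    return {
        "TableName": TABLE,
        "Key": {
            "Organization": {"S": org},
            "Username": {"S": username},
        },
    }


def list_org_users_request(org: str) -> dict:
    """Query parameters: only the partition key is needed.

    Items in the Organization's item collection come back ordered by Username.
    """
    return {
        "TableName": TABLE,
        "KeyConditionExpression": "#org = :org",
        "ExpressionAttributeNames": {"#org": "Organization"},
        "ExpressionAttributeValues": {":org": {"S": org}},
    }


# Usage (requires AWS credentials):
#   import boto3
#   client = boto3.client("dynamodb")
#   user = client.get_item(**get_user_request("Acme", "alexdebrie")).get("Item")
#   users = client.query(**list_org_users_request("Acme"))["Items"]
```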

What are secondary indexes, and how do they work?

With some basics in mind, let's now look at secondary indexes. The best way to understand the need for secondary indexes is to understand the problem they solve. We've seen how DynamoDB partitions your data according to your primary key and how it pushes you to use the primary key to access your data. That's all well and good for some access patterns, but what if you need to access your data in a different way?

In our example above, we had a table of users that we accessed by their organization and username. However, we may also need to fetch a single user by their email address. This pattern doesn't fit with the primary key access pattern that DynamoDB pushes us towards. Because our table is partitioned by Organization rather than by Email, there's no efficient way to access our data the way we want. We could do a full table scan, but that's slow and inefficient. We could duplicate our data into a separate table with a different primary key, but that adds complexity.

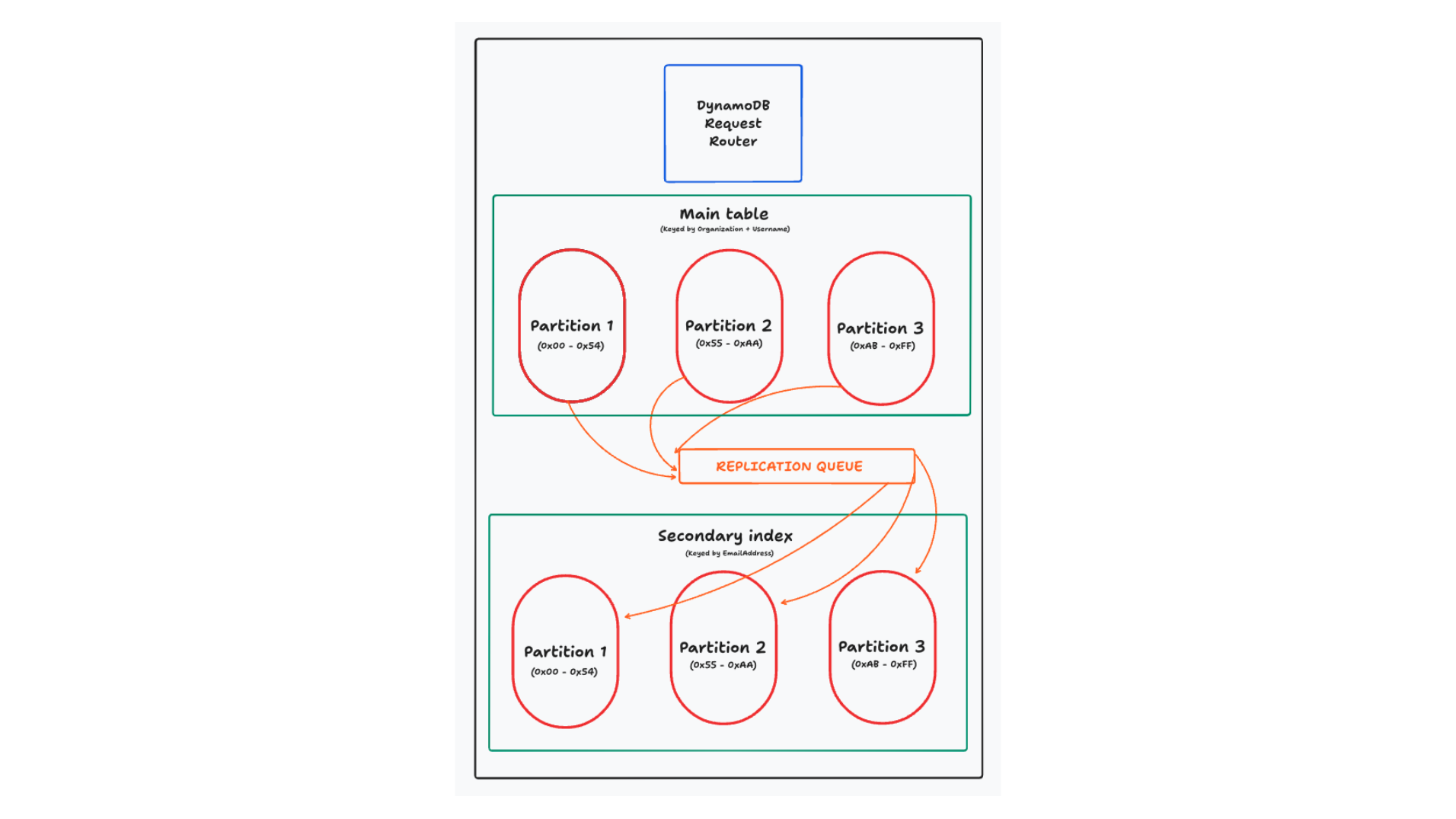

This is where secondary indexes come in. A secondary index is basically a fully managed copy of your data with a different primary key. You will specify a secondary index on your table by declaring the primary key for the index. As writes come into your table, DynamoDB will automatically replicate the data to your secondary index.
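Declaring the index happens when you create the table (or later with UpdateTable). Here is a sketch of `create_table` parameters for our Users table with a global secondary index keyed on Email, assuming on-demand billing and a hypothetical index name, `EmailIndex`:

```python
def users_table_definition() -> dict:
    """create_table parameters for Users plus a GSI on Email (names are illustrative)."""
    return {
        "TableName": "Users",
        # On-demand billing, so no ProvisionedThroughput is needed for table or index.
        "BillingMode": "PAY_PER_REQUEST",
        # Only attributes used in a table or index key are declared here.
        "AttributeDefinitions": [
            {"AttributeName": "Organization", "AttributeType": "S"},
            {"AttributeName": "Username", "AttributeType": "S"},
            {"AttributeName": "Email", "AttributeType": "S"},
        ],
        "KeySchema": [
            {"AttributeName": "Organization", "KeyType": "HASH"},
            {"AttributeName": "Username", "KeyType": "RANGE"},
        ],
        "GlobalSecondaryIndexes": [
            {
                "IndexName": "EmailIndex",
                # The index's own primary key: the attribute we want to look up by.
                "KeySchema": [{"AttributeName": "Email", "KeyType": "HASH"}],
                # Copy every attribute of the item into the index.
                "Projection": {"ProjectionType": "ALL"},
            }
        ],
    }


# Usage (requires AWS credentials):
#   import boto3
#   boto3.client("dynamodb").create_table(**users_table_definition())
```

After this, writes still go to the `Users` table only; DynamoDB copies each item into `EmailIndex` on its own.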

Note: Everything in this section applies to global secondary indexes. DynamoDB also provides local secondary indexes, which are a bit different. In almost all cases, you will want a global secondary index. For more details on the differences, check out this article on choosing a global or local secondary index.

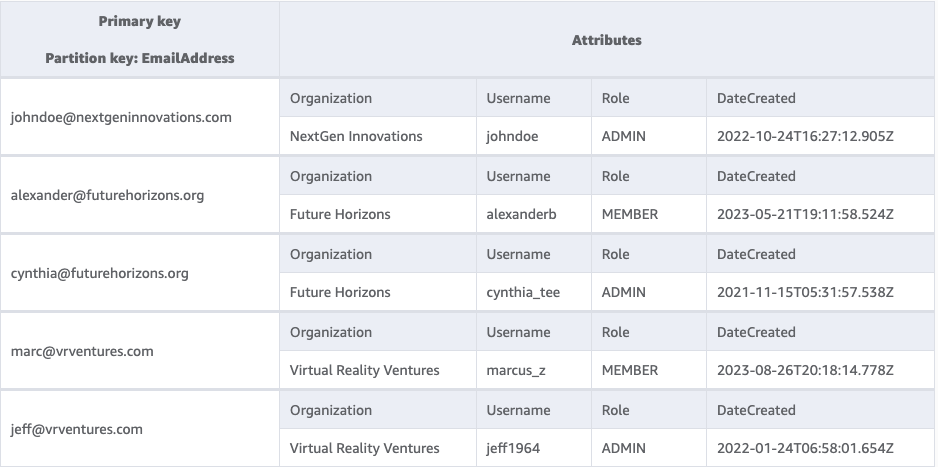

In this case, we'll add a secondary index to our table with a partition key of "Email". The secondary index will look as follows:

Notice that this is the same data; it has just been reorganized under a different primary key. Now, we can efficiently look up a user by their email address.
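Reading from the index is a Query with `IndexName` set. GetItem can't target an index, and a global secondary index doesn't enforce uniqueness on its key, so the result is a list that could contain more than one User. Reads from a global secondary index are also eventually consistent. A sketch, reusing the hypothetical `EmailIndex` name:

```python
def find_user_by_email_request(email: str) -> dict:
    """Query parameters against the (hypothetical) EmailIndex GSI."""
    return {
        "TableName": "Users",
        "IndexName": "EmailIndex",
        "KeyConditionExpression": "#email = :email",
        "ExpressionAttributeNames": {"#email": "Email"},
        "ExpressionAttributeValues": {":email": {"S": email}},
        # No ConsistentRead=True here: GSIs only support eventually consistent reads.
    }


# Usage (requires AWS credentials):
#   import boto3
#   items = boto3.client("dynamodb").query(
#       **find_user_by_email_request("alice@example.com"))["Items"]
```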

In some ways, this is very similar to an index in other databases. Both provide a data structure that is optimized for lookups on a particular attribute. But DynamoDB's secondary indexes are different in a few key ways.

First, and most importantly, DynamoDB's indexes live on entirely different partitions than your main table. DynamoDB wants every lookup to be efficient and predictable, and it wants to provide linear horizontal scaling. To do this, it needs to reshard your data by the attributes you'll use to query it.

Other distributed databases generally don't reshard your data for a secondary index. Instead, each shard usually maintains a local index over the data it holds. However, if a query doesn't include the shard key, you lose some of the benefits of horizontal scaling: the query must perform a scatter-gather operation across all shards to find the data you're looking for.
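A toy, in-memory Python simulation of the difference (not how DynamoDB or any particular database is implemented): data is sharded by organization, and we look up a user by email either through per-shard local indexes or through an index that has been resharded by email.

```python
from collections import defaultdict

NUM_SHARDS = 4


def shard_for(key: str) -> int:
    """Toy, deterministic hash partitioning (real systems use a stronger hash)."""
    return sum(key.encode("utf-8")) % NUM_SHARDS


users = [
    {"org": "acme", "username": "alice", "email": "alice@example.com"},
    {"org": "acme", "username": "bob", "email": "bob@example.com"},
    {"org": "globex", "username": "carol", "email": "carol@example.com"},
    {"org": "initech", "username": "dave", "email": "dave@example.com"},
]

# Local indexes: rows are sharded by org, and each shard indexes only its own rows.
local_indexes = defaultdict(dict)
for u in users:
    local_indexes[shard_for(u["org"])][u["email"]] = u

# Resharded (global) index: entries are placed by hashing the index key itself.
global_index = defaultdict(dict)
for u in users:
    global_index[shard_for(u["email"])][u["email"]] = u


def lookup_with_local_indexes(email: str):
    """Scatter-gather: the email says nothing about the org, so ask every shard."""
    matches = [local_indexes[s][email] for s in range(NUM_SHARDS) if email in local_indexes[s]]
    return (matches[0] if matches else None), NUM_SHARDS


def lookup_with_global_index(email: str):
    """Route directly to the one shard that owns this email value."""
    return global_index[shard_for(email)].get(email), 1
```

Both functions return the user plus the number of shards contacted. The local-index lookup always touches every shard, so its cost grows with the cluster, while the resharded index always touches one.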

A second way that DynamoDB's secondary indexes are different is that they (often) copy the entire item to the secondary index. In a relational database, an index entry will often contain only a pointer to the primary key of the indexed row. After locating a relevant record in the index, the database then needs to go fetch the full row. Because DynamoDB's secondary indexes live on different nodes than the main table, DynamoDB avoids that extra network hop back to the original item. Instead, you'll copy as much data as you need into the secondary index to serve your read.

Secondary indexes in DynamoDB are powerful, but they have some limitations. First off, they are read-only -- you can't write directly to a secondary index. Rather, you will write to your main table, and DynamoDB will handle the replication to your secondary index. Second, you are charged for the write operations to your secondary indexes. Thus, adding a secondary index to your table will often double the total write costs for your table.

Tips for using secondary indexes

Now that we understand what secondary indexes are and how they work, let's talk about how to use them effectively. Secondary indexes are a powerful tool, but they can be misused. Here are some tips to help you get the most out of them.

Try to have read-only patterns on secondary indexes

The first tip seems obvious -- secondary indexes can only be used for reads, so you should aim to have read-only patterns on your secondary indexes! And yet, I see this mistake all the time. Developers will first read from a secondary index, then write to the main table. This results in extra cost and extra latency, and you can often avoid it with some upfront planning.

If you've read anything about DynamoDB data modeling, you probably know that you should think of your access patterns first. It's not like a relational database where you first design normalized tables and then write queries to join them together. In DynamoDB, you should think about the actions your application will take, and then design your tables and indexes to support those actions.

When designing my table, I like to start with the write-based access patterns first. With my writes, I'm often maintaining some type of constraint -- uniqueness on a username or a maximum number of members in a group. I want to design my table in a way that makes this straightforward, ideally without using DynamoDB Transactions or using a read-modify-write pattern that could be subject to race conditions.

As you work through these, you'll generally find that there's a 'primary' way to identify your item that matches up with your write patterns. This will end up being your primary key. Then, adding in additional, secondary read patterns is easy with secondary indexes.
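As an illustration of a write-side constraint handled by the primary key alone, a username can be kept unique within an Organization with a conditional PutItem: no transaction and no prior read. A sketch against the hypothetical `Users` table:

```python
def create_user_request(org: str, username: str, email: str) -> dict:
    """PutItem parameters that only succeed if (Organization, Username) is unused."""
    return {
        "TableName": "Users",
        "Item": {
            "Organization": {"S": org},
            "Username": {"S": username},
            "Email": {"S": email},
        },
        # The condition is checked against any existing item with the same
        # primary key; if one exists, Username exists and the write is rejected.
        "ConditionExpression": "attribute_not_exists(#username)",
        "ExpressionAttributeNames": {"#username": "Username"},
    }


# Usage (requires AWS credentials):
#   import boto3
#   client = boto3.client("dynamodb")
#   try:
#       client.put_item(**create_user_request("Acme", "alice", "alice@example.com"))
#   except client.exceptions.ConditionalCheckFailedException:
#       pass  # the username is already taken in this Organization
```

The check and the write happen atomically on a single item, which is why it pays to make the constrained value part of the primary key.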

In our Users example before, every User request will likely include the Organization and the Username. This will allow me to look up the individual User record as well as authorize specific actions by the User. The email address lookup may be for less prominent access patterns, like a 'forgot password' flow or a 'search for a user' flow. These are read-only patterns, and they fit well with a secondary index.

Use secondary indexes when your keys are mutable

A second tip for using secondary indexes is to use them for mutable values in your access patterns. Let's first understand the reasoning behind it, and then look at situations where it applies.

DynamoDB allows you to update an existing item with the UpdateItem operation. However, you cannot change the primary key of an item in an update. The primary key is the unique identifier for an item, and changing the primary key is effectively creating a new item. If you want to change the primary key of an existing item, you'll need to delete the old item and create a new one. This two-step process is slower and more costly. Often you'll need to read the original item first, then use a transaction to delete the original item and create the new one in the same request.
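For comparison, here is a sketch of that delete-and-recreate path when renaming a User in the hypothetical `Users` table. `old_item` is assumed to come from a prior GetItem (the extra read), and the two writes go through `TransactWriteItems` so they succeed or fail together. Note that transactional writes consume twice the write capacity of standard writes.

```python
TABLE = "Users"  # hypothetical table name


def rename_user_transaction(old_item: dict, new_username: str) -> dict:
    """TransactWriteItems parameters that move a User to a new primary key.

    old_item: the full item from an earlier GetItem, in low-level
    attribute-value form, e.g. {"Username": {"S": "alice"}, ...}.
    """
    old_key = {
        "Organization": old_item["Organization"],
        "Username": old_item["Username"],
    }
    new_item = dict(old_item)  # shallow copy; the caller's dict stays untouched
    new_item["Username"] = {"S": new_username}
    names = {"#username": "Username"}
    return {
        "TransactItems": [
            {
                "Delete": {
                    "TableName": TABLE,
                    "Key": old_key,
                    # Fail if the old item disappeared since we read it.
                    "ConditionExpression": "attribute_exists(#username)",
                    "ExpressionAttributeNames": names,
                }
            },
            {
                "Put": {
                    "TableName": TABLE,
                    "Item": new_item,
                    # Fail if the new username is already taken.
                    "ConditionExpression": "attribute_not_exists(#username)",
                    "ExpressionAttributeNames": names,
                }
            },
        ]
    }


# Usage (requires AWS credentials):
#   import boto3
#   client = boto3.client("dynamodb")
#   old = client.get_item(TableName=TABLE, Key={...})["Item"]
#   client.transact_write_items(**rename_user_transaction(old, "alice2"))
```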

On the other hand, if you have this mutable value in the primary key of a secondary index, then DynamoDB will handle this delete + create process for you during replication. You can issue a simple UpdateItem request to change the value, and DynamoDB will handle the rest.

I see this pattern come up in two main situations. The first, and most common, is when you have a mutable attribute that you want to sort on. The canonical examples here are a leaderboard for a game, where people are continually racking up points, or a continually updating list of items where you want to display the most recently updated items first. Think of something like Google Drive, where you can sort your files by 'last modified'.
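A sketch of the 'last modified' case, assuming a hypothetical `Files` table keyed by `FileId` with a global secondary index `OwnerRecentIndex` (partition key `OwnerId`, sort key `LastModified`). Touching a file is a plain UpdateItem on the base table, and DynamoDB moves the index entry to its new sort position:

```python
from datetime import datetime, timezone


def touch_file_request(file_id: str, modified_at: datetime) -> dict:
    """UpdateItem on the base table; LastModified is the GSI sort key.

    Pass a timezone-aware datetime; it is normalized to UTC.
    """
    # Fixed-width UTC ISO-8601 strings sort lexicographically in time order.
    stamp = modified_at.astimezone(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ")
    return {
        "TableName": "Files",
        "Key": {"FileId": {"S": file_id}},
        "UpdateExpression": "SET #lm = :lm",
        "ExpressionAttributeNames": {"#lm": "LastModified"},
        "ExpressionAttributeValues": {":lm": {"S": stamp}},
    }


def recent_files_query(owner_id: str, limit: int = 20) -> dict:
    """Query the GSI for an owner's most recently modified files."""
    return {
        "TableName": "Files",
        "IndexName": "OwnerRecentIndex",
        "KeyConditionExpression": "#owner = :owner",
        "ExpressionAttributeNames": {"#owner": "OwnerId"},
        "ExpressionAttributeValues": {":owner": {"S": owner_id}},
        "ScanIndexForward": False,  # newest LastModified first
        "Limit": limit,
    }
```

The write side never needs to know the old timestamp, which is exactly what makes it cheaper than the delete-and-recreate path.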

A second pattern where this comes up is when you have a mutable attribute that you want to filter on. Here, you can think of an ecommerce store with a history of orders for a user. You may want to allow the user to filter their orders by status -- show me all my orders that are 'shipped' or 'delivered'. You can build this into your partition key or the beginning of your sort key to allow exact-match filtering. As the item changes status, you can update the status attribute and lean on DynamoDB to group the items correctly in your secondary index.
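One way to sketch the status filter, assuming a hypothetical `Orders` table keyed by `UserId` and `OrderId` and a global secondary index `UserStatusIndex` whose sort key `StatusDate` is built as `STATUS#date`. The update writes the status and the derived index key together:

```python
def status_sort_key(status: str, order_date: str) -> str:
    """Index sort key: status first for exact-match prefixes, then a sortable date."""
    return f"{status}#{order_date}"


def set_status_request(user_id: str, order_id: str, status: str, order_date: str) -> dict:
    """UpdateItem that changes an order's status and its GSI sort key in one request."""
    return {
        "TableName": "Orders",
        "Key": {"UserId": {"S": user_id}, "OrderId": {"S": order_id}},
        "UpdateExpression": "SET #status = :status, #sk = :sk",
        "ExpressionAttributeNames": {"#status": "Status", "#sk": "StatusDate"},
        "ExpressionAttributeValues": {
            ":status": {"S": status},
            ":sk": {"S": status_sort_key(status, order_date)},
        },
    }


def orders_by_status_query(user_id: str, status: str) -> dict:
    """Query the GSI for one user's orders with an exact status."""
    return {
        "TableName": "Orders",
        "IndexName": "UserStatusIndex",
        "KeyConditionExpression": "#user = :user AND begins_with(#sk, :prefix)",
        "ExpressionAttributeNames": {"#user": "UserId", "#sk": "StatusDate"},
        "ExpressionAttributeValues": {
            ":user": {"S": user_id},
            ":prefix": {"S": f"{status}#"},
        },
        "ScanIndexForward": False,  # newest orders with this status first
    }
```

The trailing `#` in the prefix keeps a filter for `SHIPPED` from also matching a status such as `SHIPPED_PARTIAL`.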

In both of these situations, moving this mutable attribute to your secondary index will save you time and money. You'll save time by avoiding the read-modify-write pattern, and you'll save money by avoiding the extra write costs of the transaction.

Additionally, note that this pattern fits well with the previous tip. It's unlikely you will identify an item for writing based on the mutable attribute like their previous score, their previous status, or the last time they were updated. Rather, you'll update by a more persistent value, like the user's ID, the order ID, or the file's ID. Then, you'll use the secondary index to sort and filter based on the mutable attribute.

Avoid the 'fat' partition

We saw above that DynamoDB divides your data into partitions based on the primary key. DynamoDB aims to keep these partitions small -- 10GB or less -- and you should aim to spread requests across your partitions to get the benefits of DynamoDB's scalability.

This generally means you should use a high-cardinality value in your partition key. Think of something like a username, an order ID, or a sensor ID. There are large numbers of values for these attributes, and DynamoDB can spread the traffic across your partitions.

I often see people apply this principle to their main table but completely forget about it in their secondary indexes. Usually, they want ordering across the entire table for a type of item. If they want to retrieve users alphabetically, they'll use a secondary index where all users have USERS as the partition key and the username as the sort key. Or, if they want ordering of the most recent orders in an ecommerce store, they'll use a secondary index where all orders have ORDERS as the partition key and the timestamp as the sort key.

This pattern can work for small-traffic applications where you won't come close to the DynamoDB partition throughput limits, but it's a dangerous pattern for a high-traffic application. All of your traffic may be funneled to a single physical partition, and you can quickly hit the write throughput limits for that partition.

Further, and most dangerously, this can cause problems for your main table. If your secondary index is getting write throttled during replication, the replication queue will back up. If this queue backs up too much, DynamoDB will start rejecting writes on your main table.

This is designed to help you -- DynamoDB wants to limit the staleness of your secondary index, so it will keep you from having a secondary index with a large amount of lag. However, it can be a surprising situation that pops up when you're least expecting it.

Use sparse indexes as a global filter

People often think of secondary indexes as a way to replicate all of their data with a new primary key. However, not all of your data needs to end up in a secondary index. If an item doesn't have the attributes in the index's key schema, it won't be replicated to the index.

This can be really useful for providing a global filter on your data. The canonical example I use for this is a message inbox. In your main table, you might store all the messages for a particular user ordered by the time they were created.

But if you're like me, you have a lot of messages in your inbox. Further, you might treat unread messages as a 'todo' list, like little reminders to get back to someone. Accordingly, I usually only want to see the unread messages in my inbox.

You could use your secondary index to provide this global filter where unread == true. Perhaps your secondary index partition key is something like ${userId}#UNREAD, and the sort key is the timestamp of the message. When you create the message initially, it will include the secondary index partition key value and thus will be replicated to the unread messages secondary index. Later, when a user reads the message, you can change the status to READ and remove the secondary index partition key attribute. DynamoDB will then remove the message from your secondary index.
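A sketch of the unread-messages pattern, assuming a hypothetical `Messages` table keyed by `UserId` and `SentAt`, and a sparse global secondary index whose partition key attribute is named `UnreadPK`:

```python
def new_message_item(user_id: str, sent_at: str, body: str) -> dict:
    """Base-table item for a new, unread message (hypothetical schema)."""
    return {
        "UserId": {"S": user_id},
        "SentAt": {"S": sent_at},
        "Body": {"S": body},
        "Status": {"S": "UNREAD"},
        # Having this attribute is what places the item in the sparse index.
        "UnreadPK": {"S": f"{user_id}#UNREAD"},
    }


def mark_read_request(user_id: str, sent_at: str) -> dict:
    """UpdateItem that marks a message read and drops it from the sparse index."""
    return {
        "TableName": "Messages",
        "Key": {"UserId": {"S": user_id}, "SentAt": {"S": sent_at}},
        # REMOVE deletes the index partition key attribute, so DynamoDB
        # deletes the corresponding entry from the index.
        "UpdateExpression": "SET #status = :read REMOVE #unread",
        "ExpressionAttributeNames": {"#status": "Status", "#unread": "UnreadPK"},
        "ExpressionAttributeValues": {":read": {"S": "READ"}},
    }


def in_unread_index(item: dict) -> bool:
    """Toy check: a GSI only contains items that have all of its key attributes."""
    return "UnreadPK" in item and "SentAt" in item
```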

I use this trick all the time, and it's remarkably effective. Further, a sparse index will save you money. Any updates to read messages will not be replicated to the secondary index, and you'll save on write costs.

Narrow your secondary index projections to reduce index size and/or writes

For our last tip, let's take the previous point a little further. We just saw that DynamoDB won't include an item in your secondary index if the item doesn't have the key attributes for the index. A similar idea applies to the non-key attributes in your data!

When you create a secondary index, you can specify which attributes from the main table you want to include in the secondary index. This is called the projection of the index. You can choose to include all attributes from the main table, only the primary key attributes, or a subset of the attributes.
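In the API, the projection is part of the index definition. Here is a sketch of an `INCLUDE` projection for a hypothetical unread-messages index that copies only a couple of small attributes (`Sender` and `Subject` are illustrative names) rather than the whole message:

```python
def unread_index_definition() -> dict:
    """GSI definition with a narrow INCLUDE projection (hypothetical names)."""
    return {
        "IndexName": "UnreadIndex",
        "KeySchema": [
            {"AttributeName": "UnreadPK", "KeyType": "HASH"},
            {"AttributeName": "SentAt", "KeyType": "RANGE"},
        ],
        "Projection": {
            # Key attributes of the table and index are always projected;
            # INCLUDE adds only the listed non-key attributes.
            "ProjectionType": "INCLUDE",
            "NonKeyAttributes": ["Sender", "Subject"],
        },
    }
```

The other projection types are `ALL` and `KEYS_ONLY` (which takes no `NonKeyAttributes`). A dict like this goes in the `GlobalSecondaryIndexes` list passed to `create_table`.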

While it's tempting to include all attributes in your secondary index, this can be a costly mistake. Remember that every write to your main table that changes the value of a projected attribute will be replicated to your secondary index. A single secondary index with full projection effectively doubles the write costs for your table. Each additional fully projected secondary index increases your write costs by 1/(N + 1), where N is the number of secondary indexes before the new one.

Additionally, your write costs are based on the size of your item. Each write consumes one write capacity unit (WCU) per 1KB of item size, rounded up. If you're copying a 4KB item to your secondary index, you'll be paying the full 4 WCUs on both your main table and your secondary index.

Thus, there are two ways that you can save money by narrowing your secondary index projections. First, you can avoid certain writes altogether. If you have an update operation that doesn't touch any attributes in your secondary index projection, DynamoDB will skip the write to your secondary index. Second, for those writes that do replicate to your secondary index, you can save money by reducing the size of the item that is replicated.
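A simplified Python cost model of the two savings above, plus the per-index increase. It assumes standard (non-transactional) writes and deliberately ignores one detail: when an update changes an index key value, DynamoDB performs a delete plus a put in the index, so that case costs two index writes.

```python
import math
from fractions import Fraction


def wcu(size_bytes: int) -> int:
    """Standard write units: one per 1 KB of item size, rounded up (minimum 1)."""
    return max(1, math.ceil(size_bytes / 1024))


def index_write_wcu(changed_attrs: set, index_attrs: set, index_entry_bytes: int) -> int:
    """Simplified cost of replicating one base-table update to one GSI.

    index_attrs: the index's key attributes plus its projected attributes.
    Simplification: ignores the extra delete DynamoDB performs when an
    index key value changes, which makes that case cost two index writes.
    """
    if not changed_attrs & index_attrs:
        return 0  # nothing the index stores changed, so no index write
    return wcu(index_entry_bytes)


def added_index_cost_increase(existing_indexes: int) -> Fraction:
    """Relative write-cost increase from adding one more fully projected GSI."""
    # Each write costs (1 + N) units before the new index and (2 + N) after.
    return Fraction(1, existing_indexes + 1)
```

With these rules, a 4KB item costs 4 WCUs on the table and 4 more on a fully projected index, while an index entry narrowed to a few hundred bytes costs 1. An update that only touches attributes outside the index costs nothing on the index at all.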

This can be a tricky balance to get right. Secondary index projections cannot be changed after the index is created. If you find that you need additional attributes in your secondary index, you'll need to create a new index with the new projection and then delete the old index.

Should you use a secondary index?

Now that we've explored some practical advice around secondary indexes, let's take a step back and ask a more fundamental question -- should you use a secondary index at all?

As we've seen, secondary indexes help you access your data in a different way. However, this comes at the cost of the additional writes. Thus, my rule of thumb for secondary indexes is:

Use secondary indexes when the reduced read costs outweigh the increased write costs.

This seems obvious when you say it, but it can be counterintuitive as you're modeling. It seems so easy to say "Throw it in a secondary index" without thinking about other approaches.

To bring this home, let's look at two situations where secondary indexes might not make sense.

Lots of filterable attributes in small item collections

With DynamoDB, you generally want your primary keys to do your filtering for you. It irks me a little whenever I use a Query in DynamoDB but then perform my own filtering in my application -- why couldn't I just build that into the primary key?

Despite my visceral reaction, there are some situations where you might want to over-read your data and then filter in your application.

The most common place you'll see this is when you want to provide a lot of different filters on your data for your users, but the relevant data set is bounded.

Think of a workout tracker. You might want to allow users to filter on a lot of attributes, such as type of workout, intensity, duration, date, and so on. However, the number of workouts a user has is going to be manageable -- even a power user will take a while to exceed 1000 workouts. Rather than putting indexes on all of these attributes, you can just fetch all the user's workouts and then filter in your application.
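A sketch of the over-read approach: page through the user's whole item collection with Query, following `LastEvaluatedKey` because a single Query response returns at most 1MB of data, then apply whichever filters the user picked. The `Workout` shape is hypothetical, and the mapping from DynamoDB items to it is omitted:

```python
from dataclasses import dataclass
from datetime import date
from typing import Callable


@dataclass
class Workout:
    kind: str
    intensity: str
    minutes: int
    day: date


def query_all(query_page: Callable[..., dict], params: dict) -> list:
    """Follow LastEvaluatedKey until the whole item collection has been read.

    query_page: any callable shaped like boto3's client.query
    (keyword arguments in, response dict out).
    """
    items, start_key = [], None
    while True:
        page_params = dict(params)
        if start_key is not None:
            page_params["ExclusiveStartKey"] = start_key
        page = query_page(**page_params)
        items.extend(page.get("Items", []))
        start_key = page.get("LastEvaluatedKey")
        if start_key is None:
            return items


def filter_workouts(workouts, kind=None, intensity=None, min_minutes=None, since=None):
    """Apply any combination of optional filters in application code."""
    return [
        w for w in workouts
        if (kind is None or w.kind == kind)
        and (intensity is None or w.intensity == intensity)
        and (min_minutes is None or w.minutes >= min_minutes)
        and (since is None or w.day >= since)
    ]
```

Adding a new filter here is a one-line change, whereas adding it as an index would mean a new GSI and extra write costs on every workout.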

This is where I recommend doing the math. DynamoDB makes it easy to calculate these two options and get a sense of which one will work better for your application.
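A back-of-the-envelope version of that math. The workload numbers are made up for illustration; the unit rules (a Query sums item sizes and rounds up to 4KB per read unit, eventually consistent reads cost half, writes cost one unit per 1KB) follow DynamoDB's capacity model:

```python
import math


def query_rcu(total_bytes: int, eventually_consistent: bool = True) -> float:
    """Read units for one Query: item sizes are summed, then rounded up to 4 KB.

    Eventually consistent reads cost half as much as strongly consistent ones.
    """
    units = math.ceil(total_bytes / 4096)
    return units / 2 if eventually_consistent else float(units)


def write_units_per_item(item_bytes: int) -> int:
    """One write unit per 1 KB of item size, rounded up."""
    return max(1, math.ceil(item_bytes / 1024))


# Hypothetical workload for one user (illustrative, not measured data).
WORKOUTS = 1_000      # items in the user's collection
ITEM_BYTES = 400      # average workout item size
MATCHING = 20         # items a typical filter returns
EXTRA_INDEXES = 3     # GSIs we would add to support the filters

# Option A: read the whole collection and filter in the application.
over_read_rcu = query_rcu(WORKOUTS * ITEM_BYTES)
# Option B: a targeted index query that returns only matching items...
index_read_rcu = query_rcu(MATCHING * ITEM_BYTES)
# ...paid for with extra index writes on every workout write.
extra_wcu_per_write = EXTRA_INDEXES * write_units_per_item(ITEM_BYTES)
```

Here a full over-read costs 49 read units versus 1 for the targeted read, but every workout write pays 3 extra write units. Multiply each figure by how often it happens and by your region's price per read or write unit (write units cost more than read units) before deciding.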

Lots of filterable attributes in large item collections

Let's change our situation a bit -- what if our item collection is large? What if we're building a workout tracker for a gym, and we want to allow the gym owner to filter on all of the attributes we mentioned above for all the users in the gym?

This changes the situation. Now we're talking about hundreds or even thousands of users, each with hundreds or thousands of workouts. It won't make sense to over-read the entire item collection and do post-hoc filtering on the results.

But secondary indexes don't really make sense here either. Secondary indexes are good for known access patterns where you can count on the relevant filters being present. If we want our gym owner to be able to filter on a variety of attributes, all of which are optional, we'd need to create a large number of indexes to make this work.

We talked about the possible downsides of query planners before, but query planners have an upside too. In addition to allowing for more flexible queries, they can perform index intersection, combining partial results from multiple indexes to answer a single query. You can do the same thing with DynamoDB, but it's going to result in a lot of back and forth with your application, along with some complex application logic to coordinate it.

When I have these types of problems, I generally look for a tool better suited for this use case. Rockset and Elasticsearch are my go-to recommendations here for providing flexible, secondary-index-like filtering across your dataset.

Conclusion

In this post, we learned about DynamoDB secondary indexes. First, we looked at some conceptual bits to understand how DynamoDB works and why secondary indexes are needed. Then, we reviewed some practical tips to understand how to use secondary indexes effectively and to learn their specific quirks. Finally, we looked at how to think about secondary indexes to see when you should use other approaches.

Secondary indexes are a powerful tool in your DynamoDB toolbox, but they're not a silver bullet. As with all DynamoDB data modeling, make sure you carefully consider your access patterns and count the costs before you jump in.

Learn more about how you can use Rockset for secondary-index-like filtering in Alex DeBrie's blog DynamoDB Filtering and Aggregation Queries Using SQL on Rockset.