Secondary Indexes For Analytics On DynamoDB

April 29, 2019

In this post I explore how to support analytical queries without encountering prohibitive scan costs, by leveraging secondary indexes in DynamoDB. I also evaluate the pros and cons of this approach in contrast to extracting data to another system like Athena, Spark or Elastic.

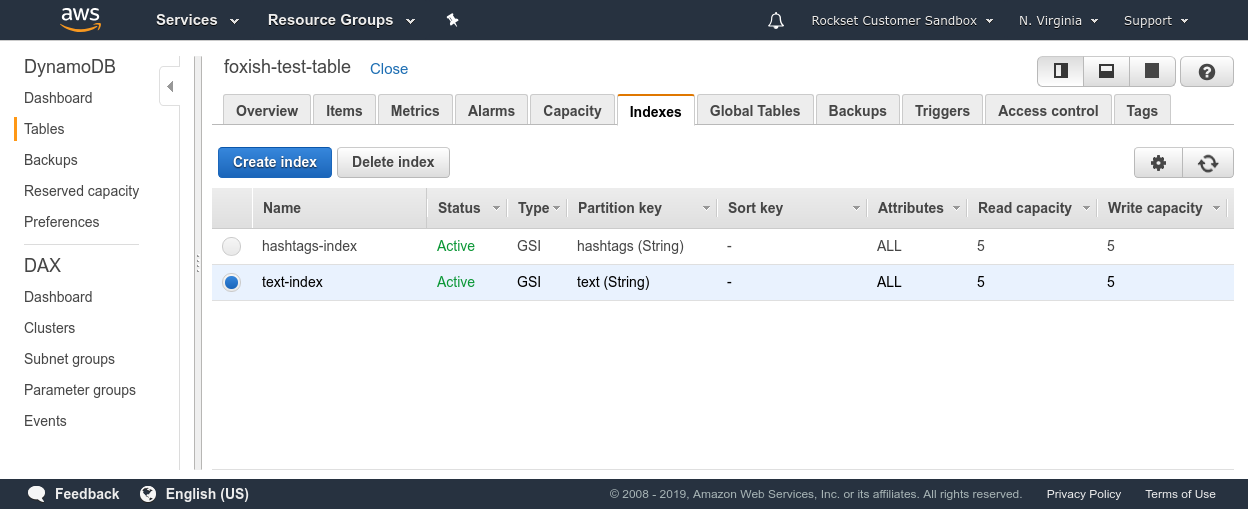

Rockset recently added support for DynamoDB - which basically means you can run fast SQL on DynamoDB tables without any ETL. As I spoke to our users, I came across different ways in which global secondary indexes (GSI) are used for analytical queries.

DynamoDB stores data under the hood by partitioning it over a large number of nodes based on a user-specified partition key field present in each item. This user-specified partition key can be optionally combined with a sort key to represent a primary key. The primary key acts as an index, making query operations on it inexpensive. A query operation can do equality comparison (=) on the partition key and comparative operations (>, <, =, BETWEEN) on the sort key if specified. Performing operations that are not covered by the above scheme requires the use of a scan operation, which is typically executed by scanning over the entire DynamoDB table in parallel. These scans can be slow and expensive in terms of Read Capacity Units (RCUs) because they require a full read of the entire table. Scans also tend to slow down when the table size grows as there is more data to scan to produce results.

If we want to support analytical queries without encountering prohibitive scan costs, we can leverage secondary indexes in DynamoDB. Secondary indexes also consist of creating partition keys and optional sort keys over fields that we want to query over in much the same way as the primary key. Secondary indexes are often used to improve application performance by indexing fields which are queried very often. Query operations on secondary indexes can also be used to power specific features through analytic queries that have clearly defined requirements—like computing a leaderboard in a game. One clear advantage of this approach of performing analytical queries is that there is no need for any other system.

However, it is infeasible to use this approach for a wider range of analytical queries because of the limited types of queries it supports. The full gamut of analytics requires filtering on multiple fields, grouping, ordering, joining data between data sets, etc., which cannot be achieved simply through secondary indexes. Secondary indexes that can be created are also limited in number and require some planning to ensure that they scale well with the data. A badly chosen partition key can worsen performance and increase costs significantly. Data in DynamoDB can have a nested structure including arrays and objects, but indexes can only be built on certain primitive types. This can force denormalizing of the data to flatten nested objects and arrays in order to build secondary indexes, which could potentially explode the number of writes performed and associated costs. Apart from cost and flexibility, there are also security and performance considerations when it comes to supporting analytic use cases on an operational data store in a production environment.

Advantages

- No additional setup outside DynamoDB

- Fast and scalable serving for basic analytical queries over indexed fields

Disadvantages

- Expensive when queries require scans over DynamoDB

- Very limited support for analytical queries over indexes; no SQL queries, grouping, or joins

- Cannot set up indexes on nested fields without denormalizing data and exploding out writes

- Security and performance implications of running analytical queries on an operational database

This approach may be suitable if we have an application that requires a specific feature that is simple enough to be realized using a query over an index. The increased storage and I/O cost and the limited query ability make it unsuitable for the wider range of analytical queries otherwise. Therefore, for a majority of analytic use cases, it is cost effective to export the data from DynamoDB into a different system that allows us to query with higher fidelity.

If you are considering extracting data to another system, there are several different options for real-time analytics:

- DynamoDB + Glue + S3 + Athena

- DynamoDB + Hive/Spark

- DynamoDB + AWS Lambda + Elasticsearch

- DynamoDB + Rockset

I compare each of these in terms of ease of setup, maintenance, query capability, latency in my other blog post Analytics on DynamoDB: Comparing Athena, Spark and Elastic, where I also evaluate which use cases each of them are best suited for.

Other DynamoDB resources:

- DynamoDB Filtering and Aggregation Queries Using SQL on Rockset

- Real-Time Analytics on DynamoDB - Using DynamoDB Streams with Lambda and ElastiCache

- Tableau Operational Dashboards and Reporting on DynamoDB - Evaluating Redshift and Athena

- Using Tableau with DynamoDB: How to Build a Real-Time SQL Dashboard on NoSQL Data